Machine Generated Data

Tags

Amazon

created on 2019-06-04

Clarifai

created on 2019-06-04

Imagga

created on 2019-06-04

| Tag | Confidence |
|---|---|
| chair | 35.5 |
| building | 19.3 |
| man | 18.8 |
| musical instrument | 17.4 |
| seat | 16.8 |
| city | 16.6 |
| architecture | 15.7 |
| people | 15.6 |
| old | 14.6 |
| room | 14.5 |
| interior | 14.1 |
| table | 13.8 |
| person | 13.6 |
| urban | 13.1 |
| street | 12.9 |
| shopping cart | 12.8 |
| house | 12.5 |
| male | 12.1 |
| wind instrument | 11.9 |
| furniture | 11.8 |
| accordion | 11.6 |
| sitting | 11.2 |
| restaurant | 10.7 |
| sun | 10.5 |
| summer | 10.3 |
| dark | 10 |
| dirty | 9.9 |
| handcart | 9.8 |
| chairs | 9.8 |
| keyboard instrument | 9.8 |
| sax | 9.7 |
| outdoor | 9.2 |
| style | 8.9 |
| wall | 8.5 |
| business | 8.5 |
| life | 8.4 |
| wood | 8.3 |
| sky | 8.3 |
| inside | 8.3 |
| window | 8.2 |
| home | 8 |
| working | 8 |
| work | 7.8 |
| empty | 7.7 |
| tree | 7.7 |
| wheeled vehicle | 7.7 |
| folding chair | 7.7 |
| fashion | 7.5 |
| monument | 7.5 |
| device | 7.4 |
| holding | 7.4 |
| historic | 7.3 |
| protection | 7.3 |
| danger | 7.3 |
| black | 7.2 |
| suit | 7.2 |
| portrait | 7.1 |
| travel | 7 |
| modern | 7 |

Google

created on 2019-06-04

| Tag | Confidence |
|---|---|
| Snapshot | 84.5 |
| Room | 78 |
| Vintage clothing | 69.4 |
| Monochrome | 60.1 |
| Family | 54.3 |
| History | 54.1 |
| Art | 50.2 |

Color Analysis

Face analysis


AWS Rekognition

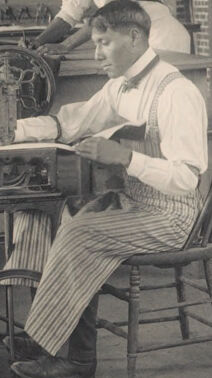

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 54.8% |
| Angry | 45.2% |
| Calm | 47.9% |
| Sad | 50.8% |
| Confused | 45.2% |
| Disgusted | 45.5% |
| Happy | 45.2% |
| Surprised | 45.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 53.4% |
| Disgusted | 45.5% |
| Confused | 45.2% |
| Surprised | 45.3% |
| Calm | 51.6% |
| Happy | 45.8% |
| Angry | 45.4% |
| Sad | 46.3% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 53.3% |
| Angry | 45.3% |
| Surprised | 45% |
| Disgusted | 45.1% |
| Happy | 45% |
| Calm | 45.3% |
| Sad | 54.1% |
| Confused | 45.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 50.5% |
| Sad | 48.1% |
| Happy | 45.3% |
| Surprised | 45.5% |
| Disgusted | 46% |
| Angry | 46.1% |
| Calm | 48.5% |
| Confused | 45.5% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Female, 50.6% |
| Disgusted | 50.7% |
| Calm | 47% |
| Surprised | 45.3% |
| Angry | 45.3% |
| Sad | 45.3% |
| Confused | 45.2% |
| Happy | 46.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 20-38 |
| Gender | Female, 50.8% |
| Surprised | 45.3% |
| Sad | 46.6% |
| Happy | 45.2% |
| Disgusted | 45.3% |
| Confused | 45.2% |
| Calm | 50.6% |
| Angry | 46.8% |

Imagga

| Traits | no traits identified |

Imagga

| Traits | no traits identified |

Imagga

| Traits | no traits identified |

Imagga

| Traits | no traits identified |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| streetview architecture | 59.7% |
| interior objects | 38.7% |
| paintings art | 1.2% |

Captions

Microsoft

created by unknown on 2019-06-04

| Caption | Confidence |
|---|---|
| a group of people sitting in a chair in front of a building | 91.9% |
| a group of people sitting on a chair in front of a building | 90.1% |
| a group of people sitting in front of a building | 90% |

Clarifai

created by general-english-image-caption-blip on 2025-05-16

| Caption | Confidence |
|---|---|
| a photograph of a group of men in uniforms working on machines | -100% |

Text analysis

5 0

5

0