Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 60-90 |
| Gender | Male, 99.7% |
| Happy | 1.5% |
| Sad | 43.4% |
| Disgusted | 0.9% |
| Angry | 4% |
| Calm | 43.1% |
| Surprised | 1.2% |
| Confused | 5.8% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 91.1% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 47.4% |
| food drinks | 26.3% |
| interior objects | 10.9% |
| pets animals | 6.8% |
| nature landscape | 4.7% |
| text visuals | 2% |

Captions

Microsoft

Created on 2019-03-22

| Caption | Confidence |
| --- | --- |
| a screen shot of a social media post | 53.8% |

OpenAI GPT

Created by gpt-4 on 2024-12-23

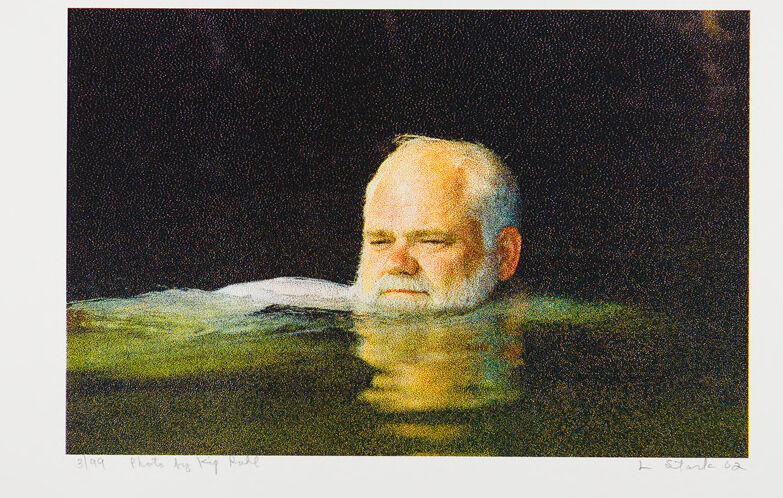

This image depicts artwork featuring a person facing sideways, placed against a dark backdrop that fades into a lighter area resembling a horizon or a reflective surface akin to water. The artwork exhibits a sense of calmness and depth with its soothing colors and indistinct boundaries. The signature of the artist, as well as some text or numbers, can be seen at the bottom corners, suggesting it might be a limited print or a numbered piece in a series. The use of color and shadow gives the piece an evocative and perhaps contemplative character.

Created by gpt-4o-2024-05-13 on 2024-12-23

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-12-23

The image depicts a close-up view of a person's head and upper body partially submerged in water, with only the face and shoulders visible above the surface. The person appears to have a beard and a serene, contemplative expression on their face. The background is dark, creating a somber and reflective atmosphere around the subject. The image conveys a sense of tranquility and introspection.

Created by claude-3-5-sonnet-20241022 on 2024-12-23

This is an artistic photograph showing someone partially submerged in water, with just their head visible above the surface. The image has a dark, moody atmosphere with the water appearing greenish in color against a black background. The subject appears to have their eyes closed and seems to be floating peacefully. The photograph has an ethereal, contemplative quality to it and appears to be professionally shot. The composition is striking in its simplicity, with the head emerging from the water creating an interesting visual divide between above and below the surface.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-23

The image depicts a man submerged in water, with his head above the surface. He is positioned centrally in the frame, facing forward and to the right. His eyes are closed, and he appears to be in a state of relaxation or even sleep. The man's face is illuminated by a light source, which casts a warm glow on his features. His skin tone is fair, and he has a prominent forehead, nose, and chin. His hair is white and thinning, with a few strands visible on top of his head. A small patch of hair is also visible on his chin. The man's body is mostly submerged in the water, with only his head and shoulders visible above the surface. The water is dark and murky, reflecting the light from the surrounding environment. The background of the image is a deep, rich brown, which adds to the overall sense of depth and atmosphere. The image is signed by the artist in the bottom-right corner, but the signature is illegible. The overall effect of the image is one of serenity and tranquility, capturing a moment of peacefulness and stillness.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-23

The image is a painting of a man with white hair and a beard, floating in water. The man's head is above the waterline, and his eyes are closed. He appears to be relaxed or sleeping. The background of the painting is dark, which suggests that it may be nighttime or that the man is in a dimly lit area. The overall atmosphere of the painting is peaceful and serene, with the man's calm expression and the gentle ripples in the water creating a sense of tranquility. The painting style is impressionistic, with loose brushstrokes and vivid colors used to capture the play of light on the water and the man's features. The artist has also used a range of blues and greens to depict the water, which adds to the sense of depth and movement in the painting. Overall, the painting is a beautiful and evocative depiction of a moment of relaxation and contemplation. It invites the viewer to step into the peaceful world of the painting and experience the serenity of the scene for themselves.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-26

The image features a man swimming in a pool, captured in a portrait-oriented format. The man is bald and has a white beard. He is swimming with his eyes closed, suggesting a sense of tranquility or meditation. The water is dark, and the man's reflection is visible in the water, adding to the serene atmosphere of the image. The image has a black border, giving it a classic and timeless feel. The text "3199" and "I Stark 02" is written in the bottom left and right corners, respectively, possibly indicating the edition number or the artist's signature.

Created by amazon.nova-pro-v1:0 on 2025-02-26

An elderly man with white hair and a white beard is floating in the water, probably in a swimming pool, and is probably meditating. He is wearing a white shirt, and his eyes are closed. The water is green and clear, reflecting the man's image. The image has a watermark with the text "Photo by Ralf R." in the lower left corner. The image is printed on a white sheet of paper.