Machine Generated Data

Tags

Amazon

created on 2019-05-29

Clarifai

created on 2019-05-29

Imagga

created on 2019-05-29

Google

created on 2019-05-29

| Tag | Confidence |
|---|---|
| Photograph | 97 |
| Snapshot | 86.1 |
| Black-and-white | 74.4 |
| Gentleman | 72.7 |
| Sitting | 71.1 |
| Photography | 67.8 |
| Monochrome | 60.1 |
| Stock photography | 59.4 |
| Vintage clothing | 53.5 |
| White-collar worker | 52.2 |

Microsoft

created on 2019-05-29

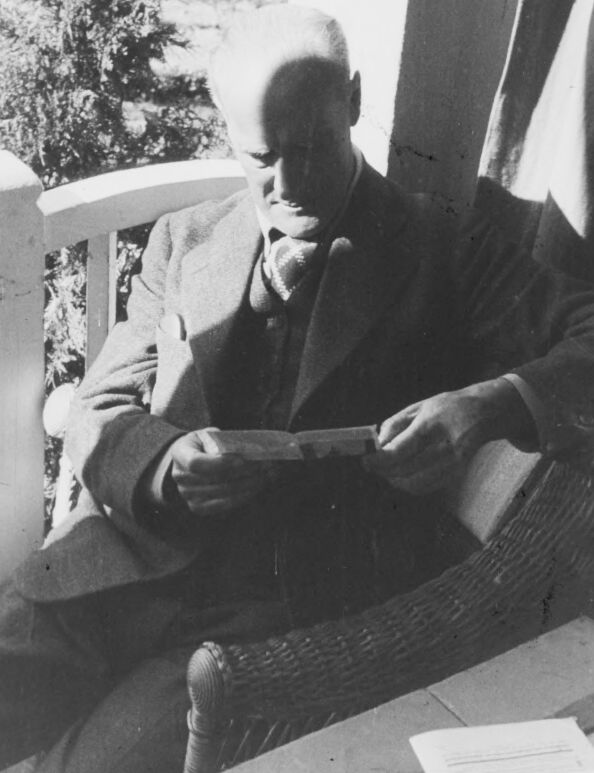

| Tag | Confidence |
|---|---|
| person | 99.6 |
| clothing | 98 |
| man | 96.6 |
| human face | 93.6 |
| outdoor | 89.8 |
| black and white | 89.2 |
| old | 40.6 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 35-52 |
| Gender | Female, 53.1% |
| Happy | 3% |
| Surprised | 3.8% |
| Confused | 4.5% |
| Angry | 7.9% |
| Sad | 18.2% |
| Calm | 59.9% |
| Disgusted | 2.7% |

Feature analysis

Amazon

| Feature | Confidence |
|---|---|
| Person | 96.7% |

Categories

Imagga

| Category | Confidence |
|---|---|
| people portraits | 37.7% |
| paintings art | 31.7% |
| streetview architecture | 17.1% |
| events parties | 9.8% |
| nature landscape | 1.4% |
| food drinks | 1.2% |

Captions

Microsoft

created on 2019-05-29

| Caption | Confidence |
|---|---|
| a man sitting on a bench | 89.2% |
| a man is sitting on a bench | 84.8% |
| a man that is sitting on a bench | 84.7% |