Machine Generated Data

Tags

Amazon

created on 2019-05-29

Clarifai

created on 2019-05-29

Imagga

created on 2019-05-29

| Tag | Score |
|---|---|
| person | 27.9 |
| man | 26.2 |
| adult | 25.5 |
| sitting | 24 |
| people | 24 |
| male | 23.9 |
| lifestyle | 23.1 |
| child | 19.9 |
| happy | 18.2 |
| attractive | 17.5 |
| sofa | 15.9 |
| holding | 14.9 |
| smiling | 14.5 |
| cute | 14.3 |
| portrait | 14.2 |
| outdoors | 14.2 |
| wicker | 13.8 |
| fashion | 13.6 |
| home | 13.6 |
| couch | 13.5 |
| women | 13.4 |
| love | 13.4 |
| couple | 13.1 |
| lady | 13 |
| human | 12.7 |
| room | 12.3 |
| face | 12.1 |
| clothing | 12 |
| pretty | 11.9 |
| chair | 11.5 |
| one | 11.2 |
| casual | 11 |
| work | 10.9 |
| armchair | 10.8 |
| cheerful | 10.6 |
| old | 10.4 |
| looking | 10.4 |
| furniture | 10.2 |
| happiness | 10.2 |
| model | 10.1 |
| relax | 10.1 |
| relaxation | 10 |
| relaxing | 10 |
| park | 9.9 |
| family | 9.8 |
| kid | 9.7 |
| together | 9.6 |
| sexy | 9.6 |
| expression | 9.4 |
| senior | 9.4 |
| product | 9.3 |
| guy | 9.3 |
| summer | 9 |
| seat | 8.8 |
| indoors | 8.8 |
| hair | 8.7 |
| boy | 8.7 |
| youth | 8.5 |
| two | 8.5 |
| black | 8.4 |
| elegance | 8.4 |
| joy | 8.4 |
| grandfather | 8.3 |
| fun | 8.2 |
| bench | 8.2 |
| dress | 8.1 |
| body | 8 |
| look | 7.9 |
| husband | 7.8 |
| wall | 7.7 |
| trainer | 7.6 |
| loving | 7.6 |
| laptop | 7.6 |
| leisure | 7.5 |
| 20s | 7.3 |
| rest | 7.3 |
| sensuality | 7.3 |
| musical instrument | 7 |

Google

created on 2019-05-29

| Tag | Score |
|---|---|
| Photograph | 95.8 |
| Snapshot | 82 |
| Black-and-white | 80.7 |
| Sitting | 76.4 |
| Stock photography | 70.9 |
| Monochrome | 67.6 |
| Monochrome photography | 64.7 |
| Photography | 62.4 |
| Gentleman | 54.5 |
| Style | 51 |

Microsoft

created on 2019-05-29

| Tag | Score |
|---|---|
| clothing | 95 |
| person | 91.1 |
| black and white | 86.6 |
| man | 80.4 |
| human face | 60.1 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

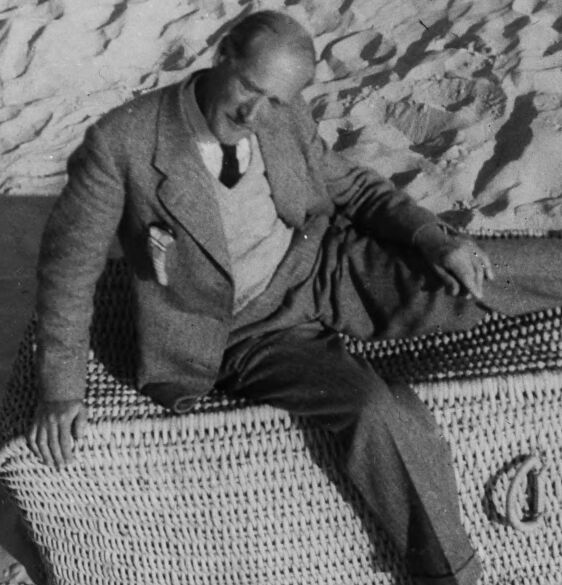

| Attribute | Value |
|---|---|
| Age | 48-68 |
| Gender | Male, 97.7% |
| Sad | 6% |
| Surprised | 1% |
| Confused | 6.2% |
| Calm | 81% |
| Happy | 1.6% |
| Angry | 3.6% |
| Disgusted | 0.6% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 74.3% |
| Angry | 4.1% |
| Calm | 80% |
| Sad | 3.4% |
| Disgusted | 6.3% |
| Surprised | 2.7% |
| Happy | 1.6% |
| Confused | 1.9% |

Feature analysis

Amazon

Person

| Feature | Score |
|---|---|
| Person | 97.1% |

Categories

Imagga

| Category | Score |
|---|---|
| streetview architecture | 58.7% |
| nature landscape | 16.4% |
| interior objects | 11.8% |
| paintings art | 7% |
| pets animals | 2.6% |
| beaches seaside | 1.4% |

Captions

Microsoft

created on 2019-05-29

| Caption | Score |
|---|---|
| a person sitting on a bed | 57.6% |
| a person sitting in a bag | 57.5% |
| a person sitting on a suitcase | 57.4% |