Machine Generated Data

Face analysis

AWS Rekognition

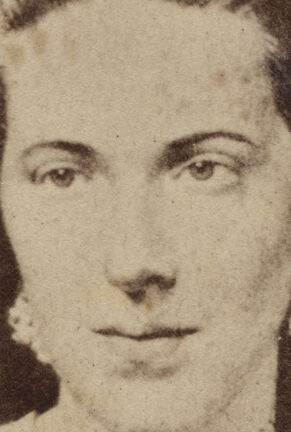

| Attribute | Value |
| --- | --- |
| Age | 19-31 |
| Gender | Female, 93.3% |
| Calm | 99.3% |
| Sad | 0.1% |
| Angry | 0.1% |
| Disgusted | 0% |
| Happy | 0.3% |
| Fear | 0% |
| Surprised | 0.1% |
| Confused | 0.1% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 32 |
| Gender | Female |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 99% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 92.7% |
| people portraits | 5.8% |

Captions

Microsoft

Created on 2019-10-04

| Caption | Confidence |
| --- | --- |
| an old picture of a person | 57.6% |
| a person posing for the camera | 57.5% |
| a close up of a womans face | 57.4% |

Azure OpenAI

Created on 2024-11-15

This is an old sepia-toned photograph of the torso and partial head of an individual who appears to be wearing a dark dress or outfit with a prominent collar or lapel decoration that includes a bow and possibly a pinned ornament. The clothing style suggests it may be a historical photo from the 19th or early 20th century. The photo is mounted on a card with decorative borders and contains the text "Campbell & Ecker" and "Louisville, Ky," which might refer to the photography studio and its location. The card's edges are slightly worn, indicating age. The composition and style are consistent with portrait photography from the Victorian era or the carte de visite format, which was popular during that time.

Anthropic Claude

Created on 2024-11-14

The image appears to be an old photographic portrait of a woman. The woman has curled, shoulder-length hair and is wearing a dress with a bow tie or accessory at the neck. The portrait is printed on what looks like a vintage cabinet card, with the photographer's name "Campbell & Ecker" and the location "Louisville, Ky" printed at the bottom.

Meta Llama

Created on 2024-11-21

The image is a vintage photograph of a woman, likely from the 19th century. The photograph is in sepia tone and features a woman with dark hair styled in an updo, wearing a dress with a high neckline and a bow at the collar. The woman's attire suggests a formal or semi-formal occasion. The photograph is mounted on a card with a decorative border, which includes the names "Campbell & Ecker" and "Louisville, Ky." in cursive script at the bottom. The card appears to be aged, with visible signs of wear and tear, such as creases, tears, and discoloration. The background of the image is a plain gray color, which helps to focus attention on the photograph itself. Overall, the image presents a nostalgic and elegant portrait of a woman from a bygone era.