Machine Generated Data

Tags

Color Analysis

Face analysis

Amazon

Microsoft

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 20-38 |
| Gender | Female, 52.5% |
| Confused | 45.4% |
| Angry | 45.8% |
| Sad | 48.9% |
| Happy | 46% |
| Disgusted | 45.5% |
| Surprised | 45.7% |
| Calm | 47.7% |

Feature analysis

Amazon

| Person | 98.5% | |

Categories

Imagga

| paintings art | 98% | |

Captions

Microsoft

Created by unknown on 2019-04-17

| Caption | Confidence |
| --- | --- |
| a vintage photo of a person | 87.9% |
| a vintage photo of a person holding a book | 56.1% |
| a vintage photo of a group of people posing for the camera | 56% |

Clarifai

Created by general-english-image-caption-blip-2 on 2025-06-30

| Caption | Confidence |
| --- | --- |
| an engraving of a woman and men in a room | -100% |
| a photograph of a painting of a woman in a dress and a man in a suit | -100% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-31

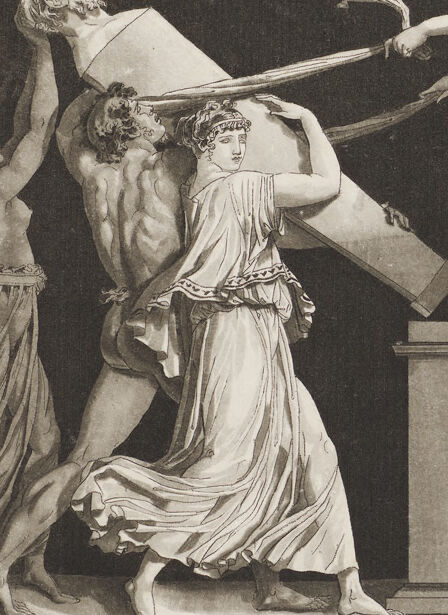

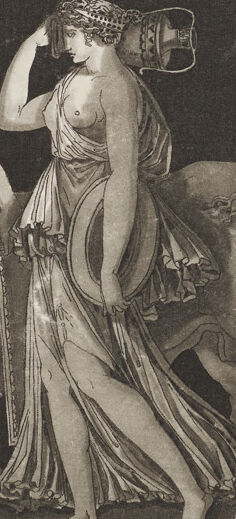

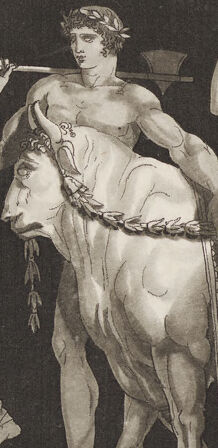

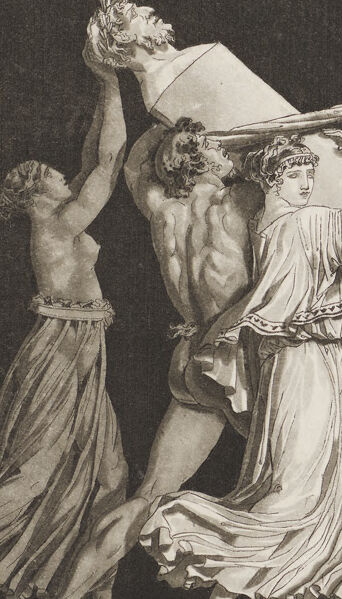

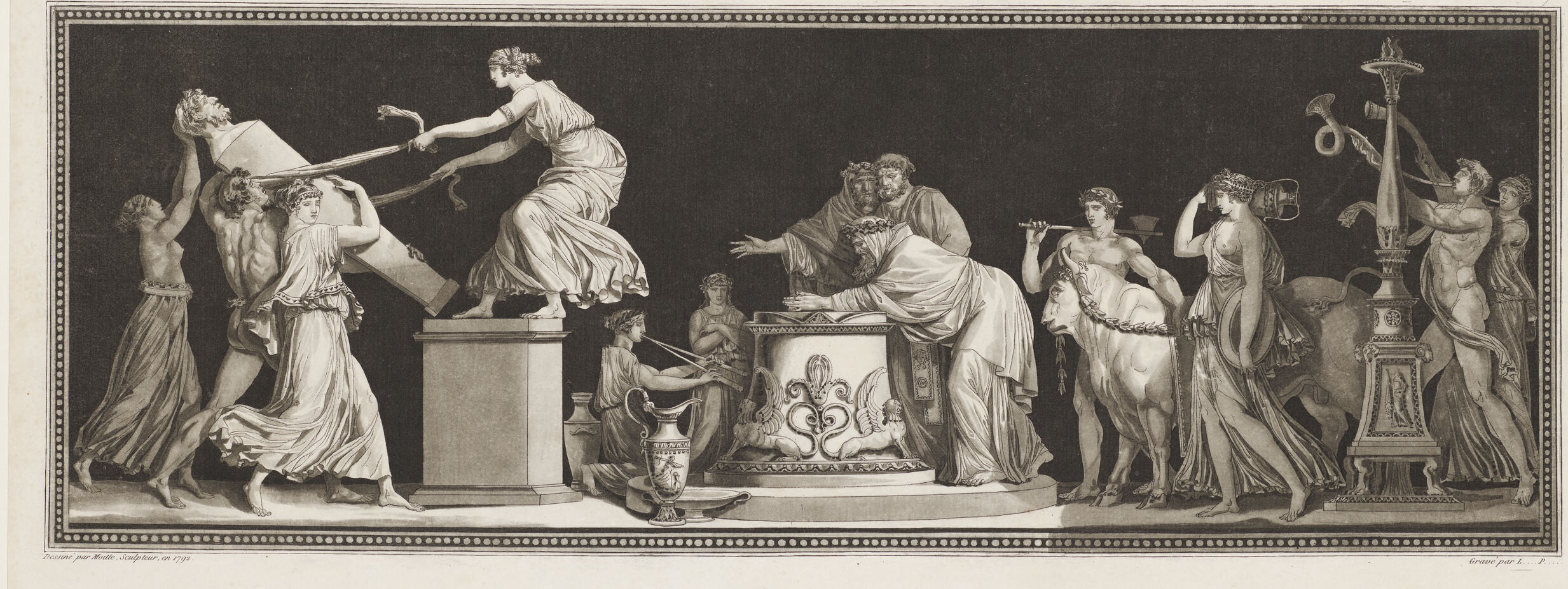

The image depicts a classical scene reminiscent of ancient Greek or Roman relief sculpture. There are several figures in various poses, engaged in what appears to be a ritualistic or ceremonial activity.

On the left side, a group of figures is interacting with a large object, possibly a statue or an offering, being hoisted or positioned by a couple of people. One figure, standing on a pedestal, is reaching out and seems to be guiding the object.

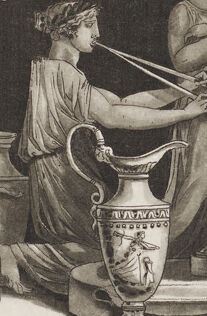

In the center, a large ornate altar or pedestal is depicted. A figure is bent over this central structure, while other figures nearby are involved in various activities, including one figure who appears to be playing a musical instrument.

To the right, more figures are shown in motion, with another central object that looks like an ancient lampstand or another ceremonial artifact. One of the figures is holding a horn-like instrument, and another one is holding a staff or spear.

The scene overall suggests a narrative involving worship, sacrifice, or celebration, characteristic of ancient civilizations. The figures are adorned in flowing, classical garments, emphasizing the historical and cultural theme of the artwork.

Created by gpt-4 on 2024-01-27

This black and white image appears to be a classical engraving depicting a ritual or ceremonial scene. A central figure in classical attire reaches out over an altar adorned with detailed ornamentation. This figure is surrounded by other individuals in flowing robes engaged in various dynamic actions—some interact with the altar, while others seem to be partaking in a procession or carrying ceremonial objects such as urns and musical instruments. The composition is rich with patterned textiles, classical motifs, and a sense of movement, evoking an atmosphere that's both solemn and active. The setting suggests an ancient or mythological context, likely drawing from Greco-Roman iconography. The frame within the image suggests this is an artwork created for print, with text underneath indicating it may be a plate from a collection or book, with references to Paris and potential publishers or creators' names, enhancing its historical value.

Anthropic Claude

Created by claude-3-haiku-48k-20240307 on 2024-03-29

This black and white engraved image depicts a complex, allegorical scene from what appears to be ancient Greek or Roman mythology. The central figure in the composition is a goddess or female deity, surrounded by various other figures representing mythological characters or personifications. The scene appears to be set in an ornate, temple-like setting, with architectural elements and decorative vessels and objects visible. The figures are shown in dramatic poses and gestures, conveying a sense of movement and dynamism within the static image. The overall style and subject matter suggest this is a high-quality work of classical or neoclassical art, likely created in the 18th or 19th century.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-26

This black and white classical artwork appears to be an engraving or print depicting a lively Bacchanalian scene or ancient Roman/Greek celebration. The composition shows multiple figures in flowing robes and classical dress engaged in an animated dance or celebration. The figures are arranged dynamically, with some appearing to leap or move energetically across the scene. Decorative pedestals or columns are interspersed throughout the image, and the overall style suggests the neoclassical period. The artwork has a theatrical quality, with dramatic poses and flowing drapery. The scene is framed with a decorative border of dots or beading around the edge. At the bottom of the image, there appears to be text in French, suggesting this might be from a French publication or artist.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

The image depicts a black-and-white illustration of a scene from ancient Greek mythology, featuring various figures and objects. The central figure is a woman in a flowing robe, standing on a pedestal and holding a staff. To her left, a man is shown being pulled by two other men, while to her right, a group of people are gathered around a table with a snake on it.

In the background, there are several other figures, including a man playing a musical instrument, a woman holding a torch, and a centaur. The overall atmosphere of the image suggests a sense of drama and tension, as if the characters are engaged in some kind of ritual or ceremony.

The illustration is surrounded by a decorative border, which adds to the overall sense of grandeur and importance. The use of black and white gives the image a timeless quality, making it difficult to determine when it was created. Overall, the image is a striking representation of ancient Greek mythology, capturing the imagination and conveying a sense of drama and intrigue.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-01

The image depicts a black and white engraving of a scene from ancient Greek mythology, showcasing a group of people in various poses around a central figure. The engraving is surrounded by a decorative border, adding to its aesthetic appeal.

Key Elements:

- Central Figure: A woman sits on a pedestal, surrounded by a group of people in various poses.

- Figures: The figures are dressed in classical Greek attire, with some holding objects such as a torch, a lyre, and a vase.

- Decorative Border: The engraving is surrounded by a decorative border, which adds to its aesthetic appeal.

- Background: The background of the engraving is dark, which helps to highlight the figures and their surroundings.

Overall Impression:

The engraving appears to be a representation of a scene from ancient Greek mythology, possibly depicting a mythological figure or event. The use of classical Greek attire and the inclusion of mythological objects suggest that the engraving is intended to evoke a sense of history and culture. The decorative border and dark background add to the engraving's aesthetic appeal, making it a visually striking piece of art.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-24

The image is a black-and-white print featuring a large, intricate scene with multiple figures. The scene appears to be a historical or mythological tableau, possibly inspired by classical art. The central focus is on a group of figures, including a woman holding a hammer and a man lying on a bed, surrounded by other characters. The figures are depicted in classical attire, with flowing robes and intricate details. The scene is framed by a decorative border, and the image has a monochromatic, artistic quality.

Created by amazon.nova-pro-v1:0 on 2025-02-24

The image is a black-and-white drawing of a group of people in ancient Greek attire. The people are arranged in a line, with some standing on pedestals. Some of them are holding objects, such as a flute, a torch, and a vase. A man in the middle is sitting on a pedestal, while others are standing. A watermark is at the bottom of the drawing.

Google Gemini

Created by gemini-2.0-flash on 2025-04-27

The image is an engraving in a black and white style, depicting a scene with several figures in classical attire, reminiscent of ancient Greek or Roman art. The composition is framed by a decorative border.

On the left side, three figures are carrying a large object, possibly a stone sarcophagus or a sculpted block. One of the figures is a woman, and the group appears to be struggling with the weight of the object. Above them, a woman stands on a pedestal, extending her arms to help maneuver the object into place.

In the center, there is an altar with two figures attending to it. One is kneeling and using a bellows, while the other stands and oversees the activity. Behind them, two additional figures stand in conversation.

To the right, a man leads a bull with a rope, followed by a woman carrying a round object. Further to the right, there's a tall, ornate lampstand with various musical instruments hanging from it, accompanied by two more figures.

The overall scene conveys a sense of a ritual or ceremonial procession, possibly related to a religious or commemorative event. The style and attire suggest a historical or mythological context.

Created by gemini-2.0-flash-lite on 2025-04-27

This is a detailed, grayscale print, likely an etching or engraving, depicting a classical or neoclassical scene. It's framed with a decorative border and the composition is divided into several distinct groupings or vignettes. Here's a breakdown:

Overall Scene:

- The scene is set against a dark background, emphasizing the figures and objects in the foreground.

- The figures are primarily draped in classical-style garments, suggesting a Greek or Roman influence.

- The composition evokes a sense of solemnity and ritual.

Groupings:

Bringing Objects:

- Figures carry or move what appears to be a bust or relief, along with another heavy object, possibly a tablet.

- A woman is standing on a pedestal, seemingly involved in guiding the process, while holding a ribbon or snake.

Ritual/Ceremony at an Altar:

- A central altar is decorated with ornate carvings and surrounded by figures, some of whom appear to be priests.

- One figure is reading or studying from an open book or tablet resting on the altar.

- Another figure is possibly offering incense.

- A figure plays a wind instrument.

Sacrifice/Procession:

- Figures accompany a cow or bull that appears to be part of a procession or sacrifice.

More Figures:

- Additional figures appear with a torch and horns.

Additional Details:

- The print is labeled with text in French, giving information about the location and artist.

- The overall style and subject matter are characteristic of neoclassical art.

In conclusion, the image is a meticulously rendered print that depicts a multi-part classical scene, likely of a religious or ceremonial nature, filled with symbolism and carefully composed figures.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-04-27

The image depicts a classical scene, likely inspired by Greek or Roman mythology, featuring a group of figures engaged in various activities. The scene is framed by a decorative border, and the entire composition is in black and white, suggesting it is an engraving or etching.

From left to right, the figures and their activities are as follows:

- A woman in flowing robes appears to be holding a large object, possibly a shield or a mirror, while a man next to her is holding a staff or spear.

- Another woman, dressed in classical attire, is holding a large horn or cornucopia, symbolizing abundance or prosperity.

- A seated figure, possibly a sage or philosopher, is engaged in conversation or instruction with a younger figure who is kneeling before him.

- A centaur, a mythical creature with the upper body of a human and the lower body of a horse, is playing a lyre, a stringed musical instrument.

- A woman is holding a large urn or vase, possibly pouring something from it.

- Another woman is holding a torch or staff, with a serpent wrapped around it, symbolizing wisdom or healing.

- A man is holding a large hammer or mallet, possibly representing craftsmanship or labor.

- A woman is holding a large wreath or garland, symbolizing victory or honor.

The central figure, who appears to be the focal point of the scene, is seated on a throne or chair, suggesting a position of authority or wisdom. The overall composition and the figures' attire and props suggest a theme of mythology, philosophy, or the arts. The inscription at the bottom indicates that this is a work from the 18th century, specifically from the Louvre Museum in Paris.