Machine Generated Data

Tags

Color Analysis

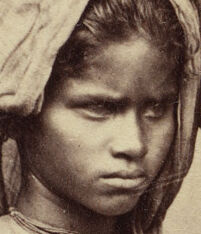

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Female, 57.7% |
| Sad | 23.2% |
| Disgusted | 4% |
| Angry | 8.5% |
| Happy | 0.8% |
| Confused | 7.8% |
| Surprised | 5.9% |
| Calm | 49.7% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 99.5% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 47.3% |
| events parties | 34.5% |
| interior objects | 16.2% |
| people portraits | 1% |
| streetview architecture | 0.4% |
| food drinks | 0.2% |
| text visuals | 0.1% |
| pets animals | 0.1% |
| sunrises sunsets | 0.1% |
| nature landscape | 0.1% |

Captions

Microsoft

Created on 2018-10-18

| Caption | Confidence |
| --- | --- |
| a couple of people that are standing in the rain holding an umbrella | 57.5% |
| a couple of people that are standing in the rain | 57.4% |
| a person standing posing for the camera | 57.3% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-31

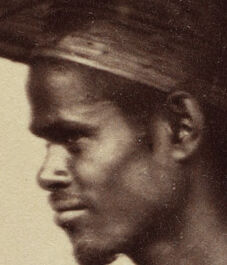

The image depicts two individuals standing in traditional attire. The person on the left is dressed in a light long-sleeved shirt and a draped lower garment, with headwear wrapped around their head. The individual on the right is bare-chested, wearing a wrapped lower garment, and a distinctive large, flat, circular hat. Both are barefoot and standing on a woven mat, with a plain background behind them.

Created by gpt-4 on 2024-11-19

The image displays two individuals standing side by side against a neutral background. The person on the right is wearing a traditional wide-brimmed hat and minimal clothing that wraps around the waist, suggesting a cultural garment possibly for a warm climate. The individual on the left is dressed in a long-sleeved blouse and a draped lower garment. Both are barefoot, standing on what appears to be a woven mat, and the photo has a sepia tone indicative of historical photography techniques. The context suggests it could be an anthropological or ethnographic photograph from the 19th or early 20th century.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-27

This appears to be a historical photograph, likely from the 19th century, showing two individuals in traditional Filipino or Southeast Asian dress. The image is in sepia tones, characteristic of early photography. On the right stands a person wearing a large circular hat (likely a traditional salakot) and simple white wrapped garments around the lower body, with a bare torso. On the left is a shorter individual wearing loose-fitting light-colored clothing including a head covering and draped garments. Both are barefoot and standing on what appears to be a woven mat or floor covering. The photograph has an ethnographic quality to it, typical of colonial-era documentation of indigenous peoples and their traditional dress.

Created by claude-3-haiku-20240307 on 2024-11-19

The image appears to be a historical photograph depicting two people from what appears to be an indigenous or non-Western cultural background. The person on the left is a young person wearing a long cloth garment and head covering, while the person on the right is a bare-chested adult wearing a traditional hat or headpiece. Both individuals have serious, contemplative expressions and are standing in front of what looks like a patterned textile or mat. The overall impression is one of documenting traditional attire and customs from a different cultural context.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a black and white photograph of a man and a young girl standing side by side. The man, positioned on the right, is shirtless and wears a long, white cloth wrapped around his waist. He has a large, round hat on his head, which is made of a woven material, possibly bamboo or straw. The hat is quite large and has a wide brim that extends outward from the crown. The girl, standing on the left, is dressed in a long, white shirt with rolled-up sleeves and a long, white skirt. She also wears a headscarf that covers her hair and neck. Both individuals are barefoot and stand on a woven mat or rug that covers the floor. The background of the image is a plain wall, which appears to be made of concrete or plaster. The overall atmosphere of the image suggests that it was taken in a studio or indoor setting, possibly in the late 19th or early 20th century.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-01

The image is a vintage photograph of two individuals, likely from the early 20th century, based on the clothing and style of the photograph.

* The person on the left is a young girl or woman, standing barefoot on a woven mat. She wears a long, loose-fitting dress that reaches her ankles and a headscarf tied around her head, leaving her hair visible underneath. Her attire appears to be made of a lightweight fabric, possibly cotton or linen.
  + The dress is a light color, possibly white or off-white, and has a simple design with no visible patterns or embellishments.
  + The headscarf is also light-colored and has a few loose threads hanging from it.
* The person on the right is a man, also standing barefoot on the same woven mat. He wears a traditional Asian conical hat, known as a "nón lá" in Vietnamese or "salakot" in Filipino, which is made of woven bamboo or palm leaves.
  + The hat is large and flat, with a wide brim that provides protection from the sun.
  + The man's torso is bare, revealing his muscular physique.
  + He wears a sarong-style garment wrapped around his waist, which is also made of a lightweight fabric.
* The background of the photograph is a plain wall, possibly made of mud or plaster, with a few scratches and marks visible.

In summary, the image depicts two individuals from a rural or agricultural community, likely from Southeast Asia, based on their clothing and the style of the photograph. The man's conical hat and the woman's headscarf suggest a traditional or cultural significance to their attire.