Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 11-18 |
| Gender | Male, 95.2% |
| Disgusted | 3.2% |
| Confused | 6.8% |
| Happy | 2.7% |
| Angry | 2.5% |
| Calm | 77.2% |
| Surprised | 3.2% |
| Sad | 4.4% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 99.9% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| people portraits | 99.5% |
| paintings art | 0.4% |
| streetview architecture | 0.1% |

Captions

Microsoft

Created by unknown on 2018-03-22

| Caption | Confidence |
| --- | --- |
| a group of people sitting on a bench posing for the camera | 96.2% |
| a group of people sitting on a bench posing for a photo | 95.4% |
| a group of people sitting on a bench in front of a building | 94.2% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-12

| Caption | Confidence |
| --- | --- |
| a photograph of a group of men and women sitting on steps | -100% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-29

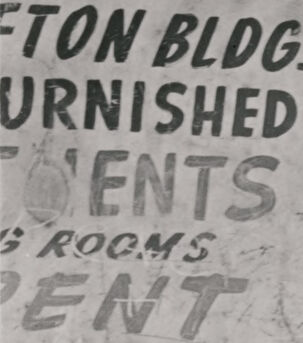

The image depicts a group of boys and a baby in a street setting. The boys are situated on the steps of a building entrance. Some of them are sitting on the steps while others are standing. One boy is holding a baby, and their clothing appears casual, typical of mid-20th century styles. There is a sign on the building which reads "Boston Bldg. Furnished Apartments" indicating that this might be a residential area. The overall scene gives a sense of urban life during that time period.

Created by gpt-4 on 2024-11-28

The image depicts a group of individuals on the steps of a building entryway. The style of clothing and the black-and-white format suggest the photograph may be from an earlier era. The photograph captures a candid moment among the individuals who appear to be casually posing or relaxing on the steps. There's a sign on the left side of the doorway that reads 'FURNISHED ROOMS FOR RENT', indicating the location might be a residential building offering accommodations. The building itself shows some wear and age, with visible textures on the wall and woodwork around the doorway.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-26

This is a black and white photograph that appears to be from the mid-20th century. It shows a group of young people gathered on what looks like the front steps of a building. There's a sign visible in the image that reads "TON BLDG FURNISHED...ROOMS FOR RENT." The subjects are wearing casual clothing typical of the era - rolled up jeans, short-sleeved shirts, and casual shoes. Some are sitting while others are standing in the doorway. The composition has an informal, candid quality that captures a moment of everyday life. The image has the documentary style characteristic of street photography from that period.

Created by claude-3-haiku-20240307 on 2024-11-27

The image appears to be a black and white photograph depicting a group of young boys standing and sitting in the doorway of what looks like a building or apartment. The boys have a range of expressions on their faces, from serious to playful. Some are sitting on the steps while others are standing. In the background, there is a sign that says "FTON BLDG FURNISHED RENT ROOMS". The overall scene conveys a sense of informality and youthful energy.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a black-and-white photograph of a group of young boys sitting on the steps of a building. The boys are dressed in casual clothing, with some wearing button-down shirts and others wearing t-shirts. They appear to be between the ages of 8 and 14.

In the background, there is a sign that reads "TON BLDG. URNISHED ENTS ROOMS ENT." The sign appears to be hand-painted and is attached to the wall of the building. The overall atmosphere of the image suggests a relaxed and informal setting, possibly a summer day or a weekend afternoon. The boys seem to be enjoying each other's company, and the image captures a moment of carefree youth.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-26

The image depicts a group of young boys sitting on the steps of a building, with one boy standing in the doorway behind them. The boys are all dressed in casual clothing, with some wearing shirts and pants, while others wear shorts and t-shirts. They appear to be in their early teens, with some looking more mature than others.

In the background, a sign on the wall reads "TON BLDG. FURNISHED APARTMENTS ROOMS RENT," suggesting that the building may be an apartment complex or boarding house. The overall atmosphere of the image is one of informality and camaraderie, as the boys seem to be enjoying each other's company.

Google Gemini

Created by gemini-2.0-flash on 2025-04-30

This black and white photo shows a group of boys sitting and standing on the steps in front of an open doorway. On the left, a sign for the "Eton Building, Furnished Rooms, Rents" is partially visible.

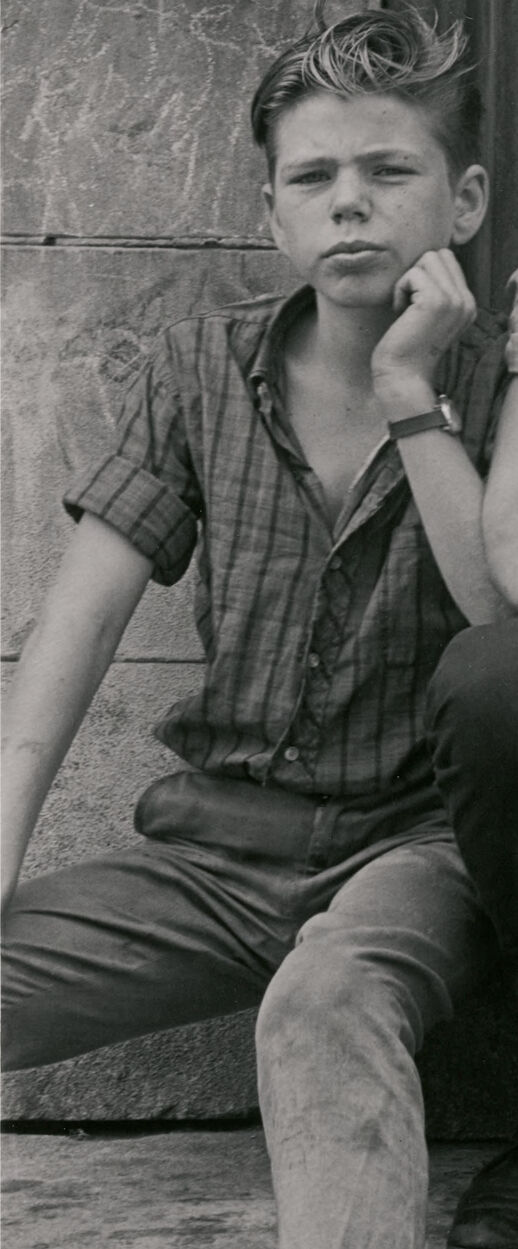

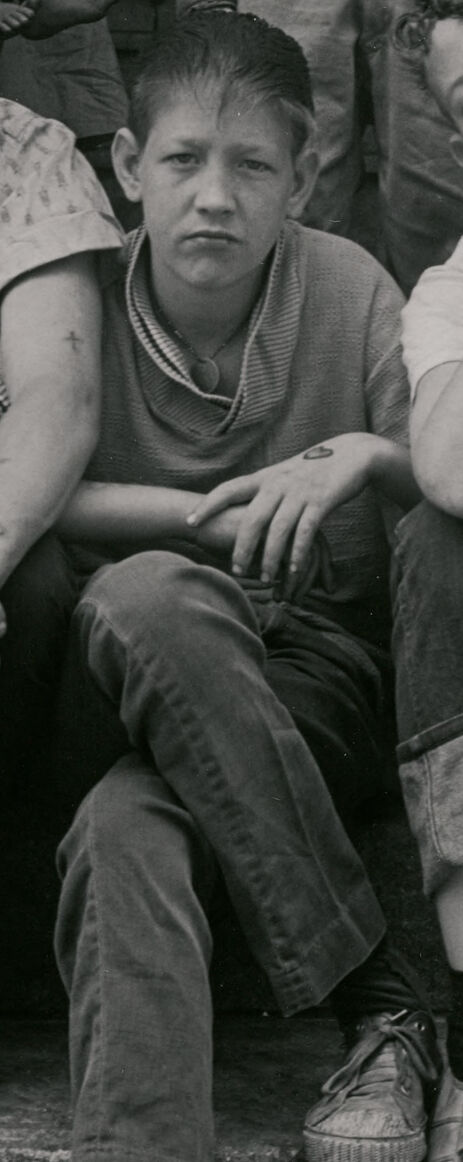

In the front row, four boys sit on the steps. The boy on the far left has his hair slicked back and wears a short-sleeved checkered shirt and pants. He rests his chin on his hand and has a watch on his wrist. Next to him, a boy with curly hair wears a patterned short-sleeved shirt and dark pants. He has his hands clasped in front of him. The boy in the center wears a striped shirt and dark pants, looking down with a somewhat sullen expression. On the right, the fourth boy wears a t-shirt and pants rolled up at the bottom. He rests his head on his hand and seems to be either deep in thought or tired. Tattoos are visible on his arm.

Behind them, three more boys are standing in the doorway. A very young child, possibly a toddler, is being held by someone behind them. The boy in the center has a wide smile and wears a collared shirt and pants. To the right, another boy in a striped shirt and shorts smiles towards the boy in the center.

The doorway behind them is dark, suggesting an interior space. The building appears old, with worn and weathered architectural details. The overall image captures a moment of everyday life, possibly in an urban neighborhood.

Created by gemini-2.0-flash-lite on 2025-04-30

Here's a description of the image:

The photograph is a black and white shot capturing a group of children posing on the steps of a building. The composition is a mix of posed and candid moments, reflecting the essence of childhood.

Here's a breakdown of the key elements:

- Subjects: The main subjects are a group of young boys of varying ages. They are the focal point, taking up most of the frame. Some are seated on the steps, while others are standing. They each have distinct expressions and postures, creating a sense of individual personality.

- Expressions and Poses: The boys' expressions range from serious to playful. Some are looking directly at the camera, while others are gazing off to the side. Their postures are relaxed, yet the overall mood suggests they are aware of being photographed. Some have arms crossed.

- Background: The background hints at an urban setting. The building facade, with its weathered look and a sign advertising "Furnished Apartments," gives context to their surroundings. A baby is held by an older person in the doorway.

- Lighting and Style: The black and white format gives the image a classic, timeless feel. The lighting appears natural, with shadows that add depth and contrast.

- Overall Impression: The photograph evokes a sense of nostalgia. It captures a moment in time and the everyday lives of children. It suggests a period, possibly the mid-20th century.