Machine Generated Data

Face analysis

Amazon

AWS Rekognition

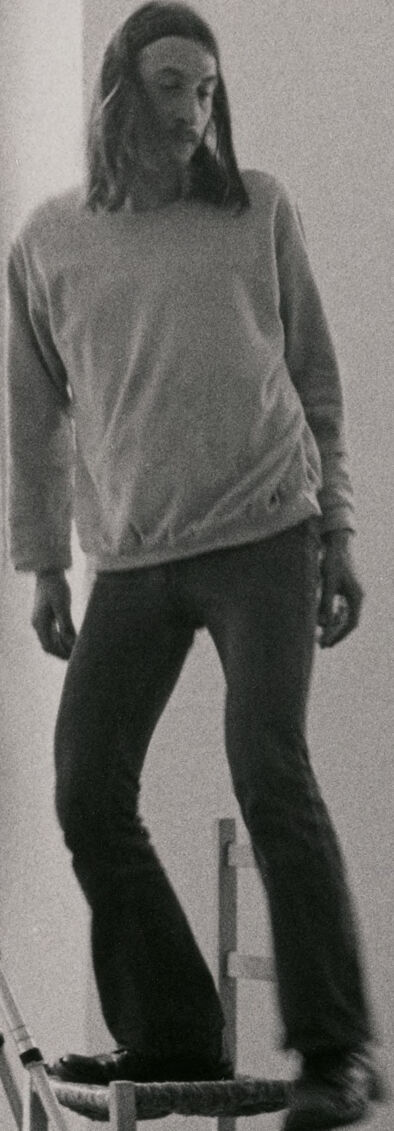

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Male, 87% |
| Calm | 63.9% |
| Angry | 4.4% |
| Disgusted | 6.2% |
| Sad | 9.2% |
| Surprised | 1.9% |
| Confused | 1.3% |
| Happy | 13% |

Feature analysis

Amazon

| Person | 99.6% |

Categories

Imagga

| interior objects | 100% |

Captions

Microsoft

Created on 2018-02-09

| Caption | Confidence |
| --- | --- |
| a person standing in a room | 88.6% |
| a black and white photo of a person | 68% |
| a person standing in a room | 67.9% |

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-26

This is a black and white photograph showing what appears to be a photography studio or artistic space. The image shows various elements of a photo shoot setup, including a sawhorse or work table in the middle, a camera on a tripod, and white fabric or backdrop material. On the far right, someone is standing on a chair, wearing casual clothing including a sweater and pants. To the left side of the image, there appears to be a bathroom or changing area. The floor is wooden and the space has a minimal, industrial feel to it. The lighting in the photograph is soft and diffused, creating subtle shadows and a contemplative atmosphere.

Created by claude-3-haiku-20240307 on 2024-11-27

The image shows a black and white photograph of a studio setting. In the foreground, there is a wooden easel with a camera mounted on a tripod. In the background, there is a partially visible human figure standing near a curtain or backdrop. The lighting suggests this is an artist's studio or photography workspace. The scene has an artistic, contemplative atmosphere.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a black and white photograph of a room with two people. The room is well-lit, with natural light coming from a window on the right side of the image.

In the foreground, there is a woman standing on a chair to the right of the image. She is wearing a long-sleeved shirt and pants, and has her hair pulled back. To the left of the image, there is another woman standing in a doorway, partially obscured by a curtain. She is naked and appears to be holding a towel or cloth in front of her body.

In the center of the image, there is a large piece of paper or cardboard propped up on an easel. There is also a camera on a tripod positioned in front of the paper. The floor is made of wood, and there are several objects scattered around the room, including a chair, a table, and a sink.

Overall, the image appears to be a photograph of a studio or workspace, possibly used for artistic or creative purposes. The presence of the camera and tripod suggests that the image may have been taken for a specific project or assignment.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-25

The image is a black-and-white photograph of a woman standing on a chair, with a camera on a tripod in front of her. The woman is wearing a long-sleeved shirt and pants, and has long hair. She is standing on a wooden chair, with her feet shoulder-width apart.

In the background, there is a large white sheet draped over a wooden frame, which appears to be a makeshift photo studio. There is also a mirror on the wall, reflecting the woman's image.

The overall atmosphere of the image suggests that the woman is posing for a photo shoot, possibly as part of an artistic or creative project. The use of a tripod and a camera suggests that the photo is being taken with care and attention to detail.

The image may also suggest that the woman is comfortable with her body and is not afraid to be vulnerable in front of the camera. The fact that she is standing on a chair, which is not a typical pose, may indicate that she is feeling confident and expressive.

Overall, the image conveys a sense of creativity, self-expression, and confidence. It suggests that the woman is embracing her individuality and is not afraid to take risks and try new things.