Machine Generated Data

Color Analysis

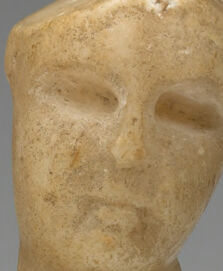

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 29-45 |
| Gender | Female, 89.1% |
| Angry | 5.1% |
| Disgusted | 52.5% |
| Sad | 11.8% |
| Calm | 16.9% |
| Happy | 7.5% |
| Confused | 4% |
| Surprised | 2.2% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 76 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| food drinks | 37% |
| pets animals | 24.9% |
| nature landscape | 19.5% |
| paintings art | 15.4% |
| interior objects | 2.1% |
| beaches seaside | 0.3% |
| macro flowers | 0.3% |
| people portraits | 0.2% |
| text visuals | 0.2% |
| streetview architecture | 0.1% |

Captions

Microsoft

Created on 2018-02-09

| Caption | Confidence |
| --- | --- |
| a person sitting on a table | 17% |
| a person holding a banana | 5.1% |
| a person sitting on the ground | 5% |

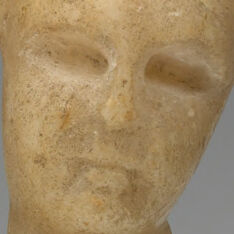

Azure OpenAI

Created on 2024-02-07

This is a photograph of a marble sculpture capturing the torso and draped lower body of a reclining figure. The sculpture's detailed carving technique emphasizes the fabric folds and the anatomical contours of the body. It is set against a neutral grey background that contrasts with its creamy stone texture, highlighting the sculpture's form and composition. The artwork, poised in a relaxed and contemplative pose, exudes a sense of classical beauty and serene grace.

Anthropic Claude

Created on 2024-03-29

The image depicts a sculptural work featuring a stylized figure lying in a reclining pose. The figure appears to be made of a light-colored, textured material, possibly stone or plaster. The figure has a simple, minimalist design with a smooth, elongated torso and limbs. The face is somewhat abstract, with simple features. The overall impression is a serene, almost meditative quality to the sculpture.

Meta Llama

Created on 2024-11-26

The image depicts a small, antique marble sculpture of a reclining woman. The sculpture is crafted from light-colored marble and features a woman reclining on her side, with her head turned towards the right side of the image. Her left arm is extended above her, while her right arm is positioned below her. The woman's body is covered by a long, flowing garment that drapes over her legs and hips. The sculpture is set against a gradient background that transitions from dark gray at the top to light gray at the bottom. The overall atmosphere of the image suggests a museum or gallery setting, where the sculpture is displayed for public viewing.