Machine Generated Data

Tags

Amazon

created on 2019-05-28

| Tag | Confidence |
| --- | --- |
| Clothing | 99.4 |
| Apparel | 99.4 |
| Hat | 99 |
| Human | 98.8 |
| Person | 98.8 |
| Person | 98.5 |
| Person | 97.8 |
| Animal | 86.2 |
| Mammal | 86 |
| Hat | 85.9 |
| Horse | 84.7 |
| Face | 84.2 |
| Bull | 77.7 |
| Sun Hat | 70.4 |
| Shorts | 69.4 |
| Coat | 66.6 |
| Cattle | 66.1 |
| Photography | 61.8 |
| Photo | 61.8 |
| Portrait | 61.8 |
| Outdoors | 60.1 |
| Pants | 58 |
| Female | 57.3 |
| Spoke | 56.8 |
| Machine | 56.8 |
| Girl | 56.4 |
| Cowboy Hat | 55.5 |

Clarifai

created on 2019-05-28

Imagga

created on 2019-05-28

| Tag | Confidence |
| --- | --- |
| cowboy hat | 100 |
| hat | 100 |
| headdress | 86.4 |
| clothing | 58.5 |
| cowboy | 39.5 |
| covering | 30.5 |
| consumer goods | 30.2 |
| male | 24.1 |
| man | 22.2 |
| horse | 22 |
| animal | 19.5 |
| outdoors | 18.7 |
| people | 17.9 |
| cattle | 17.1 |
| bull | 16.5 |
| western | 15.5 |
| portrait | 14.9 |
| farm | 14.3 |
| person | 12.9 |
| two | 12.7 |
| cow | 12.7 |
| mammal | 12.3 |
| outdoor | 12.2 |
| farmer | 11.9 |
| buffalo | 11.8 |
| guy | 11 |
| field | 10.9 |
| sport | 10.7 |
| wild | 10.5 |
| old | 10.5 |
| danger | 10 |
| face | 9.9 |
| travel | 9.9 |
| horn | 9.8 |
| riding | 9.8 |
| ride | 9.7 |
| together | 9.6 |
| happy | 9.4 |
| smile | 9.3 |
| uniform | 9.2 |
| bovine | 8.9 |
| horns | 8.8 |
| brown | 8.8 |
| rural | 8.8 |
| boy | 8.7 |
| military | 8.7 |
| safari | 8.7 |
| senior | 8.4 |
| saddle | 8.4 |
| adult | 8.4 |
| animals | 8.3 |
| child | 8.3 |
| leisure | 8.3 |
| tourism | 8.3 |
| vacation | 8.2 |
| style | 8.2 |
| recreation | 8.1 |
| activity | 8.1 |
| wildlife | 8 |
| cowgirl | 7.9 |
| country | 7.9 |
| agriculture | 7.9 |
| love | 7.9 |
| cape | 7.9 |
| gun | 7.8 |
| five | 7.8 |
| men | 7.7 |
| outside | 7.7 |
| ox | 7.7 |
| walk | 7.6 |
| dangerous | 7.6 |
| laborer | 7.6 |
| hand | 7.6 |
| fun | 7.5 |
| shirt | 7.5 |
| close | 7.4 |
| smiling | 7.2 |
| looking | 7.2 |
| cute | 7.2 |
| game | 7.1 |
| grass | 7.1 |
| happiness | 7.1 |

Google

created on 2019-05-28

| Tag | Confidence |
| --- | --- |
| Photograph | 96.6 |
| Snapshot | 84.9 |
| Stock photography | 79.4 |
| Working animal | 79.2 |
| Photography | 78.8 |
| Pack animal | 75.7 |
| Black-and-white | 68.3 |
| Bovine | 66.3 |
| Cowboy | 65.9 |
| Farmer | 65.3 |
| Horse trainer | 62.9 |
| Horse | 60 |
| Monochrome photography | 57.8 |
| Bridle | 50.1 |

Color Analysis

Face analysis

AWS Rekognition

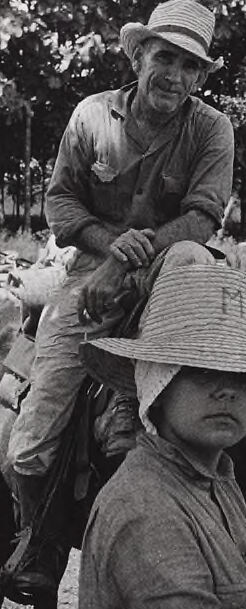

| Attribute | Value |
| --- | --- |
| Age | 48-68 |
| Gender | Male, 91.9% |
| Happy | 0.6% |
| Confused | 0.1% |
| Calm | 98.6% |
| Angry | 0.2% |
| Sad | 0.2% |
| Disgusted | 0.1% |
| Surprised | 0.2% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Female, 68% |
| Calm | 81% |
| Confused | 3.9% |
| Happy | 0.5% |
| Disgusted | 2.5% |
| Surprised | 3.1% |
| Angry | 4.2% |
| Sad | 4.9% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 45-65 |
| Gender | Male, 50.4% |
| Angry | 49.6% |
| Disgusted | 49.6% |
| Surprised | 49.5% |
| Happy | 49.5% |
| Calm | 50.2% |
| Confused | 49.5% |
| Sad | 49.6% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 70 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Unlikely |
| Headwear | Very likely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| people portraits | 92.5% |
| paintings art | 3.4% |
| streetview architecture | 3.4% |

Captions

Microsoft

created on 2019-05-28

| Caption | Confidence |
| --- | --- |
| a black and white photo of a person riding a horse | 94.2% |
| a person holding a white horse | 94.1% |
| a person standing next to a horse | 94% |

Text analysis

Amazon

AHA

CAHAG'eY/9g AHA aiey

aiey

CAHAG'eY/9g