Machine Generated Data

Face analysis

Amazon

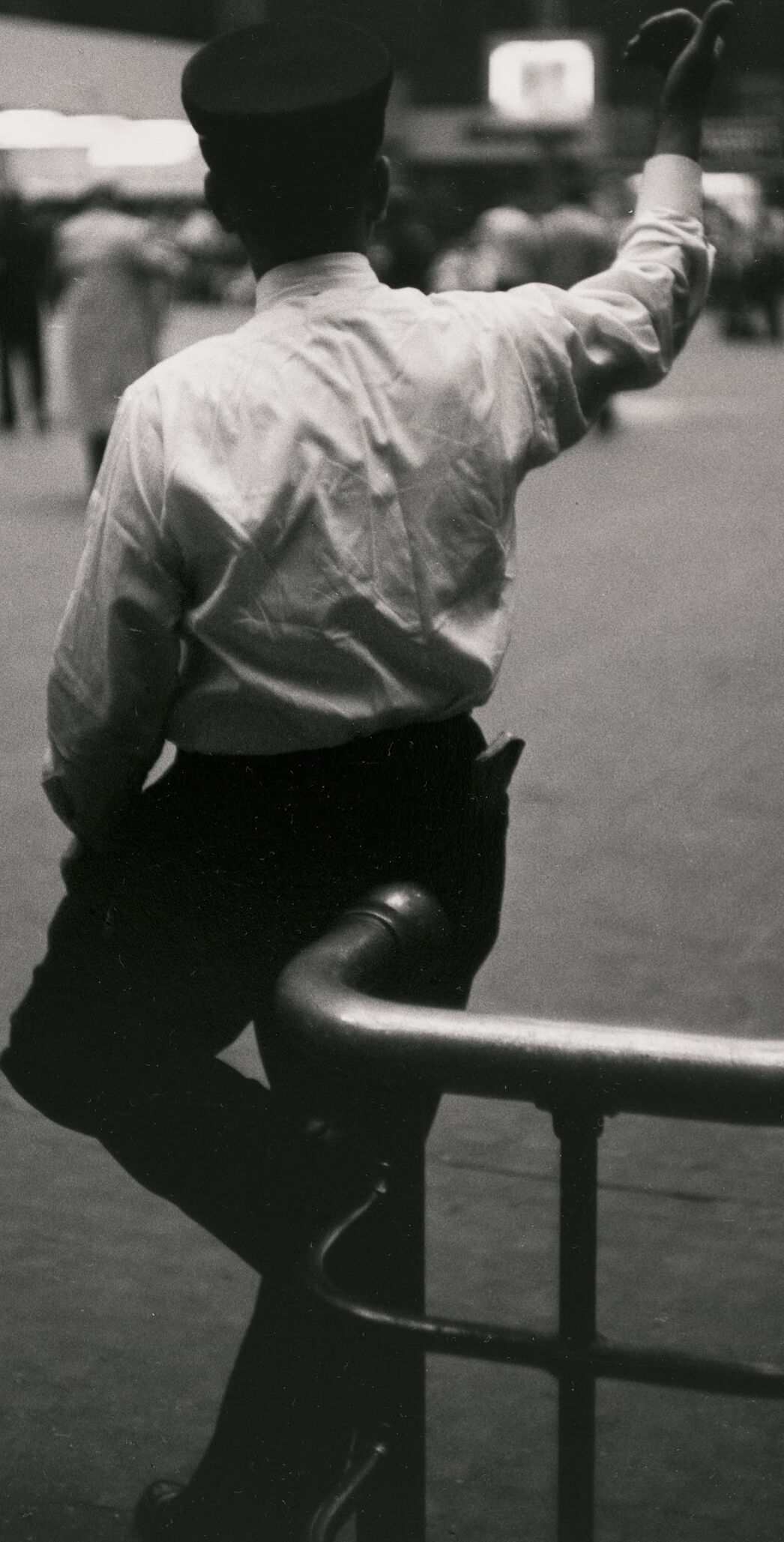

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Female, 70.9% |
| Happy | 0.2% |
| Disgusted | 0.2% |
| Surprised | 0.3% |
| Confused | 0.1% |
| Calm | 98.4% |
| Sad | 0.4% |
| Angry | 0.3% |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 43.6% |
| people portraits | 31.8% |
| pets animals | 7.1% |
| events parties | 4.8% |
| food drinks | 4.5% |
| interior objects | 3.6% |
| streetview architecture | 2.3% |
| nature landscape | 2.2% |
| text visuals | 0.1% |
| cars vehicles | 0.1% |
| macro flowers | 0.1% |

Captions

Microsoft

Created on 2018-02-10

| Caption | Confidence |
| --- | --- |
| a person sitting on a bench | 62.7% |
| a person standing on top of a bench | 62.1% |
| a person that is sitting on a bench | 56.4% |

Azure OpenAI

Created on 2024-11-28

The black and white image depicts an indoor scene, possibly a station or a public hall, characterized by a high ceiling and a large clock visible in the background. In the foreground, a person wearing a white shirt, dark pants, and a dark cap is standing with one arm resting on a metal railing and the other arm raised in a gesture that may suggest hailing a taxi or signaling to someone. The individual appears to be in a semi-profile stance. There's soft overhead lighting and a sense of motion blur in the background, suggesting the presence of people moving about, contributing to the sense of a busy environment.

Anthropic Claude

Created on 2024-11-27

The image shows a black and white photograph of a person sitting on a chair in a dimly lit urban setting. The person is wearing a white shirt and has their back turned to the camera, their face not visible. The scene appears to be taking place on a city street, with some blurry figures visible in the background and street signs or advertisements faintly visible in the background. The overall mood of the image is one of isolation and contemplation, with the lone figure seemingly lost in thought amidst the bustle of the city.

Meta Llama

Created on 2024-11-26

The image depicts a black-and-white photograph of a man standing in a train station, waving at someone or something. He is dressed in a white shirt, black pants, and a black hat, and is leaning against a railing with his left leg bent at the knee. His right arm is raised in a wave, and he appears to be looking out of the frame. In the foreground, there is a person wearing a dark coat and cap standing next to the man, although they are mostly out of frame. The background of the image shows a bustling train station, with people milling about and a clock visible on the wall. The overall atmosphere of the image suggests that the man is saying goodbye to someone or something, possibly a loved one or a fellow traveler.