Machine Generated Data

Face analysis

Amazon

Imagga

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Female, 57.7% |
| Happy | 1.2% |
| Disgusted | 1.4% |
| Surprised | 0.8% |
| Confused | 1.7% |
| Calm | 80.3% |
| Sad | 11.6% |
| Angry | 2.9% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 94.2% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| food drinks | 49.5% |
| people portraits | 30.8% |
| paintings art | 6.2% |
| events parties | 5.6% |
| cars vehicles | 5% |
| interior objects | 0.8% |
| pets animals | 0.6% |
| streetview architecture | 0.5% |
| nature landscape | 0.5% |
| text visuals | 0.2% |
| macro flowers | 0.2% |

Captions

Microsoft

Created by unknown on 2018-02-10

| Caption | Confidence |
| --- | --- |
| a person wearing a costume | 69.2% |
| a close up of a person wearing a costume | 62.2% |
| a person standing in front of a building | 44.4% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-12

| Caption | Confidence |
| --- | --- |
| a photograph of a man in a black and white photo | -100% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-30

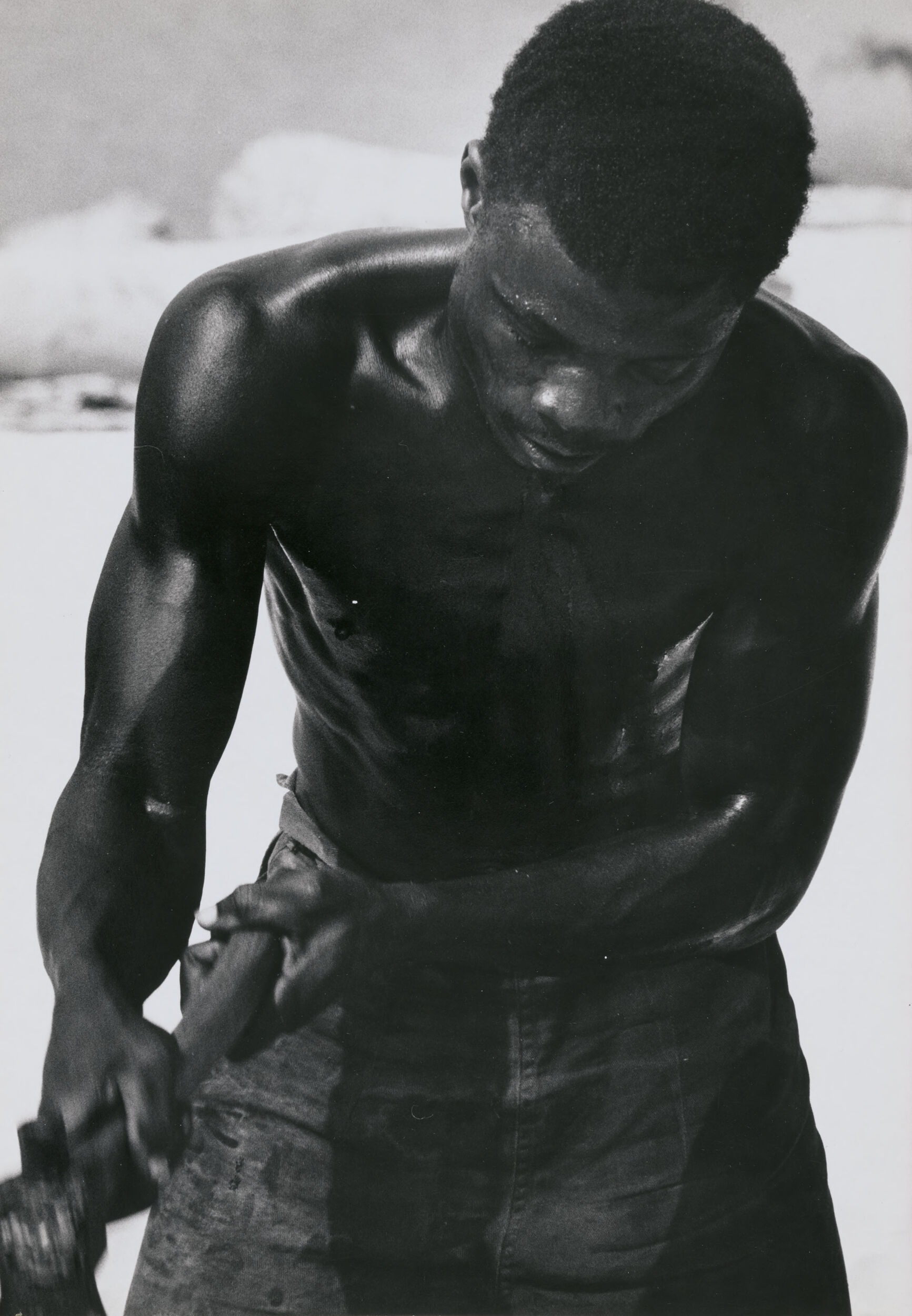

The image features a strong, muscular male figure engaged in physical labor. He is shirtless, highlighting the contours and definition of his muscles. He appears to be using a tool or object with both hands, possibly indicating involvement in a manual task or work. The background is slightly out of focus but shows a light, neutral setting. The photograph is in black and white, which emphasizes the contrast and shadows on the man's body, adding to the dramatic and intense atmosphere of the scene.

Created by gpt-4 on 2024-11-28

This is a black-and-white photograph showing an individual from a rear perspective, providing a view of their back and shoulders. The person is shirtless and appears to have a muscular build. They are wearing what seems to be a pair of dark pants or jeans, with the material looking rugged or possibly wet. The lighting and shadows highlight the contours of the person's back muscles and glistening skin, possibly from sweat or water. It looks like they might be holding something in their right hand, but the object is not clearly visible. Also worth noting is the contrast between the person's skin tone and the lighter background, which could be a natural or a textured surface behind them.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-26

This is a powerful black and white photograph showing the muscular upper body of a shirtless worker. The image captures the physical intensity of manual labor, with sweat glistening on the person's skin and their strong arms and torso prominently displayed. They appear to be looking down, focused on some task, wearing what looks like work pants. The composition emphasizes the sculptural quality of the human form and the dignity of physical labor. The lighting and contrast in the black and white photo creates dramatic shadows that highlight the musculature and physical strength of the subject.

Created by claude-3-haiku-20240307 on 2024-11-27

The image depicts a shirtless man with a muscular, athletic build. He has a dark complexion and short, curly dark hair. The man is facing away from the camera, with his head turned slightly to the side. The background appears to be a natural setting, possibly a beach or other outdoor location. The image has a stark, black-and-white tone, highlighting the contrast of the man's dark skin against the lighter background. The overall impression is of a powerful, physical presence.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a black-and-white photograph of a shirtless man, likely a laborer, engaged in manual work. The man has dark skin and short hair, and is wearing dark pants. He is bent over, with his head down and his hands on his thighs. His body appears to be glistening with sweat, suggesting that he has been working hard.

In the background, there are some indistinct objects that appear to be tools or equipment, but they are not clearly visible. The overall atmosphere of the image suggests that the man is working in a hot and physically demanding environment, possibly outdoors or in a factory setting. The image conveys a sense of hard work and dedication, as well as a sense of exhaustion and fatigue.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-25

The image depicts a shirtless black man with short hair, wearing jeans, engaged in physical labor. He is bent over, with his hands on his knees, and appears to be sweating. The background is out of focus, but it seems to be an outdoor setting, possibly a construction site or a field. The overall atmosphere of the image suggests that the man is working hard and is likely a laborer or manual worker.

Google Gemini

Created by gemini-2.0-flash on 2025-04-30

Here's a description of the image:

The image is a black and white photograph featuring a muscular, shirtless Black man. He is cropped from the torso up, looking down towards his hands, which are holding an object, perhaps a tool, that is partially out of focus. His skin appears to glisten, potentially with sweat. He is wearing what appears to be work pants. The background is a light, somewhat blurred expanse, possibly an outdoor setting. The photograph emphasizes the physical strength and labor aspect of the man.

Created by gemini-2.0-flash-lite on 2025-04-30

Here is a description of the image:

The image is a black and white photograph of a man with dark skin. He appears to be shirtless, with his upper body prominently displayed. His skin has a visible sheen, suggesting he might be sweating or wet. His face is partly obscured, but he seems to be looking down with a focused expression. The man's arms are muscular and he is holding something in his hands. He is wearing pants that are dark and possibly wet or damp. The background is mostly out of focus and light-toned, giving the impression of a wide open space like a beach or a field. The focus is definitely on the man and the play of light on his body. The overall aesthetic is that of a strong, working individual.