Machine Generated Data

Face analysis

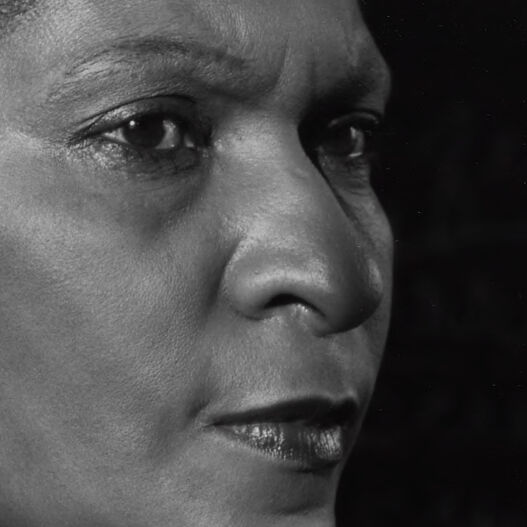

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 35-52 |
| Gender | Female, 92.1% |
| Happy | 0.5% |
| Angry | 5.5% |
| Surprised | 2% |
| Sad | 3% |
| Disgusted | 0.7% |
| Calm | 85.6% |
| Confused | 2.6% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 99.1% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| people portraits | 76.6% |
| paintings art | 17.8% |
| food drinks | 5% |
| events parties | 0.3% |
| text visuals | 0.1% |
| pets animals | 0.1% |

Captions

Microsoft

Created on 2018-03-20

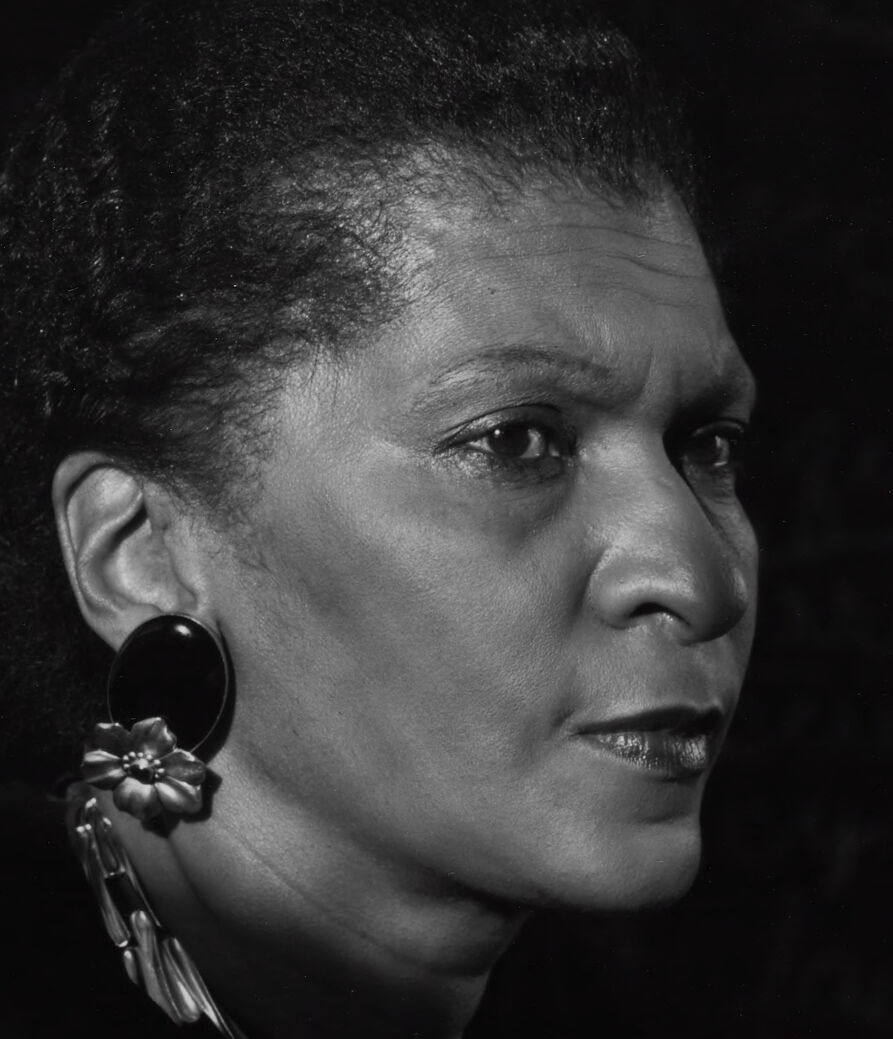

| Caption | Confidence |
| --- | --- |
| a man that is standing in the dark | 76% |
| a man standing in a dark room | 72.4% |
| a man in a dark room | 72.3% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-30

The image is a black and white photograph featuring a person wearing a dark garment with a high neck. The person is adorned with large, elegant earrings and a chunky, twisted necklace. The background appears to contain some handwritten text or chalk markings, suggesting a scholastic or thoughtful setting. Notably, there is a distinct shadow cast on the wall behind the person. The photograph is signed by the artist in the bottom right corner.

Created by gpt-4 on 2024-11-28

The image features a person in profile view wearing a black top and adorned with prominent earrings and a neckpiece. The earrings have a circular design, and the neckpiece is a chunky chain style. The individual is set against a background that has what appears to be cursive writing in a chalk-like texture, suggesting a chalkboard or a wall with writing on it. The photograph is monochromatic, and there is a visible signature in the bottom right corner.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-26

This is a striking black and white portrait photograph. The subject is shown in profile against a dark background, wearing a black garment and elegant jewelry including a statement necklace and dramatic dangling earrings. The lighting creates beautiful contrast and highlights the strong facial features and thoughtful expression. The composition is artistic and intimate, with professional studio-quality lighting that emphasizes the subject's dignified presence. There appears to be some text or signage blurred in the background. The photograph has a signature or marking in the bottom right corner, suggesting this is a professional portrait, likely from the late 20th century based on the styling and photographic technique.

Created by claude-3-haiku-20240307 on 2024-11-27

The image depicts a close-up portrait of a woman with a serious expression on her face. She appears to be an elderly African American woman with short, dark hair and large, dangling earrings. The background is dark, drawing the viewer's attention to the subject's pensive gaze and contemplative expression. The image has a high-contrast, black-and-white aesthetic, which adds to the somber and introspective mood of the photograph.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a black-and-white photograph of a woman, possibly a teacher or professor, in front of a chalkboard. The woman is positioned on the left side of the image, facing to the right. She has dark hair pulled back and is wearing a dark top with a large, round earring on her left ear and a chunky necklace. The background is a dark room with a chalkboard behind the woman. There are some illegible words written on the chalkboard in white chalk. The overall atmosphere of the image suggests that it was taken in an educational setting, possibly a classroom or office.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-26

The image is a black-and-white photograph of Maya Angelou, an American poet, author, and civil rights activist. The image is a portrait of Maya Angelou, with her head turned to the right. She has short, curly hair and is wearing a dark-colored top, a chunky necklace, and large, round earrings. The background of the image is dark, but there appears to be a chalkboard or blackboard behind her, with some writing on it that is not legible. The overall atmosphere of the image suggests that it was taken in a classroom or educational setting, possibly during one of Maya Angelou's lectures or workshops.