Machine Generated Data

Tags

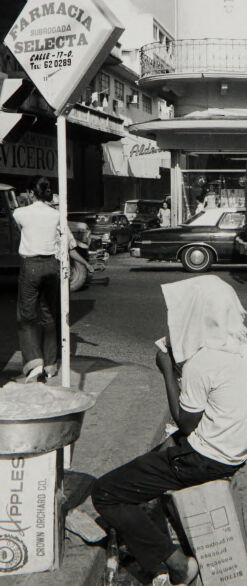

Amazon

created on 2019-11-15

Clarifai

created on 2019-11-15

| Tag | Confidence |
| --- | --- |
| people | 99.8 |
| monochrome | 99.1 |
| street | 99 |
| man | 96 |
| adult | 95.9 |
| group together | 95.6 |
| two | 95.5 |
| group | 95.4 |
| woman | 92.1 |
| one | 91.9 |
| three | 89.3 |
| black and white | 86.8 |
| vehicle | 85.6 |
| several | 80.4 |
| transportation system | 80.3 |
| portrait | 80.2 |
| child | 77.7 |
| four | 77.1 |
| boy | 75.3 |
| city | 74.5 |

Imagga

created on 2019-11-15

| Tag | Confidence |
| --- | --- |
| barbershop | 39.2 |
| shop | 39.1 |
| mercantile establishment | 26.9 |
| interior | 24.8 |
| office | 23.4 |
| indoors | 21.9 |
| people | 21.2 |
| man | 20.8 |
| kitchen | 20.8 |
| home | 19.9 |
| chair | 19.4 |
| person | 18.5 |
| place of business | 17.9 |
| business | 17 |
| work | 16.5 |
| furniture | 15.7 |
| salon | 15.7 |
| modern | 15.4 |
| equipment | 15.3 |
| house | 15 |
| working | 15 |
| male | 14.9 |
| adult | 14.2 |
| room | 14.2 |
| job | 14.1 |
| men | 13.7 |
| architecture | 13.3 |
| lifestyle | 13 |
| barber chair | 12.7 |
| building | 12.5 |
| city | 12.5 |
| table | 11.4 |
| urban | 11.4 |
| transportation | 10.8 |
| seat | 10.6 |
| decor | 10.6 |
| restaurant | 10.3 |
| floor | 10.2 |
| inside | 10.1 |
| professional | 10.1 |
| occupation | 10.1 |
| station | 9.7 |
| hospital | 9.6 |
| apartment | 9.6 |
| counter | 9.6 |
| women | 9.5 |
| industry | 9.4 |
| window | 9.3 |
| machine | 9.2 |
| indoor | 9.1 |
| center | 9.1 |
| industrial | 9.1 |
| design | 9 |
| computer | 8.8 |
| establishment | 8.8 |
| travel | 8.4 |
| black | 8.4 |
| old | 8.4 |
| worker | 8.3 |
| appliance | 8.3 |
| street | 8.3 |
| technology | 8.2 |
| light | 8 |
| lamp | 7.6 |
| happy | 7.5 |
| commercial | 7.5 |
| style | 7.4 |
| safety | 7.4 |
| smiling | 7.2 |
| helmet | 7.2 |

Google

created on 2019-11-15

| Tag | Confidence |
| --- | --- |
| Photograph | 97.2 |
| White | 95.5 |
| Black-and-white | 92.4 |
| Snapshot | 90.6 |
| Standing | 88.8 |
| Monochrome | 83.8 |
| Photography | 79.4 |
| Monochrome photography | 69.6 |
| Room | 65.7 |
| Stock photography | 64 |
| Photographic paper | 62.8 |
| Window | 61 |
| Street | 57.4 |
| Style | 53.5 |

Microsoft

created on 2019-11-15

| Tag | Confidence |
| --- | --- |
| text | 98.7 |
| street | 98.2 |
| outdoor | 96.6 |
| clothing | 95.8 |
| black and white | 90.9 |
| person | 86.2 |
| people | 85.2 |
| footwear | 83 |
| man | 70.6 |
| vehicle | 67.5 |
| waste container | 66.7 |
| monochrome | 65.6 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 37-55 |
| Gender | Male, 54.9% |
| Happy | 45% |
| Calm | 55% |
| Disgusted | 45% |
| Surprised | 45% |
| Fear | 45% |
| Sad | 45% |
| Confused | 45% |
| Angry | 45% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 28-44 |
| Gender | Female, 50.2% |
| Angry | 49.5% |
| Sad | 49.8% |
| Happy | 49.5% |
| Disgusted | 49.5% |
| Calm | 50.1% |
| Confused | 49.5% |
| Surprised | 49.5% |
| Fear | 49.5% |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 38.9% |
| interior objects | 34.1% |
| streetview architecture | 19.5% |
| people portraits | 5% |
| pets animals | 1.1% |

Captions

Microsoft

created on 2019-11-15

| Caption | Confidence |
| --- | --- |
| a person standing in front of a building | 73.5% |
| a man and a woman standing in front of a building | 53.5% |
| a person standing next to a building | 53.4% |

Text analysis

Amazon

SELECTA

CROWN

ORCHARD

Mountain

620289

PMACIA

PPLES

PMACIA BUEADGADA

SELECTA Tu CA-17-0 620289

Growe

CO.

BUEADGADA

CA-17-0

Tu

Co

FA

SELECTA

CALLE-

Alda

ea

nvtad

esea

PPLES

CO.

ARMACIA

FA

SELECTA

CALLE-

Alda

ea nvtad

esea

NOLUS

PPLES

CROWN ORCHARD CO.

ARMACIA

NOLUS

CROWN

ORCHARD