Machine Generated Data

Tags

Amazon

created on 2019-06-05

Clarifai

created on 2019-06-05

Imagga

created on 2019-06-05

| Tag | Confidence |
|---|---|
| classroom | 71.9 |
| room | 56.9 |
| man | 32.4 |
| newspaper | 26.9 |
| person | 26.2 |
| people | 25.7 |
| male | 24.1 |
| product | 22 |
| education | 19.9 |
| adult | 19.5 |
| book jacket | 17.9 |
| blackboard | 17.9 |
| creation | 17.2 |
| old | 16.7 |
| portrait | 16.2 |
| school | 15.9 |
| jacket | 15.9 |
| teacher | 15.3 |
| student | 13.4 |
| black | 13.2 |
| business | 12.8 |
| vintage | 12.4 |
| group | 12.1 |
| computer | 12 |
| men | 12 |
| world | 12 |
| indoor | 11.9 |
| office | 11.7 |
| class | 11.6 |
| couple | 11.3 |
| happy | 11.3 |
| aged | 10.9 |
| professional | 10.7 |
| book | 10.6 |
| wrapping | 10.6 |
| businessman | 10.6 |
| college | 10.4 |
| senior | 10.3 |
| handsome | 9.8 |
| home | 9.6 |
| daily | 9.5 |
| sitting | 9.4 |
| face | 9.2 |
| human | 9 |
| looking | 8.8 |
| hair | 8.7 |
| smiling | 8.7 |
| love | 8.7 |
| studying | 8.6 |
| youth | 8.5 |
| casual | 8.5 |
| one | 8.2 |
| retro | 8.2 |
| team | 8.1 |
| interior | 8 |
| boy | 7.8 |
| students | 7.8 |
| lesson | 7.8 |
| teaching | 7.8 |
| antique | 7.8 |
| retired | 7.8 |
| books | 7.7 |
| desk | 7.7 |
| adults | 7.6 |
| learn | 7.6 |
| dark | 7.5 |
| study | 7.5 |
| covering | 7.3 |
| letter | 7.3 |
| art | 7.2 |
| smile | 7.1 |
| working | 7.1 |
| work | 7.1 |
| child | 7 |
| indoors | 7 |
| modern | 7 |
| together | 7 |

Face analysis

AWS Rekognition

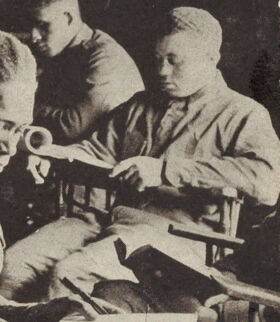

| Attribute | Value |
|---|---|
| Age | 35-52 |
| Gender | Male, 54.9% |
| Calm | 52.9% |
| Angry | 45.2% |
| Surprised | 45.1% |
| Confused | 45.2% |
| Disgusted | 45% |
| Happy | 45.1% |
| Sad | 46.5% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 35-52 |
| Gender | Male, 54.8% |
| Angry | 45.1% |
| Surprised | 45.1% |
| Sad | 45.4% |
| Disgusted | 45% |
| Confused | 45.1% |
| Happy | 45.1% |
| Calm | 54.2% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 57% |
| Happy | 0% |
| Confused | 0% |
| Calm | 99.1% |
| Surprised | 0% |
| Sad | 0.8% |
| Disgusted | 0% |
| Angry | 0.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 90.4% |
| Calm | 8.8% |
| Angry | 2% |
| Surprised | 0.9% |
| Confused | 2.5% |
| Sad | 84.3% |
| Happy | 1% |
| Disgusted | 0.6% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 48-68 |
| Gender | Male, 89.7% |
| Sad | 9.5% |
| Disgusted | 2% |
| Confused | 15.5% |
| Happy | 15.3% |
| Calm | 43.4% |
| Surprised | 6.5% |
| Angry | 7.8% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 38-59 |
| Gender | Male, 52.6% |
| Calm | 48.8% |
| Disgusted | 45.2% |
| Sad | 49.8% |
| Angry | 45.8% |
| Happy | 45.1% |
| Surprised | 45.2% |
| Confused | 45.1% |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 29 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| people portraits | 74% |
| food drinks | 10.1% |
| interior objects | 7.6% |
| events parties | 4.2% |
| paintings art | 3.1% |

Captions

Microsoft

created on 2019-06-05

| Caption | Confidence |
|---|---|
| a group of people sitting at a table | 91% |
| a group of people sitting around a table | 90.9% |
| an old photo of a group of people sitting at a table | 89.6% |