Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 42-60 |
| Gender | Female, 52.1% |
| Angry | 3.3% |
| Surprised | 0.5% |
| Disgusted | 0.4% |
| Confused | 1.1% |
| Calm | 36.5% |
| Fear | 3.5% |
| Happy | 0.6% |
| Sad | 54.1% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 98.6% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 100% |

Captions

Microsoft

created on 2020-05-02

| Caption | Confidence |
| --- | --- |
| a black and white photo of a man | 84.6% |
| a vintage photo of a man | 84.3% |
| black and white photo of a man | 78.6% |

OpenAI GPT

Created by gpt-4 on 2025-03-05

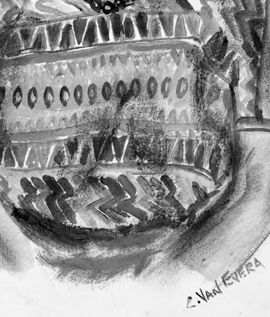

The image showcases a black and white drawing of a person dressed in a patterned top and adorned with a necklace. The person's hairstyle appears to be in braids or twists pulled back from the face. The drawing captures the upper body and arms of the person, which rest in front of them. The drawing displays shading and textural details indicative of fabric folds and jewelry. There is a signature at the bottom right that attributes the work to an artist, although the full name is not entirely clear from this view.

Created by gpt-4o-2024-05-13 on 2025-03-05

The image is a black and white portrait of a person with short, dark hair. They are wearing a patterned shirt with various geometric designs and a beaded necklace. The person is positioned with their arms slightly in front of them. The drawing is signed "C. VAN HETEREN" at the bottom right.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2025-03-03

The image appears to be a black and white sketch or drawing depicting the portrait of a middle-aged person with an Asian appearance. The subject is wearing a patterned, tribal-style shirt and has a serious, contemplative expression on their face. The drawing style is detailed and precise, capturing the features and details of the subject's face and clothing. The overall impression is one of cultural representation and introspection.

Created by claude-3-5-sonnet-20241022 on 2025-03-03

This is a black and white portrait drawing or sketch of someone wearing a patterned short-sleeve shirt with geometric designs including zigzags, triangles, and other traditional or tribal-style motifs. The person is also wearing what appears to be a beaded necklace. The artwork appears to be done in charcoal or graphite, showing good control of shading and detail. The background is plain white, focusing attention on the subject. The signature "S. Van Patter" can be seen at the bottom of the drawing.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-11

The image depicts a black-and-white pencil drawing of an older woman with dark hair, wearing a patterned blouse and a necklace. The woman is drawn in a realistic style, with attention to detail in her facial features and clothing. She has a serious expression on her face, with her mouth slightly open and her eyes looking directly at the viewer. The woman's hair is pulled back, revealing her forehead and eyebrows. Her blouse is short-sleeved and features a geometric pattern, with a mix of light and dark shades. A thick chain necklace adorns her neck, adding a touch of elegance to her overall appearance. The background of the image is plain white, which helps to focus attention on the woman's portrait. The artist's signature, "A. Vidal Rivera," is written in small letters at the bottom right corner of the image, indicating the creator of the artwork. Overall, the image presents a striking and detailed portrait of an older woman, showcasing the artist's skill and attention to detail in capturing the subject's likeness and expression.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-11

The image is a pencil drawing of a woman holding a large bowl. The woman has dark hair pulled back and is wearing a patterned shirt with a necklace. She is holding a large bowl in front of her, which appears to be made of clay or ceramic material. The bowl has a decorative pattern on it, with lines and shapes that resemble traditional pottery designs. The background of the image is white, with a black border around the edges. There is some text at the bottom of the image, but it is not legible. Overall, the image appears to be a portrait of a woman holding a bowl, possibly created by an artist or as part of a cultural or historical project.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-11

The image depicts a portrait drawing of an elderly woman. The drawing is in black and white, giving it a timeless and classic feel. The woman is dressed in traditional attire, with a patterned top that features intricate designs. She has short, gray hair and is wearing a necklace. The woman's facial expression is serious, and she appears to be looking directly at the viewer. The drawing is signed in the lower right corner, indicating the artist's name. The image conveys a sense of dignity and respect for the elderly woman, highlighting her traditional attire and serious expression.

Created by amazon.nova-pro-v1:0 on 2025-01-11

The image is a drawing of a person. The person is an adult female, wearing a patterned top and a necklace. The person has a serious expression on her face, and her hands are clasped together in front of her. The person's hair is tied back, and she is wearing a necklace. The person's face is slightly tilted to the side, and she is looking to the left. The background is plain white.