Machine Generated Data

Tags

Amazon

created on 2020-05-02

Clarifai

created on 2020-05-02

Imagga

created on 2020-05-02

| Tag | Confidence |
| --- | --- |
| sketch | 100 |
| drawing | 100 |
| representation | 100 |
| money | 28.1 |
| art | 28 |
| cash | 27.5 |
| currency | 26.9 |
| dollar | 26 |
| sculpture | 24.9 |
| paper | 23.5 |
| statue | 21.9 |
| ancient | 21.6 |
| banking | 20.2 |
| close | 20 |
| bank | 19.7 |
| bill | 19 |
| financial | 18.7 |
| finance | 18.6 |
| dollars | 18.4 |
| wealth | 18 |
| stone | 17.7 |
| old | 17.4 |
| business | 17 |
| religion | 16.1 |
| savings | 15.9 |
| architecture | 15.6 |
| hundred | 15.5 |
| history | 15.2 |
| one | 14.9 |
| finances | 14.5 |
| culture | 13.7 |
| face | 13.5 |
| us | 13.5 |
| loan | 13.4 |
| god | 13.4 |
| exchange | 13.4 |
| temple | 13.3 |
| carving | 12.7 |
| monument | 12.1 |
| rich | 12.1 |
| banknotes | 11.7 |
| bills | 11.7 |
| artistic | 11.3 |
| franklin | 10.8 |
| design | 10.7 |
| pay | 10.6 |
| detail | 10.5 |
| antique | 10.4 |
| ornament | 10.3 |
| pattern | 10.3 |
| church | 10.2 |
| closeup | 10.1 |
| texture | 9.7 |
| marble | 9.7 |
| decoration | 9.4 |
| religious | 9.4 |
| famous | 9.3 |
| economy | 9.3 |
| investment | 9.2 |
| vintage | 9.1 |
| human | 9 |
| style | 8.9 |
| color | 8.9 |
| concepts | 8.9 |
| carved | 8.8 |
| capital | 8.5 |
| historic | 8.3 |
| figure | 8.2 |
| fantasy | 8.1 |
| funds | 7.8 |
| banknote | 7.8 |
| spirituality | 7.7 |
| historical | 7.5 |
| effect | 7.3 |
| graphic | 7.3 |
| digital | 7.3 |
| success | 7.2 |
| black | 7.2 |
| futuristic | 7.2 |
| travel | 7 |

Google

created on 2020-05-02

| Tag | Confidence |
| --- | --- |
| Drawing | 94 |
| Sketch | 90.3 |
| Art | 86.2 |
| Facial hair | 76.7 |
| Figure drawing | 76.4 |
| Artwork | 75.9 |
| Painting | 71.9 |
| Visual arts | 69.9 |
| Illustration | 69.1 |
| Stock photography | 68.5 |
| Self-portrait | 65.1 |
| Jaw | 63 |
| Beard | 59 |
| Black-and-white | 56.4 |
| Still life | 54.5 |

Microsoft

created on 2020-05-02

| Tag | Confidence |
| --- | --- |
| sketch | 99.8 |
| drawing | 99.7 |
| art | 93 |
| text | 90.2 |
| child art | 78.8 |
| painting | 75.9 |
| human face | 69.3 |
| abstract | 67.3 |
| illustration | 58.3 |
| cartoon | 54.4 |
| linedrawing | 33.3 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

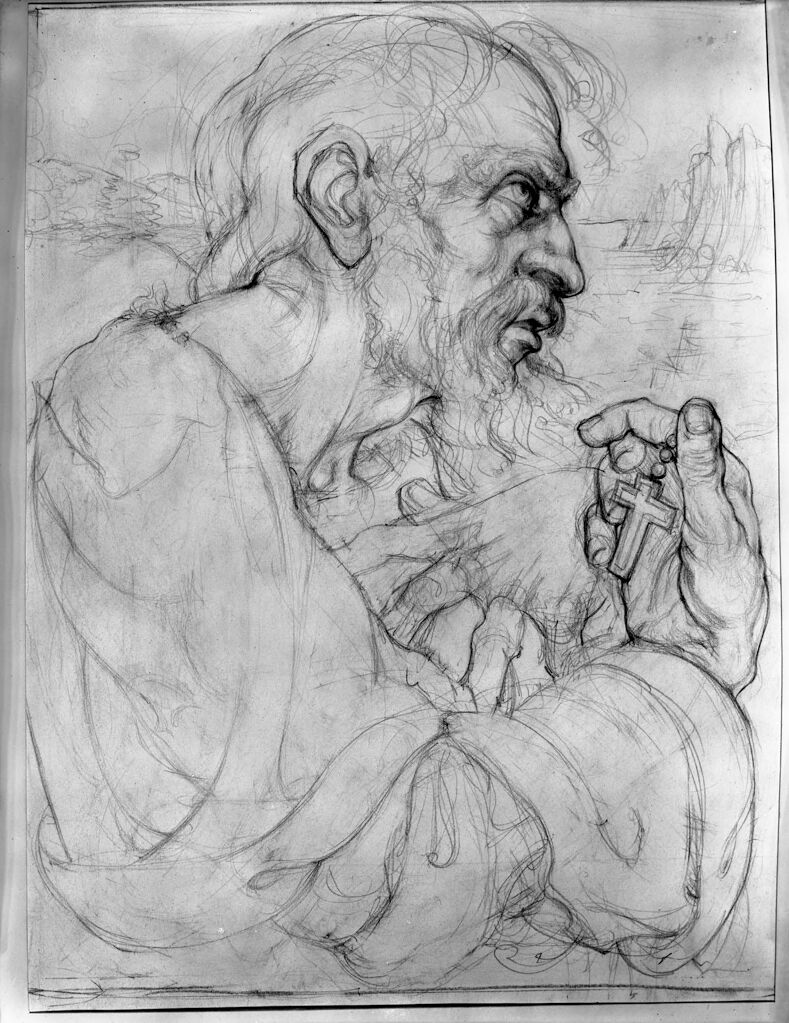

| Attribute | Value |
| --- | --- |
| Age | 36-52 |
| Gender | Male, 91.1% |
| Calm | 13.7% |
| Confused | 6.6% |
| Angry | 68.5% |
| Surprised | 0.7% |
| Disgusted | 9.6% |
| Happy | 0.1% |
| Sad | 0.6% |
| Fear | 0.2% |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 99.2% |

Captions

Microsoft

created on 2020-05-02

| Caption | Confidence |
| --- | --- |
| a drawing of a book | 37.6% |
| a drawing of a person | 37.5% |
| a drawing of a person | 37.4% |