Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-38 |
| Gender | Female, 95.1% |
| Disgusted | 1.4% |
| Surprised | 0.9% |
| Angry | 1.1% |
| Sad | 2.8% |
| Confused | 1.9% |
| Happy | 0.6% |
| Calm | 91.3% |

Feature analysis

Amazon

| Person | 99.5% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| people portraits | 97.1% |
| pets animals | 2% |
| paintings art | 0.5% |
| events parties | 0.2% |
| food drinks | 0.1% |

Captions

Microsoft

Created on 2018-03-23

| Caption | Confidence |
| --- | --- |
| a person sitting on a bench | 85.9% |
| a man and a woman sitting on a bench | 75.9% |
| a person sitting on a bench talking on a cell phone | 61.2% |

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-12-31

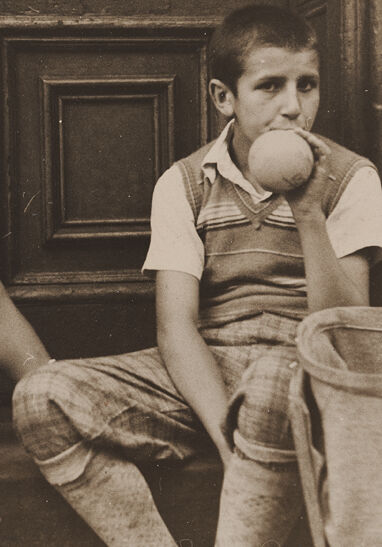

The image shows two young boys sitting on the steps of a building. One boy is sitting on the steps while the other is sitting next to him, appearing to be holding some sort of small object, possibly a ball. The boys are dressed in casual clothing from what appears to be an earlier time period, with the boy on the left wearing shorts and the boy on the right wearing a striped shirt. The background shows an old wooden door and wall, giving the image an antique and atmospheric feel.

Created by claude-3-opus-20240229 on 2024-12-31

The black and white photograph shows two young boys sitting on the steps of what appears to be an old wooden door or entryway. The boy on the left is seated slightly lower and has a serious expression on his face. The boy on the right, who looks a bit older, is holding a ball or round object up to his mouth and has a more relaxed, contemplative look. Both boys are wearing t-shirts and shorts that look typical of mid-20th century children's clothing styles. The worn, weathered wood and stone surroundings give the image a nostalgic, timeless quality, capturing a candid moment from the boys' childhood.

Created by claude-3-5-sonnet-20241022 on 2024-12-31

This is a vintage black and white photograph showing two young children sitting on what appears to be a doorstep or stoop. They're both wearing casual summer clothing - one in a white shirt and shorts, the other in a sleeveless sweater vest and pants. The older child is holding what looks like an apple or ball up to their mouth. Behind them is a large wooden door with decorative panels. The photograph has the characteristic sepia tones and grainy quality typical of mid-20th century photography, giving it a nostalgic feel. The casual, relaxed pose of the children suggests this was likely an informal, candid shot of everyday life.