Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 53-61 |
| Gender | Male, 99.8% |
| Sad | 100% |
| Surprised | 6.5% |
| Calm | 6.3% |
| Fear | 6% |
| Confused | 1.8% |
| Angry | 0.8% |
| Happy | 0.5% |
| Disgusted | 0.4% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 99.3% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| interior objects | 97% |
| pets animals | 2.5% |

Captions

Microsoft

Created by unknown on 2018-05-10

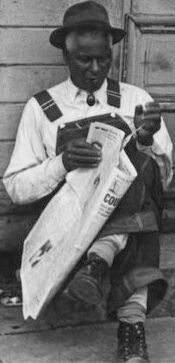

| Caption | Confidence |
| --- | --- |
| a person sitting on a bench in front of a building | 87.9% |
| a person sitting on a bench | 87.8% |
| a person sitting on a bench next to a building | 87.7% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-28

| Caption | Confidence |
| --- | --- |
| a photograph of two men sitting on the steps of a building | -100% |

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-24

This image is a black-and-white photograph of two men sitting on the steps of a building. The man on the left is wearing a hat, a white shirt, and overalls, and is holding a newspaper. The man on the right is wearing a hat, a jacket, and pants, and has his arms crossed. The background of the image shows a wooden building with a door and windows. The overall atmosphere of the image suggests that the men are taking a break or resting outside the building.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-24

The image depicts two men sitting on the steps of a building, with one man reading a newspaper and the other man looking at him. The man on the left is wearing a white shirt, dark pants, and a hat, while the man on the right is wearing a dark jacket, pants, and a hat. The building behind them has a wooden door and windows, and appears to be old and worn. The overall atmosphere of the image suggests a quiet, everyday moment in a small town or rural area.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-24

The black-and-white image features two men sitting on the steps of a building. The man on the left is wearing a hat and holding a newspaper, while the man on the right is wearing a cap and has his arms crossed. The building has a door with a lock, and a window is on the right side of the door. The men appear to be waiting for something or someone.

Created by amazon.nova-lite-v1:0 on 2025-05-24

The image shows two men sitting on a concrete step outside a building. The man on the left is holding a newspaper and appears to be reading it, while the man on the right is sitting with his arms crossed and looking to the side. Both men are wearing hats and shoes. The building has wooden walls and glass windows, and there is a door with a metal handle in the middle. The image has a black-and-white filter applied to it.

Google Gemini

Created by gemini-2.0-flash on 2025-05-22

In this black and white photograph, two men sit on the stoop of a building with a weathered and aged façade. The building's features include large, multi-paned windows flanking a central double door, all enclosed in a wooden frame with visible peeling paint and the texture of aged wood. The door is secured with a simple latch.

To the left, a man wearing a hat, suspenders over a long-sleeved shirt, and boots is engrossed in reading a newspaper. He is seated with his legs crossed, seemingly focused on the articles before him.

Beside him, another man, also wearing a cap and what appears to be overalls, sits with his arms crossed and legs outstretched. His expression is somber, suggesting a moment of quiet contemplation.

Both men are dressed in work clothes, implying they may be laborers taking a break. The setting is austere and perhaps indicative of a time of economic hardship, as reflected in the rundown appearance of the building and the simple clothing of the individuals. The high-contrast black and white tones of the image add to the sense of stark reality and emphasize the texture of the building and the subjects' clothing.

Created by gemini-2.0-flash-lite on 2025-05-22

Overall Impression:

The photograph is a black and white image capturing a moment in time. It appears to be an older photograph, likely from the early to mid-20th century, given the style and the subject matter. The setting seems to be a street in a rural or small-town environment.

Key Elements:

Subject: The central focus is two African-American men. One is seated and reading a newspaper, while the other is seated next to him, appearing contemplative.

Setting: They are sitting in front of what seems to be a weathered wooden building with a door in the center and two windows on either side. There's a set of steps or a small platform they're sitting on. The overall condition of the building and the surroundings suggests a place of economic hardship.

Clothing: The men are dressed in work clothing, suggesting they may be laborers or of modest means. The man reading has on overalls, a hat, and appears to be dressed up. The second man is wearing a newsboy cap and looks more worn and weary.

Mood/Atmosphere: The image evokes a sense of quiet reflection and possibly the difficulties faced by people in that era. The men's body language and the worn environment contribute to a somber mood.

Overall, the photograph is likely a documentary piece that offers a glimpse into the lives of working-class African-American men during a specific historical period.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-25

The image is a black-and-white photograph depicting two men sitting on the steps of a wooden building. The building appears to be old, with weathered wooden planks and large double doors.

The man on the left is wearing a hat and a light-colored shirt with darker stripes on the sleeves. He is holding and reading a newspaper, which is spread out in front of him. He is also wearing dark pants and shoes.

The man on the right is dressed in a cap, a dark jacket, and dark pants. He is sitting with his arms crossed over his knees and appears to be smoking a pipe. His posture is relaxed, and he is looking slightly downward.

The setting appears to be outdoors, possibly in a rural or small-town environment, given the simplicity and rustic nature of the building. The overall mood of the photograph is calm and reflective, capturing a quiet moment in the lives of these two individuals.