Machine Generated Data

Tags

Amazon

created on 2021-12-15

Clarifai

created on 2023-10-15

Imagga

created on 2021-12-15

| Tag | Confidence |
| --- | --- |
| kin | 55.4 |
| balcony | 35.2 |
| statue | 35 |
| architecture | 27.3 |
| sculpture | 26.9 |
| building | 20.4 |
| old | 19.5 |
| monument | 18.7 |
| history | 17 |
| marble | 16.6 |
| ancient | 16.4 |
| art | 16.3 |
| people | 16.2 |
| tourism | 15.7 |
| landmark | 15.3 |
| stone | 15.2 |
| historical | 15 |
| home | 14.3 |
| structure | 13.3 |
| travel | 12.7 |
| couple | 12.2 |
| man | 12.1 |
| famous | 12.1 |
| column | 11.9 |
| house | 11.7 |
| city | 10.8 |
| religion | 10.7 |
| vintage | 10.7 |
| world | 10.3 |
| wall | 10.3 |
| historic | 10.1 |
| family | 9.8 |
| portrait | 9.7 |
| grandfather | 9.6 |
| bride | 9.6 |
| women | 9.5 |
| love | 9.5 |
| symbol | 9.4 |
| happiness | 9.4 |
| culture | 9.4 |
| face | 9.2 |
| male | 9.2 |
| picket fence | 9.2 |
| detail | 8.8 |
| antique | 8.6 |
| two | 8.5 |
| window | 8.4 |
| church | 8.3 |
| wedding | 8.3 |
| person | 8.3 |
| fence | 8.2 |
| new | 8.1 |
| facade | 7.9 |
| carved | 7.8 |
| heritage | 7.7 |
| summer | 7.7 |
| sky | 7.6 |
| sibling | 7.5 |
| happy | 7.5 |
| style | 7.4 |
| exterior | 7.4 |
| lady | 7.3 |
| tourist | 7.2 |
| memorial | 7.2 |
| life | 7.2 |
| marimba | 7.1 |
| day | 7.1 |

Google

created on 2021-12-15

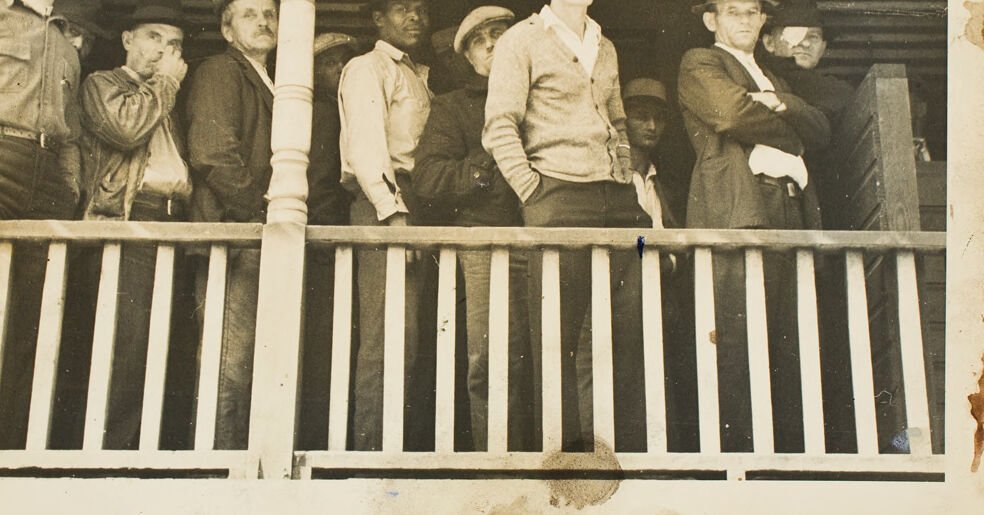

| Tag | Confidence |
| --- | --- |
| Wood | 86.2 |
| Fence | 84.3 |
| Rectangle | 80.4 |
| Tints and shades | 77.3 |
| Vintage clothing | 75.7 |
| Suit | 74.2 |
| History | 67.5 |
| Font | 67.4 |
| Art | 67 |
| Visual arts | 64.1 |
| Monochrome | 62.8 |
| Room | 61.5 |
| Sitting | 60.9 |
| Family reunion | 59.8 |
| Collection | 58.2 |
| Metal | 56.6 |
| Child | 54.3 |
| Photographic paper | 52.4 |
| Family | 52.1 |

Color Analysis

Face analysis

AWS Rekognition

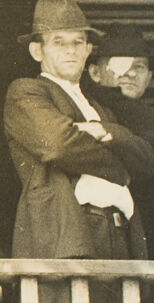

| Attribute | Value |
| --- | --- |
| Age | 23-37 |
| Gender | Male, 98.5% |
| Calm | 98.2% |
| Confused | 0.5% |
| Happy | 0.5% |
| Sad | 0.5% |
| Angry | 0.1% |
| Surprised | 0.1% |
| Fear | 0% |
| Disgusted | 0% |

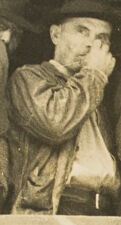

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 32-48 |
| Gender | Male, 98.4% |
| Calm | 85.8% |
| Angry | 8.7% |
| Sad | 2.1% |
| Fear | 1.2% |
| Confused | 1% |
| Surprised | 0.9% |
| Happy | 0.2% |
| Disgusted | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-35 |
| Gender | Male, 98.4% |
| Angry | 35.2% |
| Calm | 33% |
| Confused | 21.5% |
| Happy | 3.9% |
| Sad | 3.1% |
| Disgusted | 1.6% |
| Surprised | 1.1% |
| Fear | 0.6% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 35-51 |
| Gender | Female, 90.5% |
| Calm | 72.5% |
| Sad | 24.2% |
| Angry | 1.8% |
| Fear | 0.8% |
| Surprised | 0.3% |
| Confused | 0.2% |
| Happy | 0.1% |
| Disgusted | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 36-54 |
| Gender | Male, 97.3% |
| Calm | 98.7% |
| Surprised | 0.4% |
| Happy | 0.3% |
| Confused | 0.3% |
| Angry | 0.2% |
| Sad | 0.1% |
| Fear | 0% |
| Disgusted | 0% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 22-34 |
| Gender | Male, 94.6% |
| Calm | 92% |
| Happy | 3.8% |
| Sad | 1.5% |
| Angry | 0.8% |
| Confused | 0.7% |
| Disgusted | 0.5% |
| Surprised | 0.5% |
| Fear | 0.2% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 27-43 |
| Gender | Male, 95.6% |
| Calm | 87.1% |
| Sad | 6.2% |
| Happy | 2.2% |
| Confused | 1.8% |
| Angry | 1.4% |
| Disgusted | 0.5% |
| Fear | 0.4% |
| Surprised | 0.4% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 32-48 |
| Gender | Male, 96.2% |
| Calm | 93.7% |
| Angry | 2.4% |
| Sad | 1.4% |
| Happy | 1.1% |
| Confused | 0.7% |
| Surprised | 0.3% |
| Fear | 0.3% |
| Disgusted | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 8-18 |
| Gender | Male, 71% |
| Calm | 95.8% |
| Happy | 2.5% |
| Sad | 0.9% |
| Confused | 0.3% |
| Angry | 0.2% |
| Disgusted | 0.1% |
| Surprised | 0.1% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 24-38 |
| Gender | Male, 93.9% |
| Calm | 41.3% |
| Surprised | 35.9% |
| Confused | 13.4% |
| Sad | 4.8% |
| Angry | 2.8% |
| Fear | 1.1% |
| Disgusted | 0.4% |
| Happy | 0.2% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 44 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 37 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 26 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 45 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 34 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 23 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very likely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 95.7% |
| streetview architecture | 1.8% |
| people portraits | 1.6% |

Captions

Microsoft

created on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a group of people sitting on a bench posing for the camera | 94.7% |
| a group of people sitting on a bench in front of a building | 92.6% |
| a group of people sitting on a bench | 92.5% |