Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-43 |
| Gender | Female, 86.1% |
| Calm | 28% |
| Surprised | 1.3% |
| Happy | 0.9% |
| Sad | 65.4% |
| Disgusted | 1.1% |
| Confused | 1.9% |
| Angry | 1.4% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Painting | 99.7% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 94.3% |
| interior objects | 2.2% |
| macro flowers | 1.8% |
| food drinks | 1.1% |
| people portraits | 0.3% |
| cars vehicles | 0.1% |
| pets animals | 0.1% |
| text visuals | 0.1% |
| beaches seaside | 0.1% |

Captions

Microsoft

Created on 2018-03-16

| Caption | Confidence |
| --- | --- |
| a painting of a person | 77.2% |
| a painting of a person lying on a leather surface | 39.6% |
| a painting of a person lying on the ground | 39.5% |

OpenAI GPT

Created by gpt-4 on 2024-01-27

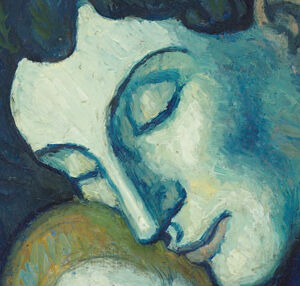

This is a vivid oil painting depicting a figure cloaked in deep blue garments seated against a muted background of contrasting color tones. The painting captures rich shades of blue enveloping the subject, creating a sense of depth and movement within the folds of the fabrics draped around the form. The background consists of vertical strokes in a cool palette, juxtaposed against the warmth of the figure's clothing. The artist has signed the painting in the upper right corner.

Anthropic Claude

Created by claude-3-haiku-48k-20240307 on 2024-03-29

The image depicts a female figure, likely a mother, cradling a child in her arms. They are both shrouded in a large, blue cloak or garment that dominates the composition. The figures are rendered in a stylized, almost cubist manner, with angular features and bold, expressive brushwork. The background suggests a forested or natural setting, with hazy, abstracted forms in shades of blue and green. The overall mood is one of tenderness and protective embrace, with the mother's nurturing gesture towards the child at the center of the painting.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-26

This image is a painting of a woman and child, created by Pablo Picasso in 1901. The painting is titled "Mother and Child" and is an example of Picasso's early work, which was influenced by the Blue Period.

**Key Features:**

* **Color Palette:** The painting features a predominantly blue color palette, with shades of blue and green used to create a somber and melancholic mood.
* **Composition:** The composition of the painting is simple, with the woman and child seated in a quiet, introspective pose.
* **Style:** The style of the painting is characterized by bold brushstrokes and a focus on form and structure, which are hallmarks of Picasso's early work.
* **Emotional Tone:** The emotional tone of the painting is one of sadness and introspection, which is reflected in the somber color palette and the quiet, contemplative pose of the woman and child.

**Overall:** "Mother and Child" is a beautiful and evocative painting that showcases Picasso's early style and technique. The painting's somber color palette and introspective mood create a sense of sadness and contemplation, making it a powerful and moving work of art.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-24

The image depicts a painting by Pablo Picasso, titled "Mother and Child" (1901). This artwork is a representation of Picasso's early work in the Blue Period, characterized by its somber color palette and focus on themes of poverty, suffering, and social isolation. The painting features a mother and child in a blue-toned setting, emphasizing the emotional depth and intimacy of the scene. The use of blue dominates the palette, creating a sense of melancholy and introspection. The mother is depicted with her head resting on the child's head, conveying a sense of protection and love. The child's face is turned away from the viewer, adding to the sense of privacy and vulnerability. The background is minimal, with only a few brushstrokes suggesting the presence of a room or a space. Overall, the painting captures a poignant moment of maternal love and care, set against a backdrop of social and economic hardship.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-04

The painting depicts a mother and child. The mother is sitting on the floor and holding the child close to her chest. Both of them are sleeping. The mother is wearing a blue robe, and the child is wearing a white shirt. The background is painted in blue and green. The painting is titled "Mother and Child" by Pablo Picasso.

Created by amazon.nova-lite-v1:0 on 2025-01-04

The image depicts a painting titled "Mother and Child" by Pablo Picasso. The painting is a poignant representation of a mother embracing her child, both of whom are rendered in shades of blue. The mother is depicted in a seated position, with her arms wrapped around the child, who is resting against her chest. The mother's face is turned towards the child, and her expression conveys a sense of tenderness and love. The child's face is also turned towards the mother, and its expression is one of contentment and peace. The painting is framed in a simple, rectangular frame, and the background is a solid blue color that complements the overall color scheme of the painting.