Machine Generated Data

Tags

Amazon

created on 2022-07-01

Clarifai

created on 2025-01-09

| Tag | Confidence (%) |
| --- | --- |
| people | 99.8 |
| adult | 98 |
| one | 98 |
| music | 97.4 |
| administration | 97.3 |
| man | 96.6 |
| leader | 96.6 |
| portrait | 95.5 |
| musician | 95.1 |
| wear | 92.5 |
| profile | 91.4 |
| two | 85.6 |
| singer | 84.4 |
| art | 84 |
| actor | 81 |
| writer | 79.5 |
| microphone | 78.8 |
| monochrome | 77.7 |
| book series | 75.6 |
| group | 74.3 |

Imagga

created on 2022-07-01

Google

created on 2022-07-01

| Tag | Confidence (%) |
| --- | --- |
| Art | 78.4 |
| Collar | 77.2 |
| Blazer | 77.1 |
| Vintage clothing | 73.4 |
| Monochrome photography | 71.7 |
| Suit | 69.2 |
| Monochrome | 68.2 |
| Formal wear | 68.2 |
| Stock photography | 64.7 |
| Pianist | 62.9 |
| Recreation | 62 |
| Paper product | 60.3 |
| Paper | 60 |
| Uniform | 58.6 |
| White-collar worker | 57.7 |
| History | 56.6 |
| Retro style | 54.7 |
| Jazz pianist | 53.7 |
| Portrait | 51.7 |
| Sitting | 51.6 |

Microsoft

created on 2022-07-01

| Tag | Confidence (%) |
| --- | --- |
| person | 96.4 |
| text | 96 |
| man | 93.9 |
| indoor | 89.5 |
| black and white | 52 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

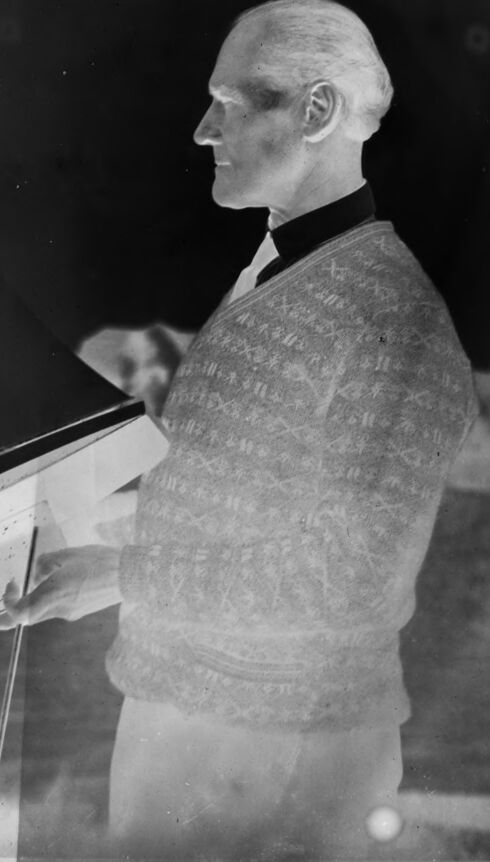

| Attribute | Value |
| --- | --- |
| Age | 50-58 |
| Gender | Male, 90.8% |
| Calm | 97.7% |
| Surprised | 6.3% |
| Fear | 5.9% |
| Sad | 2.9% |
| Happy | 0% |
| Angry | 0% |
| Confused | 0% |
| Disgusted | 0% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

Person

| Feature | Confidence |
| --- | --- |
| Person | 97.9% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 52.6% |
| events parties | 26.5% |
| pets animals | 6.1% |
| people portraits | 6% |
| streetview architecture | 3.4% |
| food drinks | 2.6% |
| interior objects | 2.3% |

Captions

Microsoft

created on 2022-07-01

| Caption | Confidence |
| --- | --- |
| a man standing in front of a laptop | 68.9% |
| a man standing in front of a computer | 68.8% |
| a man that is standing in front of a laptop | 62.4% |

Text analysis

電動

A

408

10*760110

電動

A

408

10

*

760110