Machine Generated Data

Tags

Color Analysis

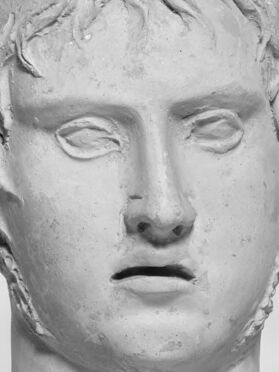

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 21-29 |
| Gender | Male, 99.8% |
| Angry | 36.1% |
| Disgusted | 22.7% |
| Confused | 14.2% |
| Fear | 12.3% |
| Surprised | 7.1% |
| Sad | 5.6% |
| Calm | 5.3% |
| Happy | 1% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 93.8% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 97.6% |
| people portraits | 2.4% |

Captions

Microsoft

Created by unknown on 2022-06-10

| Caption | Confidence |
| --- | --- |
| an old photo of a man | 82.3% |
| a man posing for a photo | 77.5% |
| old photo of a man | 77.4% |

Clarifai

Created by general-english-image-caption-blip on 2025-04-29

| Caption | Confidence |
| --- | --- |
| a photograph of a head and shoulders of a statue of a man | -100% |

OpenAI GPT

Created by gpt-4 on 2025-02-18

This is a black and white photograph of a classical-style sculpture. The sculpture appears to be a bust, typically depicting the upper part of the human figure including the head, neck, and a portion of the shoulders. The sculpture is positioned on a dark, polished base which enhances its display. The figure has detailed, draped clothing on its shoulders suggesting a historical or significant figure often seen in classical busts. The material used for the sculpture is not clear from the image, but it resembles stone or marble, which are common materials for such art. The craftsmanship of the hair and clothing indicates a high level of skill, implying that it might be an artwork of considerable age or made to replicate that era's style.

Created by gpt-4o-2024-05-13 on 2025-02-18

This image depicts a marble sculpture of a head with detailed ear carvings and a neck, mounted on a rectangular plinth. The background is a plain, neutral tone which helps to highlight the artistic details of the sculpture's form and texture.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2025-02-18

The image shows a black and white photograph of a classical marble sculpture head. The head has an intricate hairstyle with curls and waves, characteristic of ancient Greek or Roman sculpting. The expression on the face is somber and contemplative, with a slightly furrowed brow and downcast eyes. The sculpture appears to be a bust, mounted on a simple black pedestal. The image captures the detailed craftsmanship and timeless beauty of this classical work of art.

Created by claude-3-opus-20240229 on 2025-02-18

The image shows a sculpture of a human head made of white marble or a similar material. The sculpture appears to be from ancient Greece or Rome based on the classical style. The face has idealized, serene features with wavy hair pulled back from the forehead. The eyes are blank without pupils and the lips are pursed. The head is mounted on a dark pedestal or base against a plain light background. Overall, it is a striking ancient portrait bust typical of Greco-Roman art depicting an unnamed figure.

Created by claude-3-5-sonnet-20241022 on 2025-02-18

This is a black and white photograph of a classical marble or stone bust sculpture. The sculpture appears to be from ancient Roman or Greek times, showing the head and neck of a young figure with short, wavy hair styled in a typical classical manner. The sculpture has idealized features characteristic of Greco-Roman art, with a serene expression and smooth facial features. The bust is mounted on what appears to be a dark, square display base. The image has a neutral background and appears to be a museum or gallery documentation photograph. The sculpture shows excellent craftsmanship in its detailed carving and preservation, though there appears to be some weathering or age-related wear visible on the surface of the stone.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-14

The image presents a black-and-white photograph of a marble bust of a man's head, likely originating from ancient Greece or Rome. The bust is displayed on a dark pedestal, set against a light-colored background.

Key Features:

- Marble Bust: The bust is crafted from white marble, featuring a smooth texture and subtle imperfections. It depicts the head of a man with short hair, his eyes closed, and a neutral expression.

- Pedestal: The bust is mounted on a dark, rectangular pedestal, which provides a striking contrast to the light-colored background.

- Background: The background of the image is a light color, allowing the marble bust to take center stage. The overall effect is one of simplicity and elegance, highlighting the beauty of the ancient artwork.

Artistic and Historical Significance:

- Ancient Origins: The bust is likely a replica of an ancient Greek or Roman sculpture, showcasing the artistic skills of the time.

- Marble Material: The use of marble as the primary material for the bust reflects the value placed on this stone in ancient times.

- Expression: The neutral expression on the man's face adds to the sense of serenity and calmness conveyed by the image.

Overall Impression:

The image presents a serene and elegant representation of an ancient marble bust, highlighting the beauty of the artwork and the cultural significance of the time period from which it originates.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-14

The image depicts a marble bust of a man's head, which appears to be an ancient Greek or Roman sculpture. The bust is positioned on a black pedestal, and the background is white.

Key Features:

- Marble Bust: The bust is crafted from marble and features a man's head with short hair.

- Pedestal: The bust is placed on a black pedestal, which provides a striking contrast to the white background.

- Background: The background of the image is white, which helps to highlight the details of the bust.

- Ancient Style: The style of the bust suggests that it may be an ancient Greek or Roman sculpture.

Overall Impression:

The image presents a striking and elegant representation of an ancient marble bust. The use of a black pedestal and a white background effectively highlights the details of the bust, creating a visually appealing image.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-18

The image shows a black-and-white photograph of a sculpture of a head, possibly of a man. The sculpture is placed on a black pedestal. The head is carved in stone and depicts a man with short hair and a serious expression. The man's eyes are closed, and his mouth is slightly open. The sculpture appears to be an ancient Greek statue, possibly of a god or a hero. The image is captured from a low angle, and the sculpture is the main focus of the image.

Created by amazon.nova-pro-v1:0 on 2025-02-18

The image is a black-and-white photograph of a sculpture of a human head. The sculpture is a bust, meaning it depicts only the head and neck of a person. The head is positioned on a pedestal, which is rectangular in shape and has a flat top surface. The sculpture is made of stone, and it appears to be weathered, suggesting it may be an ancient artifact. The head is of a young man with short hair and a serious expression. The eyes are closed, and the mouth is slightly open, giving the impression of contemplation or thought. The sculpture is well-lit, and the details of the face are clearly visible. The background of the image is plain and does not distract from the sculpture. Overall, the image conveys a sense of history and artistry, highlighting the beauty and craftsmanship of the sculpture.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-02

Certainly! Here's a description of the image:

Overall Impression:

The image is a black and white photograph of a classical sculpture. The subject is a bust, likely made of stone or plaster, depicting a human head. It is placed on a black rectangular pedestal against a plain, bright background.

Details:

- Sculpture: The bust appears to be of a young man. The features are idealized and exhibit classical Greek or Roman influences. The hair is short and styled in a somewhat curly manner. The facial features are detailed, including closed eyes.

- Material & Texture: The sculpture appears to be made of a material that is lighter in tone and may have a somewhat textured surface.

- Pedestal: The black pedestal the sculpture is mounted on provides a strong contrast, drawing attention to the bust.

- Lighting: The lighting is fairly even, creating soft shadows and highlighting the form of the sculpture.

- Framing: The photograph is well-framed, showing the bust in its entirety with a neutral background.

- Style: The aesthetic is elegant and formal.

Let me know if you'd like a more detailed analysis or have any other questions about the image!

Created by gemini-2.0-flash on 2025-05-02

The image shows a grey clay sculpture of a man's head and neck. The sculpture is set upon a glossy black plinth. The face of the sculpture is relatively smooth and well-defined, with a prominent nose and an expression that is both neutral and dignified. The hair, styled in short, wavy strands, frames the forehead and adds a touch of informality to the otherwise stoic countenance. The eyes are slightly closed and have small slits that might be the irises. The backdrop is a muted white, which helps to highlight the texture and features of the sculpture. The image has a classic feel, typical of photographs of ancient art or historical artifacts.