Machine Generated Data

Face analysis

Amazon

AWS Rekognition

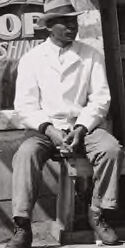

| Attribute | Value |
| --- | --- |
| Age | 35-52 |
| Gender | Male, 52.1% |
| Sad | 46.3% |
| Disgusted | 47% |
| Angry | 45.5% |
| Happy | 45.3% |
| Surprised | 45.3% |
| Calm | 50.4% |
| Confused | 45.2% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 99.9% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| cars vehicles | 99.7% |
| paintings art | 0.1% |
| food drinks | 0.1% |

Captions

Microsoft

created on 2018-03-16

| Caption | Confidence |
| --- | --- |
| a truck is parked in front of a store | 84% |
| a truck is parked in front of a store window | 79.9% |
| a car parked in front of a store | 79.8% |

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-12-31

The image appears to be a black and white photograph of a typical small-town street scene from an earlier time period, likely the early to mid-20th century. It shows an old wooden building with a "Barber Shop" sign and a vintage automobile parked in front. Several men are seated on benches outside the shop, dressed in the clothing styles of that era. The overall scene conveys a sense of a quiet, rural community from a bygone era.

Created by claude-3-opus-20240229 on 2024-12-31

The black and white image depicts a group of men, likely from the early to mid 20th century based on their clothing and the vintage car, gathered outside a rustic wooden building with signs for a barber shop and shoe shining service. The men are sitting and standing around casually, some wearing hats. An old automobile is parked in front of the dilapidated structure, which has weathered boards and tattered curtains or laundry hanging in the windows. The scene captures a slice of everyday life from a bygone era in what appears to be a rural or small town setting.

Created by claude-3-5-sonnet-20241022 on 2024-12-31

This is a black and white historical photograph showing a street scene outside a barber shop. Several people are sitting on what appears to be a bench or ledge outside the establishment, which has a "BARBER SHOP SHOE SHINE" sign visible. A vintage car, likely from the 1920s or early 1930s, is parked in front of the shop. The building appears weathered, with wooden siding and posts supporting an overhang. There are some advertisements or posters visible on the wall, including what appears to be a Camel cigarette advertisement. Laundry or clothing can be seen hanging above. The scene captures everyday life during what appears to be the Great Depression era in America.