Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 43-51 |
| Gender | Male, 100% |
| Calm | 97.8% |
| Surprised | 6.4% |
| Fear | 5.9% |
| Sad | 2.2% |
| Angry | 0.6% |
| Confused | 0.3% |
| Disgusted | 0.3% |
| Happy | 0.2% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 99.6% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 91.2% |
| people portraits | 8.4% |

Captions

Microsoft

Created by unknown on 2019-02-26

| Caption | Confidence |
| --- | --- |
| an old photo of a person | 49.5% |
| a person taking a selfie in a room | 35.8% |
| a person in a room | 35.7% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-24

| Caption | Confidence |
| --- | --- |
| a photograph of a portrait of a man in a suit and tie | -100% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2025-01-28

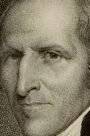

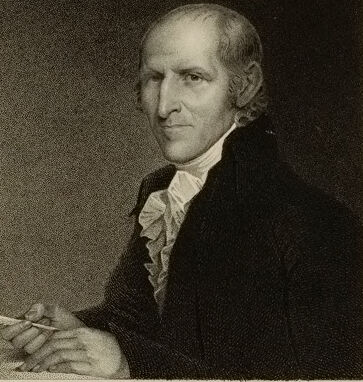

The image depicts an engraving of an individual dressed in formal attire, including a dark coat, a white cravat, and ruffled shirt. The individual's hands are clasped together. Below the portrait, the name "TIMOTHY PICKERING" is inscribed, followed by a signature-style script of "Pickering." The engraving appears to be of historical nature and is framed by a simple border on a light background. Additional text at the bottom reads "Engraved by Wm. E. Marshall from a drawing by H. Inman, after Stuart" and "Given to the Grapy. with by L. Plass."

Created by gpt-4 on 2025-01-28

The image is a classic portrait in black and white, depicting a person dressed in period clothing which suggests it is from an earlier century. This individual is wearing a dark jacket with a high collar and what appears to be a white shirt or cravat. The subject's attire and the style of the print suggest formality and distinction, characteristics common in historical portraits of notable figures.

Below the portrait, there is text that seems to imply the significance or recognition of the person depicted. The words are presented in a traditional font that matches the era suggested by the clothing, underscoring the historical context of the image. There's also a handwritten signature that contributes to the personal and authentic nature of the portrait. The appearance of the paper itself, with its clean background and aged coloration, provides a further indication that this is a reproduction of an older print or engraving.

The portrait is centered on a larger sheet of paper, which frames the image nicely and provides space for the text below the image, adding to the overall formal presentation.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2025-01-28

This is a historical engraved portrait from what appears to be the late 18th or early 19th century. The artwork shows a figure in formal attire of that period, including a dark coat and a white ruffled shirt or cravat. The portrait is rendered in a classical style typical of the era, with careful attention to shading and detail. Below the portrait is a signature reading "T Pickering." The overall composition is formal and dignified, as was common for portrait engravings of prominent figures from this time period. The image quality suggests this is a reproduction of a historical document or artwork.

Created by claude-3-opus-20240229 on 2025-01-28

The image shows a black and white portrait engraving or etching of an elderly gentleman from an earlier historical era, likely the 18th or early 19th century based on his clothing and hairstyle. He has white hair pulled back, and is wearing a dark suit coat with a ruffled collar or cravat. His right hand is positioned near his chest. Below the portrait is a printed signature that appears to read "P. Pickering". The portrait is presented on an off-white background or paper.

Created by claude-3-haiku-20240307 on 2025-01-28

The image appears to be a portrait of an older man wearing formal attire, likely from the 19th century. The man has a distinguished appearance with a stern expression on his face. He is wearing a dark coat and a white ruffled shirt, suggesting he is from a higher social class. The portrait is presented in a black and white style, giving it an antique feel.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-01-28

The image is a portrait of an older man, likely from the 18th or early 19th century. The man is depicted wearing a dark jacket with a white ruffled shirt underneath, and he holds a quill pen in his right hand. He appears to be seated at a desk or table, although the surface is not visible in the image.

The background of the portrait is a dark color, which helps to highlight the subject's features. The overall effect of the image is one of dignity and seriousness, suggesting that the man was a person of importance or authority.

At the bottom of the image, there is some text that reads "Given to the Gray walls by L. Throop." This suggests that the portrait was given to an institution or organization called "Gray walls" by someone named L. Throop. The exact meaning of this text is unclear, but it may indicate that the portrait was donated to a museum or other cultural institution.

Overall, the image is a formal portrait of an older man, likely from the 18th or early 19th century. It suggests that the man was a person of importance or authority, and it provides some clues about the context in which the portrait was created.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-28

The image depicts a historical portrait of Thomas Jefferson, the third President of the United States, known for his significant contributions to American independence and governance. The portrait is a black-and-white engraving, likely from the early 19th century, showcasing Jefferson in a formal and dignified manner. He is seated with a composed expression, wearing a dark suit with a white shirt and a cravat around his neck. The engraving is bordered by a simple frame, and the background is plain, focusing attention on Jefferson's figure. The portrait is signed by the artist, "T.S. Wood," and includes a handwritten dedication at the bottom, "Given to the Gray Library by L. Thomas." This dedication suggests that the portrait was a gift to a library named the Gray Library, possibly indicating its historical significance and the intention to preserve it for educational purposes. The image captures Jefferson's legacy as a statesman and his enduring impact on American history.

Created by amazon.nova-pro-v1:0 on 2025-01-28

The image is a portrait of a man. The man is wearing a black coat with a white shirt and a white ruffled collar. He is also wearing a white necktie. The man is holding a pen in his right hand and a piece of paper in his left hand. The man's face is slightly turned to the right, and he is looking at something in front of him.