Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-38 |
| Gender | Female, 50.3% |
| Happy | 45% |
| Confused | 45.1% |
| Calm | 45% |
| Disgusted | 54.6% |
| Angry | 45.1% |
| Sad | 45.1% |
| Surprised | 45.1% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Person | 98.1% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 46.6% |
| streetview architecture | 42.6% |
| nature landscape | 10% |
| beaches seaside | 0.6% |
| interior objects | 0.1% |
| macro flowers | 0.1% |

Captions

Microsoft

Created on 2018-04-19

| Caption | Confidence |
| --- | --- |
| a vintage photo of a person | 79.8% |
| a vintage photo of a book | 57.8% |
| a vintage photo of a group of people looking at a book | 40.9% |

OpenAI GPT

Created by gpt-4 on 2024-12-11

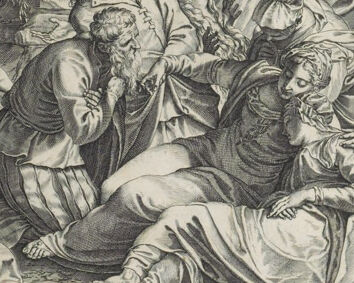

This image appears to be a detailed black and white engraving depicting the crucifixion of Jesus. Jesus is shown nailed to the cross, which is placed at the center of the composition, with a sign labeled "INRI" above His head. His body is lean and muscular, and there is an expression of suffering on His face. Surrounding the cross is a crowd of various figures engaged in diverse activities and expressions of emotion. The people on the ground display a range of responses; some are in active movement, while others appear distressed or mournful. In the foreground, there are individuals who seem to be grieving; a group is shown clustered close together in sorrow. Other figures are depicted in the act of casting lots for Jesus' garments, as indicated by their focused attention on pieces of fabric. Additionally, soldiers and onlookers are shown in various states of engagement, some observing the crucifixion, and others involved in conversation or actions. The scene is set against a dramatic sky with clouds, which adds to the overall intensity of the scene. The attention to detail in the clothing, physical expressions, and the drama unfolding suggests that this is a work of a skilled artist, aiming to capture the solemn and tragic nature of the crucifixion narrative. Engravings such as this were commonly used for religious education and reflection. The text below the image is likely to be explanatory or scriptural references, but it's too small to read clearly in this representation.

Created by gpt-4o-2024-05-13 on 2024-12-11

This is a detailed and dramatic depiction of the Crucifixion of Jesus Christ, a common theme in Christian art. The central figure, Jesus, is shown nailed to the cross, wearing a crown of thorns and a loincloth. Above his head is the inscription "INRI," which stands for "Iesus Nazarenus, Rex Iudaeorum" (Jesus of Nazareth, King of the Jews). Surrounding Jesus are various other figures, each engaged in different activities related to the crucifixion event:

1. To Jesus' right and left are the two thieves who were crucified alongside him. One thief is in the process of being nailed to his cross.
2. In the foreground, several mourners are depicted grieving at the sight of the crucifixion. Among them, a woman who appears to have fainted, possibly the Virgin Mary, is being supported by others.
3. Soldiers and other onlookers are present, some holding spears and others engaged in conversation or actions related to the crucifixion.
4. A figure on horseback seems to be supervising the proceedings or delivering instructions.

The background consists of a barren landscape, which is conventional in scenes of the crucifixion, adding to the somber tone of the artwork. The overall scene is highly expressive, capturing the anguish, pain, and solemnity associated with this pivotal moment in Christian theology. The style suggests that this is a historical piece, possibly a detailed engraving or etching with intricate line work characteristic of Renaissance or later European religious artwork.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-12-11

The image depicts a dramatic religious scene, centered on a large crucified figure with outstretched wings, presumably representing Christ. Surrounding the central figure are numerous figures, some kneeling and embracing the crucified one, while others appear to be engaged in various actions, including carrying away the body. The scene takes place in an outdoor setting with mountains and other natural elements in the background. The overall composition and rendering suggest this is an artistic rendering of a religious subject, likely inspired by Christian iconography and themes.

Created by claude-3-5-sonnet-20241022 on 2024-12-11

This is a historical engraving depicting the crucifixion scene. The central figure shows Christ on the cross, surrounded by a chaotic and dramatic scene of figures in various states of emotion and action. The artwork is rendered in black and white, showing great detail and skilled line work typical of Renaissance or Baroque period engravings. The composition is dynamic, with figures positioned around the base of the cross, some appearing to be in mourning while others are engaged in more aggressive actions. In the background, there's a mountainous landscape, and various figures can be seen carrying implements and moving about the scene. The style appears to be from the 16th or 17th century, with characteristic dramatic lighting and emotional intensity typical of religious art from this period. The inscription "INRI" is visible at the top of the cross, and there appears to be text in Latin at the bottom of the print. The engraving demonstrates sophisticated artistic technique in its detailed rendering of fabrics, facial expressions, and the overall dramatic atmosphere of this pivotal biblical scene.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-11

The image depicts a scene from the Crucifixion of Jesus Christ, as depicted in the painting "The Crucifixion" by Albrecht Dürer. The painting shows Jesus Christ on the cross, surrounded by a group of people, including his mother Mary, John the Apostle, and other figures. The scene is set against a backdrop of a rocky landscape, with a dark and ominous sky.

In the foreground, Jesus is shown in the center of the composition, his body contorted in agony as he hangs from the cross. His arms are outstretched, and his head is tilted back, with his eyes closed in a moment of intense suffering. His legs are bent at the knees, and his feet are nailed to the cross.

To the left of Jesus, Mary is shown kneeling on the ground, her hands clasped together in prayer. She is dressed in a long, flowing robe, and her face is contorted in grief. John the Apostle is shown standing behind Mary, his arms wrapped around her shoulders in a gesture of comfort and support.

In the background, a group of soldiers and other figures are shown gathered around the cross, looking on with a mixture of curiosity and indifference. Some of them are shown holding spears or other weapons, while others are shown simply observing the scene.

The overall mood of the painting is one of intense drama and emotion, capturing the moment of Jesus' crucifixion in a powerful and evocative way. The use of dark colors and dramatic lighting adds to the sense of tension and foreboding, creating a sense of urgency and importance around the event.

The painting is a masterpiece of Renaissance art, showcasing Dürer's skill and technique as a painter. It is a powerful and moving depiction of one of the most significant events in Christian history, and it continues to be admired and studied by art lovers around the world today.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-11

The image is a black and white drawing of the crucifixion of Jesus Christ.

**Key Elements:**

* **Jesus on the Cross:** Jesus is depicted in the center of the image, nailed to a cross with his arms outstretched and his head tilted back. He is wearing a loincloth and has a crown of thorns on his head.
* **Crowd of People:** A crowd of people is gathered around the cross, some of whom are kneeling or lying on the ground. They appear to be mourning or praying.
* **Horses and Soldiers:** In the background, there are horses and soldiers, who are likely Roman soldiers who have been sent to guard the crucifixion.
* **Landscape:** The landscape behind the cross is barren and rocky, with hills and mountains in the distance.

**Overall Impression:** The image conveys a sense of sadness and tragedy, as Jesus is being crucified for his beliefs. The crowd of people around him adds to the sense of drama and emotion, while the horses and soldiers in the background provide a sense of context and setting. The image is likely intended to evoke feelings of sympathy and compassion in the viewer.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-26

This image is a black-and-white engraving of Jesus Christ on the cross. The cross is placed on a hill, and Jesus is nailed to it with his arms outstretched. The engraving is titled "The Passion of Christ." The image is surrounded by people who are either kneeling or standing, and some of them are holding weapons. In the distance, there are mountains and a river.

Created by amazon.nova-pro-v1:0 on 2025-02-26

The image is a black-and-white illustration of Jesus Christ on the cross. The scene is set in a mountainous area with a dark sky. Jesus is nailed to the cross, and soldiers are around him. Some soldiers are holding sticks, and others are kneeling on the ground. In the foreground, a group of people, including a woman and a man, are kneeling and praying. The illustration has a watermark in the bottom left corner.