Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 17-29 |
| Gender | Male, 50.2% |
| Calm | 49.6% |
| Disgusted | 49.6% |
| Sad | 49.5% |
| Angry | 50.3% |
| Confused | 49.5% |
| Fear | 49.6% |
| Happy | 49.5% |
| Surprised | 49.5% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 98.9% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 99.2% |

Captions

Microsoft

Created by unknown on 2019-10-29

| Caption | Confidence |
| --- | --- |
| a black sign with white text | 33.6% |
| a black sign with white letters | 30.6% |
| a white sign with black text | 30.5% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-24

| Caption | Confidence |
| --- | --- |
| a photograph of a drawing of a man sitting in a chair | -100% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2025-01-29

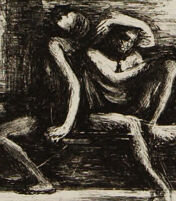

This image is a lithograph by the artist George Bellows, created in 1921 and titled "Electrocution." The artwork is rendered in black and white, depicting a stark scene that likely illustrates a moment of execution or its aftermath.

In the composition, two despondent figures are seated near the center of the image, with one figure resting their head in their hand, conveying a sense of distress or mourning. Another figure stands to the right, appearing to be cloaked, and possibly symbolizes an authoritative or somber presence. A fourth figure is positioned in the background, visible through a large window opening, leaning with an air of detachment or contemplation. The setting has an austere and confining feel, with rough, textural lines depicting the interior space's brick walls and floor, adding to the grim atmosphere of the scene.

Created by gpt-4 on 2025-01-29

This image appears to be a black and white etching or print. The composition shows an interior scene with strong contrasts between light and shadow. The artistic style is expressive, with visible etching lines that give texture to the image.

In the foreground on the left, there is a seated figure with their head resting on one hand, suggesting a posture of deep thought or sorrow. To the right and in the background, there's a window through which another figure is visible; this person is seated and seems to be looking into the room or possibly through another window on the opposite side. The atmosphere is somber and contemplative.

There is a signature at the bottom right-hand side of the print, suggesting the artist's name and possibly a date, although it's not legible in the image provided. The bottom left seems to have the title of the work or another form of text, but that too is not clear enough to discern.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2025-01-29

This appears to be a dark, moody etching or drawing showing an interior scene. The composition shows several figures in what seems to be a stark, prison-like setting with bare walls. There's a figure slumped or resting against a wall in the foreground, another figure standing in shadow, and someone visible through what appears to be a window or opening in the wall. The artwork employs heavy crosshatching and strong contrast between light and dark areas to create a somber, oppressive atmosphere. The style and subject matter suggest this might be a commentary on confinement or isolation. The technique appears to be done in pen or pencil, creating fine lines to build up the shadows and textures.

Created by claude-3-opus-20240229 on 2025-01-29

The image depicts a black and white sketch or etching of an interior scene. In the foreground, two figures are visible - one crouching or sitting on the ground, and the other standing and looking out a window or opening. The interior space has brick or stone walls and appears somewhat run-down or decrepit. Outside the window, more brick structures are visible, suggesting an urban or city environment. The sketch has a rough, hand-drawn quality with cross-hatched shading to convey depth and shadow.

Created by claude-3-haiku-20240307 on 2025-01-29

The image appears to be a black and white etching or drawing depicting a scene with several figures. In the foreground, there is a crouching or seated figure, in a distressed or pained posture. Behind this figure, there are two other figures, one standing and one appearing to be entering or exiting a doorway or alcove. The setting appears to be a dimly lit interior space with shadowy architectural elements in the background. The overall mood of the image is somber and evocative, suggesting a sense of psychological or emotional tension.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-16

The image is a black and white sketch of a scene depicting three individuals in a room. The sketch is rendered in a sketchy style, with bold lines and shading that gives it a dynamic and expressive quality.

In the foreground, there is a man sitting on a bench, his head resting in his hands. He appears to be in a state of deep contemplation or distress. To the right of the man, there is a woman standing, her back turned to him. She seems to be engaged in conversation with someone outside the frame, her body language suggesting a sense of urgency or concern.

In the background, there is a third figure, partially obscured by the woman. This figure appears to be a man, and he seems to be leaning against a wall or a pillar, his eyes fixed intently on the woman.

The overall atmosphere of the sketch is one of tension and drama, with the three figures seemingly caught up in a complex web of emotions and interactions. The sketch's use of bold lines and shading creates a sense of energy and movement, drawing the viewer's eye through the scene and inviting them to explore the relationships between the characters.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-16

The image is a black-and-white sketch of a scene with three people in a room. The room has a large window on the back wall, and the walls are made of stone or brick.

In the foreground, there is a man sitting on the floor with his head in his hands. He is wearing a loincloth and appears to be in distress. To the right of the man, there is another person standing in the doorway, but their face is not visible. In the background, through the window, there is a woman sitting on a ledge, looking out at the viewer.

The overall atmosphere of the image is one of sadness and despair. The man's posture and facial expression suggest that he is experiencing emotional pain, and the woman's gaze seems to be directed at him with concern or sympathy. The use of dark shading and bold lines adds to the somber mood of the image.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-29

The image is a monochromatic sketch of a scene, possibly created using charcoal or pencil. It depicts an indoor setting with a rectangular frame, giving the impression of a window or a doorway. The scene is set in a room with a high ceiling and a simple, unadorned interior. There are three people in the scene. Two of them are sitting on a bench, with one leaning against the other, and the other one is standing in the doorway.

Created by amazon.nova-lite-v1:0 on 2025-01-29

The image is a black-and-white drawing that depicts a scene inside a room. The room appears to be a prison cell, with a person sitting on a bench and another person standing in the corner. The person sitting on the bench is facing away from the viewer, with their head down and hands resting on their knees. The person standing in the corner is looking out of a window, with their back turned towards the viewer. The room is dimly lit, with shadows cast on the walls. The drawing has a simple and minimalist style, with no details or embellishments.