Machine Generated Data

Tags

Amazon

created on 2019-10-30

Clarifai

created on 2019-10-30

Imagga

created on 2019-10-30

| Tag | Confidence |
|---|---|
| cadaver | 71.6 |
| statue | 33.6 |
| sculpture | 32.6 |
| religion | 27.8 |
| ancient | 27.7 |
| stone | 23.7 |
| art | 23.5 |
| old | 22.3 |
| religious | 20.6 |
| culture | 20.5 |
| architecture | 20.3 |
| temple | 19.7 |
| god | 19.1 |
| carving | 17 |
| history | 17 |
| spiritual | 15.4 |
| ruler | 14.5 |
| holy | 14.4 |
| face | 14.2 |
| monument | 14 |
| close | 13.7 |
| money | 13.6 |
| figure | 13.2 |
| dollar | 13 |
| antique | 13 |
| cash | 12.8 |
| one | 11.9 |
| historic | 11.9 |
| currency | 11.7 |
| portrait | 11.7 |
| pray | 11.6 |
| historical | 11.3 |
| travel | 11.3 |
| head | 10.9 |
| man | 10.8 |
| bank | 10.7 |
| people | 10.6 |
| spirituality | 10.6 |
| bill | 10.5 |
| church | 10.2 |
| finance | 10.1 |
| grandfather | 10.1 |
| vintage | 9.9 |
| famous | 9.3 |
| male | 9.2 |
| banking | 9.2 |
| dollars | 8.7 |
| prayer | 8.7 |
| us | 8.7 |
| peace | 8.2 |
| building | 7.9 |
| paper | 7.8 |
| carved | 7.8 |
| museum | 7.8 |
| hundred | 7.7 |
| worship | 7.7 |
| heritage | 7.7 |
| pay | 7.7 |
| faith | 7.7 |
| capital | 7.6 |
| person | 7.6 |
| east | 7.5 |
| business | 7.3 |
| detail | 7.2 |
| wealth | 7.2 |
| hair | 7.1 |

Google

created on 2019-10-30

| Tag | Confidence |
|---|---|
| Painting | 92.5 |
| Art | 87.2 |
| Visual arts | 72.2 |
| Stock photography | 71.8 |
| Portrait | 66.6 |
| Prophet | 65.1 |
| Still life | 61.1 |
| Illustration | 60.2 |
| Gentleman | 59.6 |
| Self-portrait | 59 |
| History | 57.6 |
| Facial hair | 55.5 |

Color Analysis

Face analysis


AWS Rekognition

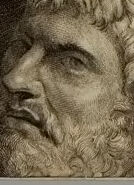

| Attribute | Value |
|---|---|
| Age | 34-50 |
| Gender | Male, 99.4% |
| Disgusted | 0% |
| Confused | 0% |
| Fear | 0% |
| Happy | 0% |
| Angry | 0.4% |
| Sad | 0.5% |
| Calm | 99% |
| Surprised | 0% |

AWS Rekognition

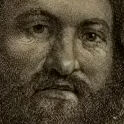

| Attribute | Value |
|---|---|
| Age | 32-48 |
| Gender | Male, 99.1% |
| Sad | 42.6% |
| Calm | 54% |
| Angry | 1% |
| Happy | 0.3% |
| Fear | 0.2% |
| Confused | 1.4% |
| Disgusted | 0.1% |
| Surprised | 0.4% |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 40 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 45 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 99.3% |

Captions

Microsoft

created on 2019-10-30

| Caption | Confidence |
|---|---|
| a person sitting on a book | 51.4% |
| a person sitting on top of a book | 50.7% |
| a person holding a book | 50.6% |