Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 32-48 |
| Gender | Male, 99.5% |
| Sad | 1.2% |
| Disgusted | 0% |
| Surprised | 0.1% |
| Happy | 0.1% |
| Angry | 0.6% |
| Fear | 0% |
| Confused | 0.1% |
| Calm | 97.9% |

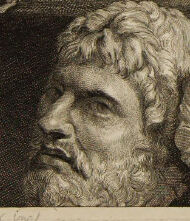

Feature analysis

Amazon

| Painting | 97.4% |

Categories

Imagga

| paintings art | 98% |

Captions

Microsoft

Created on 2019-11-16

| Caption | Confidence |
| --- | --- |
| a vintage photo of a man | 90.1% |
| a vintage photo of a man holding a book | 66.6% |
| an old photo of a man | 66.5% |

OpenAI GPT

Created by gpt-4 on 2025-02-13

This image is a monochromatic etching or engraving depicting an opulent scene from a historical or classical era. The individual in the foreground is clothed in a lavish, fur-lined robe that suggests high social status or wealth. The person's left hand rests on a table covered with a multitude of coins, some of which spill over the edge, implying abundant wealth or a transaction. On this table, there is also a luxurious fabric beneath the coins and a small animal figure to the left, lying on its back, possibly indicative of excess or indulgence. In the background, on the left side of the image, there are classical statues, two of which depict nude figures in dynamic poses, implying that the setting may be a space of culture or collection, such as a gallery or a personal trove of art and antiquities. The presence of these classical elements may also suggest the patronage or appreciation of fine arts by the individual or the setting's historical context, often associated with wealth and higher social echelons. The etching is finely detailed, and the play of light and shadow contributes to the depth and texture, enhancing the visual richness of the scene. A signature or a marking on the bottom right corner suggests an artist's proof, and another inscription, possibly a title or an attribution, is visible in the lower middle section of the print.

Created by gpt-4o-2024-05-13 on 2025-02-13

The image portrays an individual dressed in luxurious, heavy garments with fur trim, sitting at a table laden with small artifacts and coins. The background displays classical statues, emphasizing an atmosphere of art and antiquity. The person seems to be holding an object, possibly another artifact, indicative of the individual's role as a collector or connoisseur of art and historical pieces. The overall setting suggests a deep appreciation for classical and artistic treasures.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2025-02-13

The image appears to be a black and white engraving or etching depicting a bearded, older man sitting in a chair surrounded by various nude figures. The man has a serious, contemplative expression on his face and is dressed in a fur-trimmed robe or cloak. The nude figures in the background seem to be either allegorical or artistic representations, rather than specific individuals. The overall scene has a brooding, introspective quality to it.

Created by claude-3-opus-20240229 on 2025-02-13

The image depicts a seated man with a beard, wearing a fur-lined coat or robe. He is surrounded by various classical statues and sculptural fragments, including a nude female torso, a small cherub or putto figure, and what appears to be an animal skull or skeleton. The man has a pensive, thoughtful expression and is holding some kind of writing implement, suggesting he may be an artist, philosopher or scholar contemplating the artworks and objects around him. The overall scene has a somber, meditative atmosphere, rendered in shades of brown and gray. It seems to be an etching or engraving print based on the linework and tonal qualities.

Created by claude-3-5-sonnet-20241022 on 2025-02-13

This is a historical art print or engraving, appearing to be from the Renaissance period. It shows a portrait of a wealthy merchant or collector dressed in fine fur-trimmed robes, surrounded by various objects that suggest his interests and status. In the background, there are classical nude sculptures and figures. On the table before him are coins or medallions, and what appears to be a sculpted head or bust with a beard. The composition suggests this is someone of cultural and financial means, likely a patron of the arts. The artwork is rendered in a detailed monochromatic style, typical of engravings from this era. The overall tone is rich with dark shadows and fine details, demonstrating the skill of the engraver.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-15

The image depicts a black-and-white engraving of a man surrounded by various sculptures and coins. The man is seated at a table, wearing a dark coat with fur trim and holding a small object in his hand. He has long hair and a beard, and is looking directly at the viewer. In the background, there are several sculptures of nude figures, as well as a few coins scattered on the table. The overall atmosphere of the image is one of contemplation and study, suggesting that the man is a scholar or artist who is deeply engaged in his work. The engraving is signed by the artist, "G. W. F. Heine," and dated "1838." It is likely that this image was created as part of a larger series of engravings, possibly for a book or publication. The level of detail and craftsmanship evident in the engraving suggests that it was created by a skilled artist who was familiar with the techniques of engraving.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-15

The image depicts a sepia-toned print of a man surrounded by various sculptures and busts. The man is seated, wearing a dark robe with fur trim, and holds an object in his right hand. He has long hair and a beard. In the background, several sculptures are visible, including a statue of a woman, a bust of a man, and other figures. The overall atmosphere of the image suggests that it may be a portrait of an artist or collector, surrounded by their work or interests. The print itself appears to be old, with a yellowed border and some signs of wear and tear. It may be a reproduction of an original artwork, or a unique piece created by an artist. Overall, the image presents a fascinating glimpse into the world of art and culture, and invites the viewer to explore its many details and nuances.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-13

The image is a black-and-white drawing, likely an etching, of a man sitting at a table. The man is wearing a fur-lined coat and has a beard and mustache. He is holding a coin in his hand and looking at something in front of him. Behind him, there are two statues of naked women, one of whom is holding a book. There is also a statue of a man holding a child. The drawing is signed "C.V.F.F.F. ..."

Created by amazon.nova-pro-v1:0 on 2025-02-13

The image shows a portrait of a man, possibly a famous artist. He is sitting on a chair and looking to the left. He is wearing a coat with a fur collar. On the table in front of him are some objects, including a book and a coin. Behind him are statues of naked people, including a man with a beard and a woman. The image has a watermark in the bottom right corner.