Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 12-22 |
| Gender | Female, 99.4% |
| Calm | 99.2% |
| Fear | 0% |
| Angry | 0.1% |
| Disgusted | 0% |
| Sad | 0.1% |
| Happy | 0.5% |
| Surprised | 0% |
| Confused | 0% |

Feature analysis

AWS Rekognition

| Person | 98% |

Categories

Imagga

created on 2019-11-16

| paintings art | 85% |
| pets animals | 11.4% |
| people portraits | 3.5% |

Captions

Microsoft

created by unknown on 2019-11-16

| a vintage photo of a baby | 42.6% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-05-04

a photograph of a woman holding a baby in a circle

Created by general-english-image-caption-blip-2 on 2025-07-10

a woman holding two children in her arms

OpenAI GPT

Created by gpt-4 on 2025-02-13

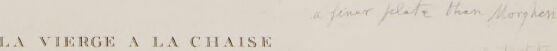

This is an image of an engraving that depicts a seated figure holding a child on their lap. The figure is wearing a long, flowing garment with intricate patterns and a head covering. The child appears to be sitting comfortably against the figure. The seated figure is also positioned beside a column or balustrade, indicating an interior setting. Toward the right side, there is another child, partially obscured, who is standing and appears to be interacting with the seated figure or the child on their lap.

The engraving sits within an oval frame and is presented on a rectangular sheet with inscriptions below. These inscriptions include the artwork's title "LA VIERGE À LA CHAISE," which translates to "The Virgin with the Chair," suggesting that the central figure is the Virgin Mary from Christian belief.

The rendering of the figures shows significant detail through fine lines and shading, showcasing the artist's skill in creating texture and depth. The name "Raffaello Sanzio" is mentioned in the engraving, which implies that the original artwork this engraving reproduces was created by Raphael, the renowned Italian Renaissance artist.

Created by gpt-4o-2024-05-13 on 2025-02-13

This image is a finely detailed engraving titled "La Vierge a LA Chaise," which means "The Virgin of the Chair." It depicts a seated figure in an intricately patterned garment holding an infant on their lap. The figure is adorned with a headscarf and robes, emphasizing the rich texture and folds of the fabric. To the right, another small figure can be seen, partially standing and holding what appears to be a cross. The engraving is beautifully rendered with a focus on intricate details and delicate shading, creating a sense of depth and realism. The image has a classical and serene composition, typical of Renaissance artwork.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2025-02-13

The image depicts a mother cradling her young child in her arms, with another child standing next to them. The mother appears to be wearing a traditional dress or costume and has a pensive, thoughtful expression on her face as she gazes down at the child she is holding. The children in the image have a peaceful, serene quality about them. The image conveys a sense of maternal care and affection.

Created by claude-3-opus-20240229 on 2025-02-13

The image depicts a detailed engraving or print of the Virgin Mary tenderly holding the infant Jesus on her lap, as a young John the Baptist looks on adoringly. Mary is wearing ornate robes and a headdress, while cradling the baby Jesus who is nestled against her. The artwork has a soft, gentle quality to it, capturing an intimate moment between the mother and child figures. The fine lines and shading create a sense of depth and realism in this religious portrait.

Created by claude-3-5-sonnet-20241022 on 2025-02-13

This is a classical art print titled "La Vierge a la Chaise" (The Madonna of the Chair), which appears to be an engraving or etching. The circular composition shows a tender maternal scene featuring a woman in Renaissance-style clothing with a head covering, holding a child. Another young figure appears in the background. The artwork includes fine detail work typical of the engraving medium, with careful attention to fabric textures and shading. There's an ornate chair or vessel visible in the scene. The print has a sophisticated use of line work to create depth and dimension, and the overall composition is contained within a perfect circle, known as a tondo format. The image caption and credits appear below the artwork in French.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-24

This is a classical engraving titled "La Vierge a la Chaise" (The Madonna of the Chair). It's a circular composition showing a tender maternal scene in a Renaissance style. The main figure wears an ornate headdress and patterned clothing, holding a child while another young figure looks on from the side. The artwork features fine line work characteristic of engraving techniques, with careful attention to the rendering of fabric textures and subtle shadows. There's a decorative urn or vessel visible in the composition. The overall tone is gentle and intimate, capturing a moment of maternal affection. The image appears to be a reproduction of a famous Renaissance painting, translated into the medium of engraving.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-17

The image is a black and white engraving of a woman holding a baby, with a child standing next to her. The woman is wearing a headscarf and a dress with a patterned design. She is holding the baby in her arms, who is looking at the viewer. The child standing next to her has their hand on the woman's shoulder and is looking at the viewer as well.

The background of the engraving is a circle with a striped border around it. The engraving is titled "La Vierge a la Chaise" which translates to "The Virgin on the Chair". The engraving appears to be a depiction of the Virgin Mary holding the baby Jesus, with a young child standing next to her. The engraving is likely a religious or devotional piece, and may have been created in the 19th century.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-17

The image is a black-and-white print of a woman holding a baby, with another child standing beside her. The woman is wearing a headscarf and a dress with a patterned bodice, and she has a serious expression on her face. She is holding the baby in her arms, and the baby is looking up at her with a curious expression. The other child is standing to the right of the woman, looking at the baby with a gentle expression. The background of the image is dark, which helps to highlight the figures in the foreground.

At the bottom of the image, there is text that reads "LA VIERGE A LA CHAISE" in small letters. This suggests that the image may be a representation of the Virgin Mary holding the baby Jesus, with another child (possibly Saint John the Baptist) standing beside her. The overall mood of the image is one of serenity and devotion, and it appears to be a religious or spiritual artwork.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-13

The image is a black-and-white print of a painting titled "La Vierge a la Chaise." The painting depicts a serene scene of a woman, likely Mary, sitting on a chair with a baby Jesus in her lap. The baby Jesus is looking up at the viewer while resting on Mary's lap. Another child, possibly John the Baptist, stands beside them, holding a cross and looking down. The image is framed by a decorative border with a textured pattern.

Created by amazon.nova-pro-v1:0 on 2025-02-13

The image is a black and white print of a painting. It features a woman holding a child. The woman is wearing a headscarf and a long dress. The child is sitting on her lap, and another child is standing next to her. The child is wearing a dress and holding a cross. The painting is titled "La Vierge a la Chaise" and is signed by the artist.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-01

Here's a description of the image:

Overall Impression:

The image is a black and white engraving or etching. It depicts a religious scene, likely of the Madonna and Child with other figures. The style is classical, with a strong emphasis on light and shadow to create volume and depth.

Composition:

- The central focus is on a Madonna figure holding the Christ child. They are positioned inside a circular frame.

- To the right of the child is another figure, likely a young John the Baptist, with a halo and small cross.

- The figures are closely grouped, creating a sense of intimacy and closeness.

- There is a partial view of a chair arm/back.

Details:

- Madonna: She is depicted as a young woman, wearing a head covering and adorned clothing. Her expression is gentle.

- Christ Child: He is shown as a chubby baby held in the mother's arms.

- John the Baptist: Has a halo and carries a small cross.

- Technique: The artwork is created using fine lines, probably engraved, to capture the form and texture of the figures and their drapery. The artists' technique demonstrates an understanding of form and light.

Additional Information (from OCR):

- Title: "LA VIERGE A LA CHAISE" (The Madonna of the Chair)

- Artists: The image states “Dessine par De Labrie”, “Grave à Magat par J.G. Muller”

- Additional Notes: It also mentions “a finer plate than Morghens” and is marked "first plate".

Overall, this appears to be a well-executed print of a religious composition. The style is classical, and the attention to detail is impressive.

Created by gemini-2.0-flash on 2025-05-01

Here is a description of the image:

The image is an antique monochromatic engraving of a classical painting. The artwork is contained within a large circle against a background of thin, horizontal lines.

At the center is a woman, likely the Virgin Mary, wearing a turban-like headpiece and patterned clothing. She is seated and cradling a plump baby, assumed to be the infant Jesus. The baby is looking out with a slightly worried expression. To the right of the baby, and partially obscured behind the woman, is a young boy holding a simple cross. The boy has a halo and is believed to be Saint John the Baptist.

The scene is intimate and conveys a sense of tender protection. The engraving captures the soft features and gentle gazes of the figures, which are characteristic of Renaissance art.

At the bottom of the print, there are inscriptions and writings in what appears to be French, identifying the artist and giving details about the artwork. Handwritten notes and annotations are present as well, suggesting the print may have been part of a collector's item or historical record.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-08

The image depicts a classic religious artwork titled "La Vierge à la Chaise" (The Virgin in the Chair). It is a detailed engraving that portrays the Virgin Mary seated and holding the infant Jesus in her lap. The composition is set within a circular frame.

Here are the detailed elements of the image:

Central Figures:

- Virgin Mary: She is shown seated, wearing a head covering and a detailed, flowing robe with floral patterns. She holds the infant Jesus close to her.

- Infant Jesus: The child is depicted as a plump baby, resting comfortably in Mary's arms. His gaze is directed outward, possibly towards the viewer.

- Young John the Baptist: To the right of Mary, a young boy, likely representing John the Baptist, is kneeling. He is holding a cross and appears to be in a reverent pose, gazing at the infant Jesus.

Background and Setting:

- The background is relatively plain, focusing attention on the central figures.

- There is a vase or decorative object placed on the left side of the image, adding to the classical aesthetic.

Inscriptions:

- At the bottom of the image, there are inscriptions in French. The title "LA VIERGE A LA CHAISE" is prominently displayed.

- Additional text indicates that the engraving was created by Gilles Rousselet based on a painting by Raphael.

- There are also other inscriptions that mention the publisher and the date, indicating the artwork's provenance and historical context.

Artistic Style:

- The engraving is highly detailed, showcasing the intricate work typical of classical engravings.

- The use of shading and line work creates depth and texture, giving the figures a three-dimensional quality.

Overall, the image is a beautifully rendered engraving that captures a serene and intimate moment between the Virgin Mary, the infant Jesus, and the young John the Baptist, reflecting the religious and artistic traditions of the period.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-20

This is a black and white etching titled "La Vierge à la Chaise," which translates to "The Virgin in a Chair." The image depicts a seated woman, likely representing the Virgin Mary, cradling a baby, presumably the infant Jesus, in her lap. To the right of the woman, there is another young child, possibly an angel, holding a small cross. The woman is dressed in a dark robe with a patterned scarf or shawl, and there is a chair or low bench partially visible on the left side of the composition.

The style of the image suggests it is a classical or Renaissance-inspired artwork, with attention to detail in the facial features, the clothing, and the overall composition. The etching technique is evident in the fine lines and shading used to create the image. The text at the bottom of the image indicates that it is a print after a work by Raphael, and it was engraved by M. Gilbert.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-20

This image is a detailed black-and-white engraving of a circular composition, depicting the Virgin Mary with the Christ Child and the infant Saint John the Baptist. Mary is seated on a chair, holding the Christ Child in her arms. The Christ Child is holding a bird, which is a traditional symbol in Christian iconography. Saint John the Baptist is standing to the right, holding a cross. The background is dark, which highlights the figures. The engraving is rich in texture and shading, creating a sense of depth and volume. The title "LA VIERGE A LA CHAISE" is written below the circular composition, indicating that this is a print of Raphael's famous painting "The Madonna of the Chair." The artist's signature and other details are also present at the bottom of the image.