Machine Generated Data

Tags

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 19-31 |
| Gender | Male, 54.2% |
| Sad | 46.2% |
| Happy | 45.1% |
| Surprised | 45.1% |
| Disgusted | 45.1% |
| Calm | 52.3% |
| Confused | 45.1% |
| Angry | 45.8% |
| Fear | 45.3% |

Feature analysis

Amazon

| Label | Confidence |
|---|---|
| Person | 95.8% |

Categories

Imagga

| Category | Confidence |
|---|---|
| streetview architecture | 86.8% |
| paintings art | 13.1% |

Captions

Microsoft

Created on 2019-11-07

| Caption | Confidence |
|---|---|
| an old photo of a person | 53.4% |
| old photo of a person | 49.2% |
| an old photo of a person in a room | 49.1% |

OpenAI GPT

Created by gpt-4 on 2025-02-03

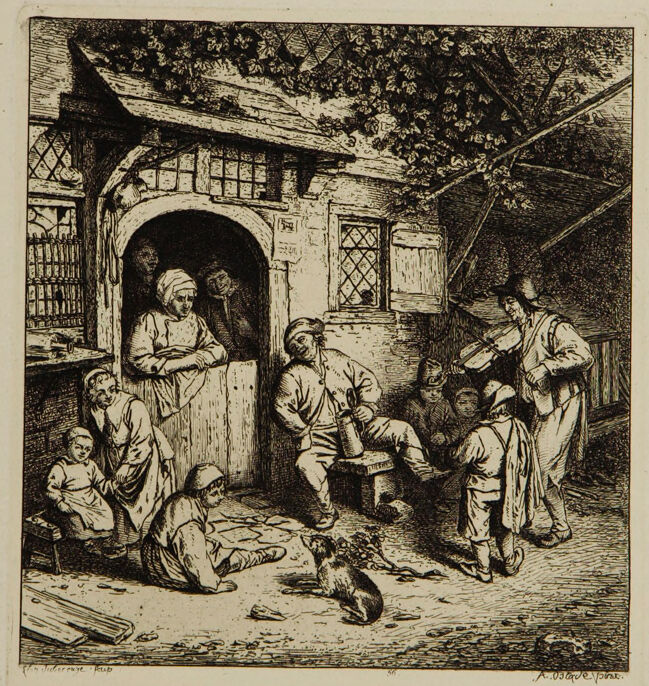

This image depicts an etching or engraving. It portrays a rustic courtyard scene with several figures in what appears to be a casual moment of daily life. On the left, an individual sits next to some wooden planks and tools, suggesting that some kind of labor or craft is under way. At the center, a group of individuals is gathered around a basin, with one person pouring liquid from a jug, possibly signifying a communal activity or a shared meal. To the right, an open doorway leads to a dark interior, and a figure stands beside it. Trees and foliage above suggest an outdoor, possibly rural setting. The rendering is highly detailed and textured, as is typical of etching, which gives the scene a sense of depth and realism. The artwork is mounted on a white background with ample margins around the etching.

Created by gpt-4o-2024-05-13 on 2025-02-03

This is a detailed etching depicting a rural scene with several figures outside a rustic building. The setting appears to be an inn or a cottage with a thatched or gabled roof and diamond-paned windows. A variety of activities are taking place: one figure stands in a doorway, others are seated or standing in conversation, and one person is tending to a child. In the foreground, a dog is present, adding to the casual, daily-life atmosphere of the scene. The overall composition is rich with texture and intricate architectural details, contributing to the sense of a lively social gathering.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2025-02-03

This is a black-and-white etching or engraving that appears to depict a rustic scene outside a cottage or tavern. The artwork shows several figures gathered around the entrance of a building with an arched doorway and diamond-paned windows. The building has a somewhat weathered, rustic appearance, with vines or foliage growing over the top. The people wear peasant or working-class clothing from what appears to be the 17th or 18th century. Some are seated while others are standing, suggesting an informal gathering or social scene. There is a small cat or dog in the foreground of the image. The artistic style shows the fine detail and crosshatching typical of period etchings, with careful attention paid to the textures of the building's stonework, the wooden door, and the surrounding environment. The composition creates a sense of depth and atmosphere through the use of light and shadow. The overall mood of the piece feels intimate and true to the daily life of the period, capturing what appears to be a casual moment of social interaction among common people.

Created by claude-3-opus-20240229 on 2025-02-03

The image depicts a courtyard scene from the past, rendered in a detailed etching or engraving style. Several figures are gathered in the courtyard and appear to be a family or household. The central adult figure seems to be telling a story or giving instructions to the children sitting and standing nearby. The architecture shows wooden beams and ornate doorways and windows typical of an old European-style home or inn. Vines grow across the roof, adding to the rustic charm of the setting. The entire scene has an intimate, storytelling atmosphere, providing a glimpse into domestic life from a bygone era.

Created by claude-3-haiku-20240307 on 2025-02-03

The image appears to be an engraved illustration or print depicting a rural or village scene. It shows a group of people gathered outside an old, rustic-looking building with a thatched roof and wooden beams. The people are engaged in various activities, including what appears to be a social gathering of some kind. The scene has a sense of activity and community, with people of different ages and roles present. The overall style and composition suggest this is a historical or traditional depiction of a village setting.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-13

The image depicts a vintage black-and-white illustration of a group of people gathered in front of a building, likely a tavern or inn. The scene is set in a rural or village setting, with a dirt road leading up to the entrance of the building. A man sits on a bench outside the door, playing a stringed instrument, while several others sit or stand around him, engaged in conversation or watching the musician. A dog lies on the ground nearby. The building has a wooden facade with a large arched doorway and a smaller door to the right. A window above the doorway features a decorative lattice pattern. The illustration is rendered in a detailed, realistic style, with intricate textures and shading that give the scene a sense of depth and atmosphere. The overall mood of the image is one of warmth and conviviality, suggesting a lively gathering of friends and neighbors enjoying each other's company on a pleasant evening.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-13

The image depicts a black-and-white illustration of a group of people gathered outside a building, with the title "The Fiddler" written in small letters at the bottom right corner. The scene is set in front of a stone building with a large arched doorway and a window on either side. A man sits on a bench playing a fiddle, while others sit or stand around him, some looking at him and others engaged in their own activities. In the foreground, a dog lies on the ground, and a few objects are scattered about, including what appears to be a piece of wood or a tool. The overall atmosphere of the scene is one of casual gathering and socializing, with the fiddler providing entertainment for the group. The illustration style is detailed and realistic, with intricate lines and shading that give the scene a sense of depth and texture. The use of black and white adds a sense of simplicity and timelessness to the image, making it feel like a snapshot from another era.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-02-03

The image is a black-and-white etching depicting a scene of people gathered outside a rustic building. The etching is framed with a thin white border. The building appears to be a small, old-fashioned shop or house with a thatched roof and a wooden door. There are several people in the scene, engaged in various activities. In the foreground, there are two children sitting on the ground, one of whom is holding a small dog. Next to them, a man is seated on a stool, holding a jug and looking downward, possibly deep in thought or engaged in conversation. In the middle ground, a woman stands in the doorway of the building, leaning against the frame and observing the scene. She appears to be engaged in conversation with another person, possibly a customer or a passerby. To the right of the woman, another man stands, holding a bundle or package, and appears to be either entering or exiting the building. He is dressed in traditional attire, suggesting a historical or rural setting. In the background, there is a tree with branches hanging over the building, adding a natural element to the scene. The overall atmosphere of the etching is one of quiet, everyday life, with people engaged in simple, routine activities. The etching style gives the image a timeless quality, evoking a sense of nostalgia or historical charm.

Created by amazon.nova-lite-v1:0 on 2025-02-03

This image is an etching titled "The Village Tavern" by the Dutch artist Adriaen van Ostade. It depicts a scene of villagers gathered around a tavern, with a man sitting on a bench, a woman standing in the doorway, and a man leaning against a tree. The tavern is surrounded by trees and has a thatched roof. The etching is in black and white and has a vintage look.