Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 12-22 |
| Gender | Female, 92.9% |
| Sad | 1.8% |
| Confused | 0.2% |
| Disgusted | 0% |
| Happy | 0.1% |
| Fear | 0% |
| Calm | 97.8% |
| Surprised | 0% |
| Angry | 0.1% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Painting | 97.2% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 99.5% |

Captions

Microsoft

Created on 2020-04-24

| Caption | Confidence |
| --- | --- |
| a group of people looking at a book | 62.6% |
| a group of people sitting on a bench | 62.5% |
| a group of people sitting on a book | 54.8% |

OpenAI GPT

Created by gpt-4 on 2024-12-04

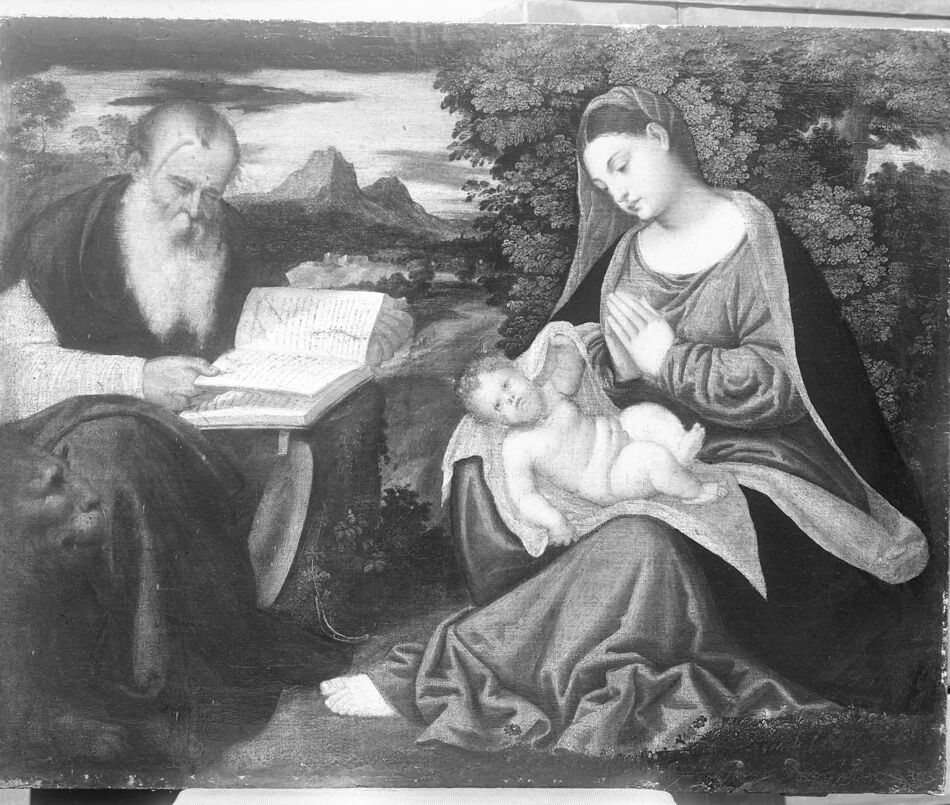

The image appears to be a black and white photograph of a painting. On the left side, there is a bearded figure in historical clothing, sitting with an open book on his lap, deeply engaged in reading. The person's gaze is fixed on the pages of the book, suggesting concentration or study. The setting includes a natural landscape background with trees, a calm body of water in the middle distance, and a mountainous region far back. The right side of the painting presents an individual wearing draped clothing, suggesting a figure from a historical or religious context. This figure is sitting with a calm posture and seems to be interacting tenderly with an object in their lap, which is not detailed due to the blurring. Overall, the artwork has a serene atmosphere and is characteristic of classical religious scenes, often found in historical artworks. The attention to the drapery of the clothing and the portrayal of the natural elements in the landscape indicate a skilled hand, possibly from the Renaissance or a similar period that appreciated detailed representation in art.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-29

This appears to be a classical religious painting depicting a scene with three figures in an outdoor setting. On the left, there's an elderly figure with a long white beard reading from what appears to be a holy book or scripture. On the right, there's a woman in flowing robes holding an infant. The scene is set against a landscape background with mountains and trees. The composition and style suggests this is likely a Renaissance-era religious artwork, painted in the traditional black and white or monochromatic style common in historical paintings.

Created by claude-3-haiku-20240307 on 2024-11-27

The image depicts a religious or biblical scene. It shows an older man, who appears to be a prophet or wise figure, intently reading from a large book. Next to him is a woman, likely the Virgin Mary, who is holding a sleeping infant, presumably the baby Jesus. The scene takes place in a pastoral landscape with mountains and trees in the background. The overall tone of the image is somber and contemplative, capturing a moment of reverence and spiritual contemplation.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-29

The image is a black-and-white photograph of a painting depicting the Holy Family. The painting features Mary, Joseph, and the infant Jesus in a serene outdoor setting. Mary is seated on the right side of the painting, wearing a long dress and a head covering. She is holding the infant Jesus in her lap, with her hands clasped together in prayer. Joseph is seated on the left side of the painting, wearing a long robe and a head covering. He is reading from an open book, which rests on a small table or pedestal in front of him. In the background, there are trees and mountains visible, suggesting a peaceful and natural setting. The overall atmosphere of the painting is one of tranquility and devotion, capturing a moment of intimacy and reverence between the Holy Family. The photograph itself appears to be an old print, with visible signs of aging and wear. The edges of the print are rough and torn, and there are scratches and marks on the surface. Despite its age, the photograph still conveys the beauty and significance of the original painting, inviting the viewer to contemplate the sacred scene it depicts.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-24

The image depicts a painting of the Holy Family, featuring Mary, Joseph, and Jesus. Mary is seated on the right side of the painting, wearing a long robe and holding the baby Jesus in her lap. Joseph is seated on the left side of the painting, wearing a dark robe and holding an open book. The baby Jesus is naked and lying on a cloth. The background of the painting features a landscape with trees, mountains, and a body of water. The overall atmosphere of the painting is one of serenity and devotion, capturing a moment of quiet contemplation and prayer.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-05

The image depicts a painting of the Holy Family, featuring St. Joseph, the Virgin Mary, and the infant Jesus. St. Joseph is seated on the left side of the painting, holding an open book and gazing at it. The Virgin Mary is seated on the right side, holding the infant Jesus in her lap. The infant Jesus is looking towards St. Joseph, and the Virgin Mary is looking towards the infant Jesus. The painting is set in a landscape with trees and mountains in the background.

Created by amazon.nova-pro-v1:0 on 2025-01-05

The image is a black-and-white photograph of a painting. The painting depicts a man and a woman sitting on the ground. The man, who is on the left side, is an old man with a white beard. He is holding a book in his hands and seems to be reading it. The woman, who is on the right side, is a young woman with a veil. She is holding a baby and seems to be praying.