Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 25-39 |
| Gender | Female, 50.6% |
| Sad | 27% |
| Surprised | 0.2% |
| Fear | 0.1% |
| Happy | 0.4% |
| Angry | 0.4% |
| Calm | 71.4% |
| Disgusted | 0.1% |
| Confused | 0.4% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 97.9% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| streetview architecture | 100% |

Captions

Microsoft

Created on 2020-04-24

| Caption | Confidence |
| --- | --- |
| a vintage photo of an old building | 50.6% |

OpenAI GPT

Created by gpt-4 on 2024-12-04

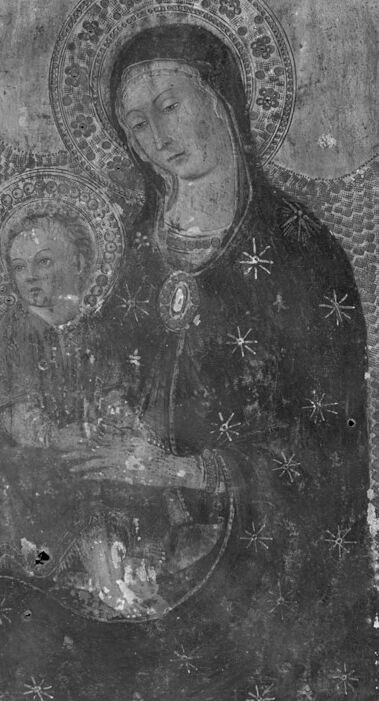

The image shows a black and white picture of what appears to be an icon or a religious painting, characterized by distinct ornamentation and decorative elements that are common in such artifacts. The borders of the artwork are intricately patterned with geometric designs and possibly inscriptions or motifs. The central figure is depicted wearing garments with stars, indicating a celestial or sacred theme, often associated with representations of holy figures in religious iconography. The overall appearance suggests that the work might be old, given the visible damage and weathering, which could be due to age, handling, or environmental factors. The photograph itself seems to have captured the texture and condition of the artwork, emphasizing its historical and artistic value.

Anthropic Claude

Created by claude-3-5-sonnet-20241022 on 2024-12-29

This appears to be a medieval religious artwork, specifically a painting that appears to be Madonna and Child. The painting shows two figures with halos (depicted as circular rings around their heads) against a dark background. The larger figure wears a dark garment decorated with star-like patterns, while the smaller figure is shown in close proximity. The artwork has an ornate border pattern and shows signs of age, with some wear and deterioration visible in the image. The style is characteristic of medieval Christian iconography, with the flat, two-dimensional representation typical of that period's artistic conventions. The black and white photograph appears to be documenting this historical artwork, possibly for archival or study purposes.

Created by claude-3-haiku-20240307 on 2024-11-27

The image appears to be a black and white photograph of an ornately decorated religious icon or painting. It depicts the Virgin Mary holding the infant Jesus Christ, both surrounded by intricate patterns and designs. The Virgin Mary is shown with a halo or nimbus around her head, and the infant Jesus also has a halo. The overall composition and style of the artwork suggests it is an example of religious iconography or devotional artwork from a historical period.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-30

The image depicts a black-and-white photograph of an old, worn painting of the Virgin Mary holding the infant Jesus. The painting is rectangular in shape and features a border around the edges, which is decorated with intricate designs and patterns. The border is faded and worn, suggesting that the painting is quite old. In the center of the painting, the Virgin Mary is depicted wearing a dark robe with a halo around her head. She is holding the infant Jesus in her arms, who is also wearing a halo. The Virgin Mary's face is serene and gentle, while the infant Jesus looks up at her with a curious expression. The background of the painting is a light color, which provides a subtle contrast to the darker tones of the Virgin Mary's robe. The overall effect of the painting is one of reverence and devotion, as if the artist intended to convey a sense of awe and wonder at the sacred scene being depicted. The photograph itself appears to be quite old, with visible signs of wear and tear. The edges of the photo are rough and torn, and there are several scratches and marks on the surface. Despite its age, the photograph remains a powerful and evocative image that captures the essence of the original painting.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-25

The image depicts a black and white photograph of an old painting of the Virgin Mary holding the infant Jesus. The painting is on a rectangular canvas, with a border around it that appears to be made of a woven material, possibly linen or cotton. The border is decorated with intricate patterns and designs, including flowers, leaves, and geometric shapes. The Virgin Mary is depicted wearing a long, dark robe with a hood over her head. She is holding the infant Jesus in her arms, who is dressed in a simple tunic. The background of the painting is a light-colored wall or surface, which appears to be made of stone or plaster. The overall effect of the painting is one of serenity and devotion. The Virgin Mary's gentle expression and the infant Jesus' peaceful demeanor convey a sense of calm and tranquility. The intricate details of the border and the Virgin Mary's robes add to the sense of luxury and opulence, suggesting that this painting may have been created for a wealthy patron or for display in a place of worship. It's worth noting that the painting appears to be quite old, with visible signs of wear and tear. The surface is cracked and faded in places, and there are small holes and tears in the canvas. Despite these imperfections, the painting remains a beautiful and moving work of art that continues to inspire devotion and admiration.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-05

The image is a black-and-white photograph of a religious painting. The painting depicts a woman, presumably the Virgin Mary, holding a child, presumably Jesus Christ, in her arms. The woman is wearing a long, flowing robe, and the child is wearing a robe as well. The painting is surrounded by a decorative border, which features intricate patterns and designs. The border is also adorned with stars, which add to the overall aesthetic of the painting.

Created by amazon.nova-lite-v1:0 on 2025-01-05

The image is a black and white painting of the Virgin Mary and her child Jesus. The painting is framed with a decorative border, and the background is a dark gray color. The Virgin Mary is depicted holding the child Jesus in her arms, and both of them are looking towards the right side of the image. The child Jesus is wearing a white robe, and the Virgin Mary is wearing a black robe with a white veil covering her head. The painting appears to be old and worn out, with some scratches and damage visible on the surface.