Machine Generated Data

Face analysis

Amazon

Imagga

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 18-24 |
| Gender | Female, 98.1% |
| Sad | 99.8% |
| Fear | 10.9% |
| Surprised | 8.1% |
| Happy | 5% |
| Calm | 5% |
| Angry | 2.1% |
| Confused | 2% |
| Disgusted | 1.4% |

Feature analysis

Amazon

| Feature | Confidence |
| --- | --- |
| Birthday Cake | 73% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| food drinks | 90% |
| paintings art | 9.8% |

Captions

Microsoft

Created by unknown on 2022-06-18

| Caption | Confidence |
| --- | --- |
| a vase sitting on a table | 61.3% |
| a vase sitting on top of a table | 59% |
| a vase sitting on a counter | 53.8% |

Clarifai

Created by general-english-image-caption-blip on 2025-04-29

| Caption | Confidence |
| --- | --- |
| a photograph of a vase with a cupidus on it | -100% |

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-26

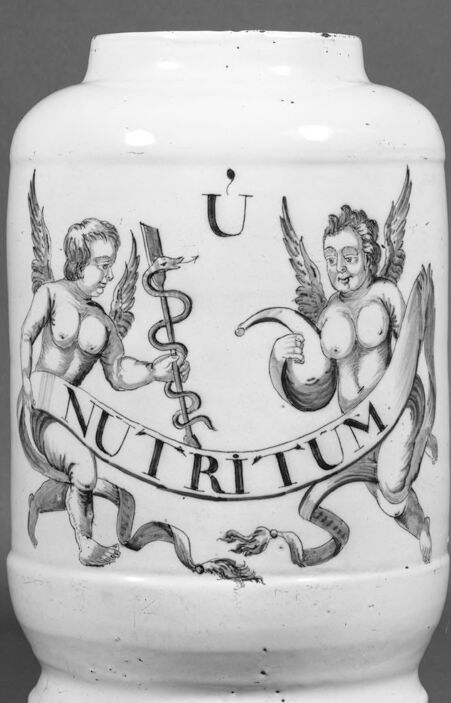

The image depicts a white ceramic vase with a wide mouth and a narrow neck, featuring an illustration of two cherubs. The cherubs are positioned on either side of a banner that reads "NUTRITUM" in black letters. One cherub holds a staff adorned with a snake, while the other grasps a horn. Above the banner, the letter "U" is visible.

The vase is set against a gray background, suggesting that it may be part of a museum or art collection. The overall design and style of the vase and its illustration evoke a sense of classicism and elegance, reminiscent of ancient Greek or Roman art.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-26

The image depicts a white ceramic jar with a wide base and narrow neck, adorned with an illustration of two winged figures holding a staff and a ribbon. The staff features a snake wrapped around it, while the ribbon bears the inscription "NUTRITUM" in bold letters. The background of the image is a plain gray color, suggesting that it may be a product photo or an archival image.

- The jar itself appears to be made of ceramic material, with a smooth and glossy finish.

- The illustration on the jar is rendered in a detailed and intricate style, with the two figures depicted in a dynamic pose.

- The staff and ribbon are also illustrated in a detailed manner, with the snake wrapped around the staff adding a sense of movement and energy to the scene.

- The inscription "NUTRITUM" on the ribbon suggests that the jar may have been used to store food or other edible items.

- The gray background of the image provides a neutral and unobtrusive setting for the jar, allowing the viewer to focus on the details of the illustration and the jar itself.

Overall, the image presents a visually appealing and informative depiction of a ceramic jar with an interesting illustration. The level of detail in the illustration and the clarity of the image suggest that it may be a high-quality product photo or an archival image.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-26

The image shows a ceramic container with a cylindrical shape and a flat bottom. The container has a white color and is decorated with a black and white design. The design features two winged figures holding a banner with the word "NUTRITUM" written on it. The winged figures are depicted as angels, with their wings spread out behind them. The container appears to be a vintage piece, possibly from the early 20th century.

Created by amazon.nova-lite-v1:0 on 2025-05-26

The image features a vintage ceramic jar with a monochromatic design. The jar has a cylindrical shape with a rounded base and a slightly flared top. The design on the jar is an intricate black-and-white illustration. It depicts two winged cherubs, each holding a staff entwined with a serpent, symbolizing medical knowledge and healing. The cherubs are positioned on either side of a banner that reads "NUTRITIUM" in bold, uppercase letters. The banner is held by the cherubs, suggesting a connection between nourishment and health. The jar appears to be made of ceramic, with a smooth, glossy finish. The image is presented in black and white, enhancing the contrast and details of the design.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-22

The image shows a white, cylindrical apothecary jar. The jar is decorated with a black and white illustration. Two winged cherubs flank a central element. The cherub on the left holds a staff with a serpent coiled around it. The cherub on the right holds what appears to be a ribbon. A banner with the word "NUTRITUM" is draped between the cherubs. At the top of the jar, there is a small letter "U" with a dot above it. The jar has a slightly wider base and a flared rim. The overall style of the jar suggests it could be quite old, possibly from the 18th or 19th century.

Created by gemini-2.0-flash on 2025-05-22

The image is a black-and-white photograph of an antique ceramic jar. The jar is cylindrical with a wide mouth and a flared base. Its surface is white and features a painted design of two winged cherubs.

The cherub on the left is depicted holding a staff intertwined with a snake, a symbol often associated with medicine. The cherub on the right holds a curved, unidentified object. A banner with the word "NUTRITUM" is draped between the two cherubs. Above the design is a symbol that resembles the letter "U" with a dot or small mark above it.

The photograph appears to have been taken in a studio setting, with a neutral background. The jar is positioned in the center of the frame, and the lighting emphasizes the details of the painted design.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-27

The image depicts a ceramic jar with intricate decorations. The jar is cylindrical with a slightly tapered top and a flat base. The decorations include two cherubic figures, one on each side, holding a banner that reads "NUTRITUM." The cherubs are depicted as winged, childlike figures, often symbolizing innocence and purity.

Between the cherubs, there is a caduceus, a symbol associated with medicine and commerce, consisting of a winged staff with two serpents winding around it. Above the caduceus, there is a letter "U" with an accent mark. The entire design is rendered in a monochromatic style, likely in black or dark gray, against the white background of the jar. The jar appears to be made of ceramic or porcelain, and the overall style suggests it might be an antique or vintage item, possibly used for pharmaceutical or medicinal purposes.