Machine Generated Data

Tags

Color Analysis

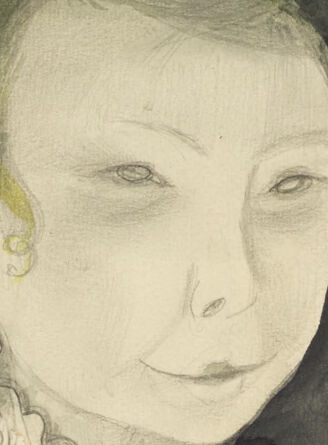

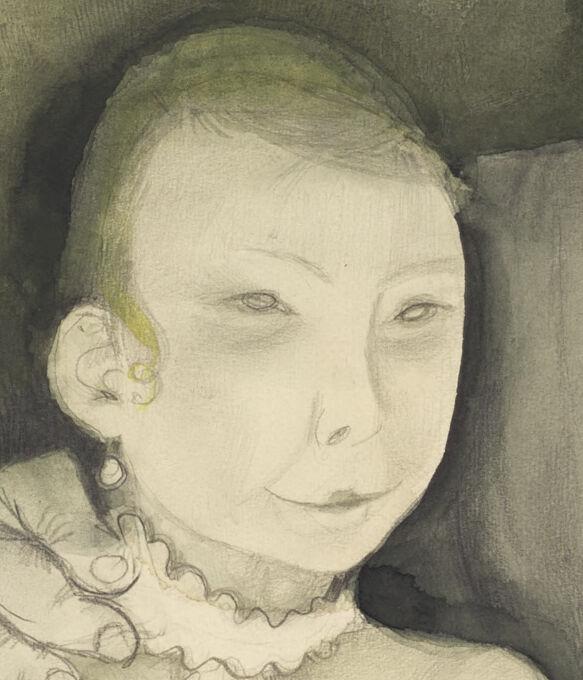

Face analysis


AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 16-27 |
| Gender | Male, 53.2% |
| Happy | 1.4% |
| Angry | 5.3% |
| Calm | 64% |
| Confused | 5.3% |
| Disgusted | 14.4% |
| Sad | 6.2% |
| Surprised | 3.4% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-44 |
| Gender | Female, 87.1% |
| Happy | 11.2% |
| Angry | 7.3% |
| Disgusted | 10.6% |
| Sad | 9.5% |
| Surprised | 11.4% |
| Confused | 14.1% |
| Calm | 35.9% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 22 |
| Gender | Female |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 92.4% |
| pets animals | 4.2% |
| people portraits | 2.6% |
| interior objects | 0.3% |
| beaches seaside | 0.2% |
| streetview architecture | 0.2% |
| food drinks | 0.1% |

Captions

Microsoft

Created on 2018-02-10

| Caption | Confidence |
| --- | --- |
| a vintage photo of a group of people posing for the camera | 96.1% |
| a vintage photo of a group of people posing for a picture | 96% |
| a group of people posing for a photo | 95.9% |

Azure OpenAI

Created by gpt-4 on 2024-11-17

This is a monochromatic artwork that appears to be rendered in watercolor or a similar wash technique. It depicts two seated figures, one seems to dress in a formal outfit including a suit and tie while the other appears to wear a dress with lace details on the cuffs and neckline. They are seated on what looks like an upholstered couch with a tufted design. The background is mostly plain with uneven washes of color, suggesting an undefined space. In the lower right corner, there is a signature that reads "H. Mammen."

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-11-17

The image appears to be a black and white drawing or painting depicting two figures. One figure is a younger woman with a serious expression, wearing a high-collared blouse and jewelry. The other figure is an older man with glasses, also wearing a suit. The two figures are seated together in a somber, reflective pose. The background appears to be hazy and dreamlike, with a suggestion of a mountainous landscape in the distance.

Meta Llama

Created on 2024-11-29

The image depicts a portrait of a man and a woman, possibly a couple, with the woman sitting and the man standing behind her. The man is dressed in a suit and tie, while the woman wears a dress with a ruffled collar. The background of the painting is a muted green color, which provides a subtle contrast to the subjects' attire. The overall atmosphere of the painting is one of intimacy and quiet contemplation, as if the couple is lost in thought or engaged in a private conversation. The artist's use of muted colors and gentle brushstrokes creates a sense of calmness and serenity, drawing the viewer into the quiet moment captured in the painting.