Machine-Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 42-60 |
| Gender | Male, 50.3% |
| Fear | 49.5% |
| Surprised | 49.5% |
| Sad | 49.5% |
| Confused | 49.5% |
| Calm | 50.3% |
| Happy | 49.5% |
| Angry | 49.6% |
| Disgusted | 49.5% |

Feature analysis

Amazon

Clarifai

AWS Rekognition

| Feature | Confidence |
|---|---|
| Person | 78.7% |

Categories

Imagga

Created on 2020-04-25

| Category | Confidence |
|---|---|
| paintings art | 99.3% |

Captions

Microsoft

Created by unknown on 2020-04-25

| Caption | Confidence |
|---|---|
| a close up of an old building | 46.4% |
| a close up of a building | 46.3% |
| close up of an old building | 38.7% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-05-12

a photograph of a drawing of a group of people in a boat

Created by general-english-image-caption-blip-2 on 2025-06-29

a drawing of a group of people in a boat

OpenAI GPT

Created by gpt-4 on 2024-12-14

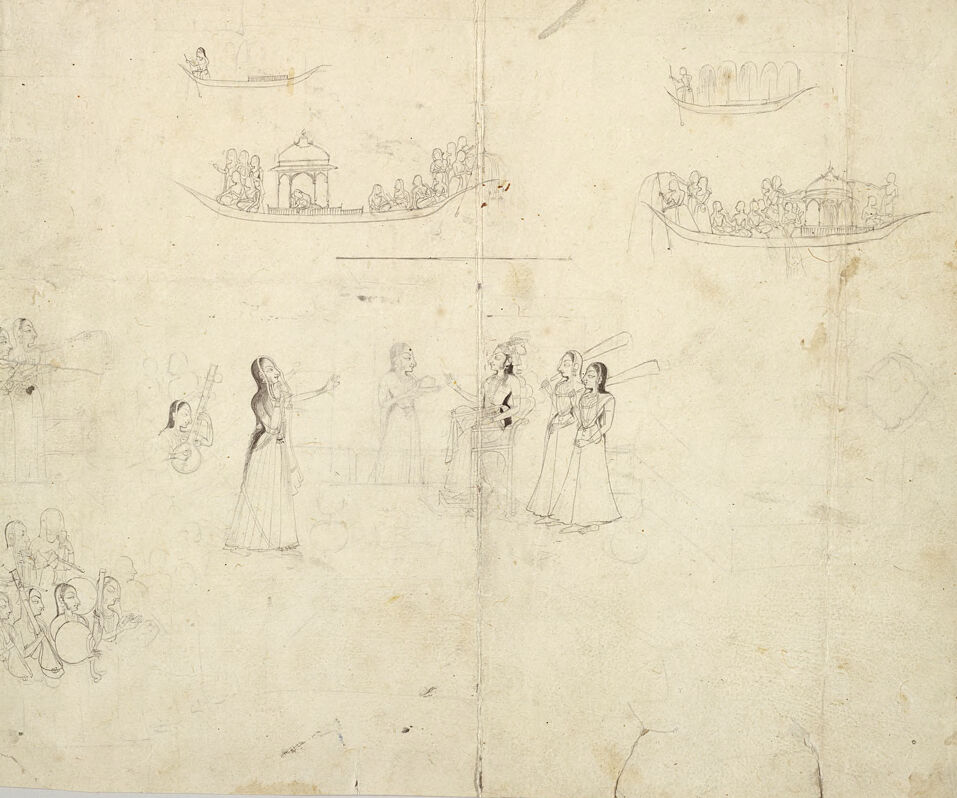

This is an old, faded pencil or pen sketch on what appears to be a worn piece of paper. The image is divided into two scenes:

The top half of the image depicts a series of boats adorned with figures. From left to right:

- The first boat contains a single figure rowing.

- The second boat is larger and more elaborate with a small structure or pavilion on it, containing several figures inside and one standing at the prow.

- The third boat looks to be a simple canoe with one figure rowing and another seated at the back.

- The fourth boat is ornate and features a covered area with several figures gathered underneath.

The bottom half of the image presents a group of individuals engaged in what looks like a theatrical or musical performance. On the left, there is a seated group of four figures, seemingly an audience, facing what may be performers. In the middle, a prominent figure with outstretched arms is flanked by two other individuals, one of whom appears to be playing a stringed instrument. On the right, two figures are interacting with each other, possibly part of the same scene as the ones in the middle.

The paper has a central vertical fold line, indicating it may have been folded in the past, and it shows signs of age with various creases and discoloration. The drawing style is suggestive of a draft or preliminary composition, possibly for a larger, more finished work.

Created by gpt-4o-2024-05-13 on 2024-12-14

This image is a sketch featuring several scenes and figures drawn in a delicate, outline style. The central part depicts a group of people, including several women standing and seemingly engaging in conversation or performing. One woman appears to be singing or holding an object up toward the others.

In the upper portion of the sketch, there are boats filled with figures, possibly suggesting a procession or a ceremonial event on the water. Each boat is depicted intricately, with many passengers and detailed canopies.

There are also additional groups of people sketched towards the left and lower parts of the paper, seemingly observing or participating in the various activities.

The sketch is on aged paper, showing signs of wear and tear, including folds, stains, and slightly torn edges. Overall, this sketch appears to be an illustrative depiction of a communal or celebratory event, possibly in a historical or cultural context.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-24

This appears to be a historical sketch or drawing on aged paper. The image shows several boats or gondolas at the top of the composition, each carrying multiple figures and featuring decorative canopies or structures. Below these boats, there are several figures sketched in what appears to be period dress, possibly from the medieval or Renaissance era, given their long robes and clothing styles. The drawing is done in a simple line style, and the paper has a yellowed, aged appearance typical of old documents. The sketches appear to be preliminary or study drawings, with some figures more detailed than others. The overall composition suggests this might be a study for a larger work or documentation of a historical scene or event.

Created by claude-3-haiku-20240307 on 2024-12-14

The image appears to be a sketch or drawing depicting various scenes from what looks like a traditional Indian or South Asian cultural or religious event. The main section in the center shows a group of people, possibly women, gathered together. In the background, there are several small boat-like structures with figures inside, suggesting a river or body of water setting. The overall style and imagery suggest this is a detailed illustration of a cultural or religious festival or ceremony.

Created by claude-3-5-sonnet-20241022 on 2024-12-14

This appears to be a historical sketch or drawing, likely from South Asia, showing multiple scenes. At the top of the image, there are several boats drawn with covered structures (possibly canopies or pavilions) and multiple figures seated in them. In the middle and lower portions of the drawing, there are figures shown in traditional dress, wearing what appear to be long robes or gowns. The style seems to be from the Indian miniature painting tradition, though this is a preliminary sketch or line drawing rather than a finished painting. The scene might be depicting some kind of social gathering or ceremonial event. The drawing is done in simple lines on what appears to be aged paper, with some slight discoloration and wear visible on the surface.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-14

The image is a sketch of a scene with people in boats and on land, possibly depicting a festival or celebration.

- The sketch is drawn on a piece of paper that appears to be old and worn.

- The paper has a yellowish-brown color and is torn at the edges.

- There are several creases and folds in the paper, suggesting that it may have been folded or rolled up at some point.

- The sketch itself is quite detailed, with many figures and objects depicted.

- There are several people in boats, some of whom are rowing or sailing.

- There are also people on land, standing or sitting in groups.

- Some of the figures are holding instruments or other objects, such as a guitar or a drum.

- The scene appears to be set in a natural environment, with trees and hills visible in the background.

- The style of the sketch is reminiscent of traditional Indian art, with intricate lines and patterns.

- The figures are depicted in a stylized manner, with exaggerated features and poses.

- The background is also stylized, with bold lines and shapes used to create a sense of depth and texture.

- Overall, the sketch appears to be a charming and evocative depiction of a festive scene.

- It suggests a sense of joy and celebration, with people coming together to enjoy music and company.

- The sketch may be a representation of a specific cultural or religious tradition, but it is also a universal image that could be interpreted in many different ways.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-14

This image presents a sketch of a scene featuring women in traditional Indian attire, likely from the 18th or 19th century. The sketch is rendered in pencil on aged paper, with visible signs of wear and tear, including tears and discoloration.

The central focus of the sketch is a group of women gathered around a seated figure, possibly a musician or storyteller. One woman stands out, holding a musical instrument, while another appears to be engaged in conversation with the seated individual. The surrounding women are depicted in various poses, some standing and others sitting, all dressed in traditional Indian clothing.

The background of the sketch features several boats, each carrying people, which adds a sense of movement and activity to the scene. The overall atmosphere of the sketch is one of serenity and tranquility, capturing a moment in time from a bygone era.

The sketch's style and technique suggest that it may have been created by an artist from the Mughal or Rajput school of Indian art, known for their intricate and detailed depictions of everyday life and mythology. The use of pencil as the medium allows for a level of subtlety and nuance in the rendering of the figures and their surroundings.

Overall, this sketch provides a fascinating glimpse into the cultural and artistic traditions of India during the 18th or 19th century, and its delicate and intricate details make it a valuable addition to any collection of Indian art.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-13

The image is a sketch on a piece of paper. The sketch shows a group of people on a boat. Some people are standing, and some are sitting. There is a man standing in front of the group, and a woman is standing behind him. A few people are sitting on the boat, and a few are standing. There are some musical instruments beside the woman.

Created by amazon.nova-lite-v1:0 on 2025-01-13

The image is a sketch or drawing that appears to be on an aged, textured paper. The sketch is a monochromatic representation with various figures and scenes. The sketch is divided into three sections, with two sections on the top and one section at the bottom. The top sections depict people in boats, while the bottom section shows a group of people standing, possibly performing a play or a ritual. The boats in the top sections are detailed with people sitting and standing, suggesting a river scene. The figures in the bottom section are drawn in a more detailed manner, showing their clothing and facial expressions. The sketch is a blend of different scenes, possibly representing a narrative or a cultural event.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-13

The image appears to be a pencil sketch of a cultural scene, possibly from South Asia. It depicts various elements:

- Boats: There are several boats shown, some with what appear to be canopies or structures, and figures aboard. These boats could be river or lake boats, which is typical of the context.

- Figures: There are numerous figures in the scene. Some are performing or interacting with each other. Some wear traditional clothing, suggesting a cultural setting. A central figure appears to be singing or speaking, gesturing with their hand. Other figures seem to be playing instruments or attending the scene.

- Setting: The overall impression suggests a festival, celebration, or a gathering of some kind.

- Medium and style: The sketch is in pencil, with a light touch and detailed linework.

- Condition of the paper: The paper appears aged, with some creases and marks, suggesting it is an older piece.

Overall, the image portrays a historical cultural scene, most likely South Asian, with boats and figures in traditional attire.

Created by gemini-2.0-flash on 2025-05-13

The image is a pencil sketch on a sheet of paper. The artwork is a collection of figures, some in boats and others appearing to be engaged in a performance of some kind.

In the upper portion of the sketch, there are three boats, each filled with figures. The two larger boats have a pavilion-like structure in the center and are heavily populated. A smaller boat, with a single figure, is also present.

Below the boats, the primary focus of the sketch appears to be a performance. A central female figure is depicted with her arms outstretched, seemingly singing or speaking. To her left, there is a musician with a stringed instrument, and a group of individuals are seated, possibly playing instruments or singing along. To her right, there are three figures, including one seated on a chair, possibly observing or directing the performance. The details of the figures are not fully rendered, but their gestures and postures suggest engagement in a collective activity.

The paper has some tears and imperfections around the edges, and the pencil lines are light, giving the sketch a preliminary or draft-like quality.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-01

The image is a sketch featuring multiple scenes, likely from a historical or cultural context. The sketches appear to be drawn in pencil on aged, yellowed paper, indicating that the drawing is quite old. Here is a detailed breakdown of the scenes depicted:

- Top Left: A small boat with a single figure on board, possibly rowing or navigating.

- Top Center: A larger boat with a structure resembling a pavilion or a small temple in the center. Several figures are depicted on board, some seated and others standing, suggesting a gathering or a ceremonial event.

- Top Right: Another boat with a canopy-like structure, carrying multiple figures. The figures seem to be engaged in some activity, possibly a celebration or a ritual.

- Bottom Left: A group of figures, some seated and others standing, possibly engaged in a conversation or a meeting. The figures are depicted in traditional attire, suggesting a historical or cultural scene.

- Bottom Center: A single figure in a long dress, possibly performing a dance or a ritual. The figure is depicted with an outstretched arm, indicating movement or gesture.

- Bottom Right: A group of figures, some standing and others seated, engaged in what appears to be a musical performance. One figure is holding an instrument, possibly a stringed instrument, while others seem to be singing or playing other instruments.

The sketches are detailed and provide a glimpse into the attire, activities, and possibly the social or cultural practices of the time. The aged paper and the style of the drawings suggest that this could be a historical document or artwork.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-10

The image is a pencil sketch on a piece of paper, showing various scenes of people and boats. The sketch appears to be done in a sketchbook, as indicated by the creases and folded edges of the paper. The scenes are drawn in a rudimentary style, possibly indicating an early sketch or a casual drawing.

- Top Left: A small fishing boat with a single person.

- Top Middle: A larger boat with several people, some seated and some standing. There is a structure resembling a pagoda or dome in the center.

- Top Right: Another boat with people, similar to the one in the top middle section.

- Middle Left: A smaller group of people, possibly playing musical instruments.

- Middle Right: A larger group of people, including one person standing with a raised hand, possibly singing or speaking, and others standing around.

- Bottom Left: A smaller group of people, possibly playing musical instruments similar to the ones in the middle left section.

The overall scene suggests a depiction of a gathering or event with musical performances and possibly a ceremonial or cultural context, given the presence of the pagoda-like structure.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-10

This image appears to be a pencil sketch on aged paper, possibly from the 19th or early 20th century. The drawing is divided into sections with various scenes and figures.

In the upper part of the image, there are two scenes depicting people on boats. Each boat has a small pavilion or canopy structure, and the boats are filled with numerous figures, some of whom appear to be seated and others standing. The figures are drawn in a detailed manner, showing their clothing and postures.

In the lower part of the image, there is a scene with several figures, possibly musicians and performers. Some of the figures are holding musical instruments, such as stringed instruments and possibly a drum. One figure appears to be singing or speaking, as indicated by the hand gestures and the posture. There is also a figure sitting on a chair, possibly a person of importance or a leader.

The overall style of the drawing suggests it might be a study or a preliminary sketch for a more detailed painting or illustration. The paper shows signs of aging, with discoloration and some creases, indicating it has been handled and stored for a considerable amount of time.