Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 19-36 |
| Gender | Male, 54.8% |
| Sad | 45.2% |
| Confused | 46% |
| Happy | 46.2% |
| Calm | 50.9% |
| Surprised | 45.5% |
| Angry | 45.4% |
| Disgusted | 45.8% |

Feature analysis

Amazon

| Label | Confidence |
| --- | --- |
| Person | 42.3% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 96% |
| text visuals | 3.4% |

Captions

Microsoft

Created by unknown on 2019-07-06

| Caption | Confidence |
| --- | --- |
| a fabric surface | 40.5% |
| a close up of a fabric surface | 40.4% |
| a close up of fabric | 37.1% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-18

| Caption | Confidence |
| --- | --- |
| a photograph of a woman in a kimono kimono kimono kimono kimono kimono | -100% |

OpenAI GPT

Created by gpt-4 on 2024-12-09

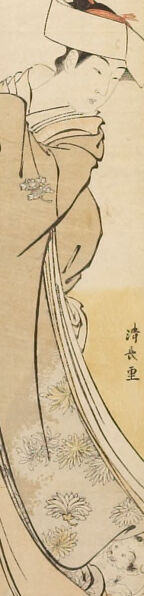

This is a tall, narrow painting or print showing a Japanese-style artwork. The composition is dominated by the figure of a woman depicted in profile. She is wearing traditional Japanese clothing which drapes in elegant folds and is decorated with intricate patterns that resemble flowers or plants. The sleeves of her garment are particularly long and flow gracefully down the image.

The woman's hair is styled in a manner consistent with certain periods of Japanese history, adorned with what appears to be a hair accessory or ornament. She seems to be looking downwards with a gentle or contemplative expression. The color palette is subdued, with neutral tones and hints of color in the woman's clothing and hair ornament.

In the background, there is a portion of a bamboo grove, which further emphasizes the Japanese aesthetic and may suggest a sense of stillness or connection to nature. The artwork conveys a sense of elegance and poise, which is characteristic of many traditional Japanese paintings that focus on the beauty of the subject and their attire.

On the right edge of the artwork, there is Japanese text, likely containing the signature of the artist or information relevant to the scene depicted. The style suggests that this may be a work from a historic period, possibly Edo period, with its linear yet delicate brushwork.

Created by gpt-4o-2024-05-13 on 2024-12-09

This image appears to be a traditional Japanese ukiyo-e painting, depicting a graceful woman in an elegant pose. She is dressed in a kimono with intricate patterns and holds her clothing delicately. Her head is adorned with a hat, and the overall composition includes delicate lines and soft, subdued colors that are characteristic of this art style. Above her, there are bamboo branches, adding a touch of nature to the scene. The image has a vertical orientation, which is typical for hanging scrolls in Japanese art.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2024-12-09

This image appears to be an East Asian style painting, likely of a Japanese origin. The main focus of the painting is a depiction of a woman wearing a traditional Japanese kimono, standing amid a bamboo forest. The woman's figure is rendered in a graceful and elegant manner, capturing the fluid movements and delicate details of the kimono fabric. The bamboo trees in the background create a serene and natural backdrop, enhancing the overall aesthetic of the painting. The style and composition suggest this is a work of traditional Japanese art, perhaps from the Ukiyo-e or Yamato-e artistic traditions.

Created by claude-3-5-sonnet-20241022 on 2024-12-09

This is a traditional Japanese woodblock print (hashira-e or pillar print) that appears to be from the Edo period. It shows an elegant figure in flowing robes standing beneath bamboo leaves. The kimono is decorated with delicate floral patterns and has graceful, sweeping lines. The composition is vertical, characteristic of hashira-e format, with bamboo leaves at the top creating a natural canopy above the scene. The figure is wearing traditional Japanese dress with an ornate hairstyle and hair ornament. The artwork uses a subtle color palette with muted tones and demonstrates the refined aesthetic typical of Japanese ukiyo-e prints. The artist's signature appears to be visible in the lower portion of the print.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-12-09

The image is a traditional Japanese woodblock print, likely created by Utagawa Kunisada, depicting a woman in a kimono. The woman is shown from the side, facing left, with her head turned slightly towards the viewer. She wears a long, flowing kimono with a floral pattern and has a white obi tied around her waist. Her hair is styled in an updo, and she wears a white hat with a red ribbon.

In the background, there are some bamboo leaves and a small building, possibly a temple or shrine. The overall atmosphere of the print is one of serenity and elegance, with the woman's gentle expression and the peaceful surroundings creating a sense of calm.

The print is signed by the artist in the lower right corner, and the title is written in Japanese characters above the woman's head. The print is likely part of a series of works by Kunisada, who was known for his portraits of women and his use of vibrant colors and intricate details.

Overall, the image is a beautiful example of traditional Japanese art, showcasing the artist's skill and attention to detail.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-09

The image depicts a traditional Japanese art piece, likely a woodblock print, featuring a woman in a kimono. The woman is positioned on the left side of the image, facing right, with her head turned slightly towards the viewer. She wears a long-sleeved kimono adorned with a floral pattern and has her hair styled in an updo. A small bird perches on her head, adding a touch of elegance to the scene.

In the background, a few bamboo stalks are visible, along with some Japanese characters written vertically. The overall atmosphere of the image exudes serenity and refinement, characteristic of traditional Japanese art. The use of muted colors and delicate lines creates a sense of subtlety and restraint, while the woman's pose and expression convey a sense of grace and poise.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-02-27

The image is an artwork that depicts a person in traditional Japanese clothing, possibly a kimono. The person is wearing a white hat and has a long, flowing robe with intricate designs. The robe is adorned with a floral pattern, and the person's hands are holding the fabric in a way that suggests they are performing a traditional dance or ritual. The background of the image is a simple beige color, with a bamboo tree and a sign with Chinese characters in the upper right corner.

Created by amazon.nova-lite-v1:0 on 2025-02-27

The image is a traditional Japanese painting featuring a woman in a kimono. The woman is depicted in a graceful pose, standing in front of a bamboo plant. The painting has a minimalist style, with a focus on the flowing lines of the kimono and the delicate details of the bamboo leaves. The woman's face is not visible, but her hair is styled in a traditional Japanese manner. The painting is in black and white, with some subtle shading to add depth and texture. The overall effect is serene and peaceful, evoking a sense of tranquility and harmony.

Google Gemini

Created by gemini-2.0-flash on 2025-05-14

The artwork presents a vertical rectangular format and depicts a standing figure in a traditional Japanese-style robe. The figure's head is adorned with a square-shaped hat, and a slender red ornament is visible on top. The robe itself is light in color, embellished with floral patterns, and a lighter colored sash or undergarment is visible beneath.

In the background, there is a sparse representation of bamboo leaves and stems, contributing to a natural, serene atmosphere. A section of a dark object, possibly a scroll or banner, is visible near the bamboo, adding a layer of cultural or artistic context to the scene.

To the right side of the figure, vertical inscriptions are seen, likely the artist's signature or an inscription related to the artwork. The overall impression of the artwork is one of elegance and traditional Japanese aesthetics, with delicate lines and a subdued color palette.

Created by gemini-2.0-flash-lite on 2025-05-14

Here is a description of the image:

This is a Japanese woodblock print, showcasing a figure, likely a woman, in traditional attire. The figure is elegantly posed, with their head slightly tilted and eyes closed. They are wearing a formal hat and layered kimono with floral patterns. The print uses a muted color palette of beige, soft yellows, and earthy tones, which contributes to the graceful and serene atmosphere. Bamboo stalks are visible in the upper left, while the bottom part of the image has a pattern, possibly indicating the base the figure stands on. The artist's name or signature, written in Japanese characters, is located on the right side of the image.