Machine Generated Data

Tags

AWS Rekognition

| Milk | 90.9% |

Categories

Imagga

created on 2022-06-17

| cars vehicles | 100% |

Captions

Microsoft

created by unknown on 2022-06-17

| a cup of coffee | 28.9% |
| a close up of a cup | 28.8% |
| close up of a cup | 28.7% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-04-29

a photograph of a black and white photo of a vase with a chinese writing on it

Created by general-english-image-caption-blip-2 on 2025-07-07

a black and white photo of a bowl with a chinese character on it

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-10

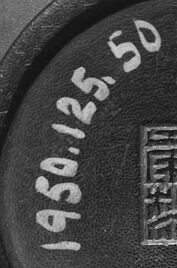

This is a black and white photograph of the base of a ceramic or lacquered bowl. The bowl appears to have a smooth, rounded surface and a circular base with an engraved stamp or seal at its center, featuring intricate geometric patterns and Chinese or East Asian characters. There is also a hand-written label on the underside near the seal that reads "1950.125.50," which might be a catalog or inventory number. The lighting emphasizes the texture and form of the bowl. The image is taken from directly above the bottom of the object.

Created by gpt-4o-2024-08-06 on 2025-06-10

The image depicts the bottom of a bowl. The bowl is predominantly dark in color and has a smooth surface. At the center of the base, there is a square seal or marking, which appears to contain Chinese characters. On the left side of this marking, the numbers "1950.125.50" are handwritten, likely an inventory or catalog number. The lighting in the image creates a subtle gradient on the curved surface of the bowl, accentuating its shape. The photograph is in black and white and is framed with a visible black border.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-18

The image shows a close-up view of a metal object with a circular shape. The surface of the object has a smooth, reflective appearance. On the center of the object, there is a stamp or imprint that appears to be some kind of symbol or logo. The background is dark, creating a high-contrast black and white image.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-18

This appears to be a black and white photograph showing the bottom or base of what looks like a ceramic or pottery piece. There are markings visible on the base including what appears to be numbers "163.25.50" and some Chinese characters or maker's marks. The image is taken at an angle that creates a circular perspective, with the base markings in the center surrounded by the curved edges of the vessel. The surface appears to have a smooth, possibly glazed finish.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-10

The image depicts a black and white photograph of the bottom of a bowl or vase, likely made of ceramic material. The object is circular in shape, with a flat base and a slightly raised edge around the circumference. The surface appears smooth, with no visible patterns or designs.

At the center of the base is an embossed logo or mark, consisting of four Chinese characters arranged in a square formation. The characters are intricately designed and appear to be a combination of lines and geometric shapes. The logo is surrounded by a thin border, which adds to its overall aesthetic appeal.

On the left side of the base, there is a handwritten notation in white ink that reads "1950.125.50." This suggests that the object may have been part of a collection or exhibit, possibly from the 1950s. The notation could also indicate the object's accession number or catalog number.

The background of the image is a plain white surface, which helps to highlight the details of the object. There are no other objects or features visible in the image, allowing the viewer to focus solely on the bowl or vase.

Overall, the image provides a clear and detailed view of the object's design and craftsmanship. The embossed logo and handwritten notation add an air of authenticity and historical significance to the image, making it a valuable resource for those interested in ceramics or art history.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-10

The image presents a close-up view of the bottom of a ceramic bowl, showcasing its base and the surrounding area. The bowl's base is circular, with a raised edge that forms a lip around the circumference. A small, square indentation is visible at the center of the base, featuring an intricate design that appears to be a Chinese character or symbol.

The bowl's surface exhibits a dark brown or black coloration, which may be due to the material it is made of or the lighting conditions in which the photograph was taken. The background of the image is white, suggesting that the bowl was placed on a plain surface for photography purposes.

A handwritten notation is visible on the left side of the bowl's base, reading "1950.125.50." This notation likely indicates the bowl's catalog number or identification code, which could be useful for researchers or collectors seeking to learn more about the object.

Overall, the image provides a detailed view of the bowl's base and surrounding area, highlighting its unique features and characteristics. The presence of the handwritten notation adds an element of context and provenance to the image, suggesting that the bowl may be part of a larger collection or archive.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-09

The image is a black-and-white photograph of a round object, possibly a bowl, with a shiny surface. The object is placed on a white surface, and the light is shining on it, creating a shadow on the right side. The object has a circular rim with a design in the center, and the design has the words "1950-125-50" written on it. The design also has a logo with some text and a symbol.

Created by amazon.nova-pro-v1:0 on 2025-06-09

The image is a black-and-white photograph of a circular object, possibly a ceramic bowl. The object has a smooth, glossy surface and a slightly curved shape. In the center of the object, there is a circular design with a rectangular pattern and some text. The text reads "1950-12-50". The rectangular pattern has four squares, each with a different symbol inside. The image is captured from a top-angle view, and the object appears to be placed on a white surface.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-26

Here is a description of the image:

The image is a black and white photograph of the underside of a dark-colored bowl. The bowl's smooth, curved surface dominates the frame. Near the center of the bowl's underside, a smaller, circular base is visible. On this base, there is a stamped inscription. The inscription includes numbers "1950.125.50" in a curved arrangement. Beneath the numbers, a square, block-like design, presumably a seal, is visible. The photograph is well-lit, with the details of the bowl clearly defined. The contrast between the bowl and the background is high, emphasizing its form. The photograph is bordered by a black frame.

Created by gemini-2.0-flash on 2025-05-26

Here is a description of the image:

The image shows the underside of a bowl-shaped object in black and white. The object has a smooth, rounded surface with a smaller circular base. On the base, there is a faded inscription "1950.125.50" written around the edge, and a stamped square seal with intricate calligraphic characters in the center. The lighting creates highlights on the bowl's surface, accentuating its shape and texture. The overall tone is dark, with shadows contributing to the depth of the image.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-02

The image shows the bottom of a ceramic object, likely a bowl or a cup, with a smooth, dark finish. The base of the object features an inscription that includes both numbers and Chinese characters. The numbers "1950.125.50" are printed on the left side of the base, possibly indicating a catalog or inventory number, suggesting that the object is part of a collection or museum piece. On the right side, there are four Chinese characters arranged in a square formation. These characters might be a mark or signature of the artisan or manufacturer, or they could indicate the place of origin or the type of ceramic. The overall appearance of the object suggests it is an antique or a piece of cultural significance.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-06-29

The image appears to be a close-up of the base of a ceramic object, possibly a bowl or a plate, viewed from above. The object has a glossy, dark surface with a circular design on the bottom. At the center of this design, there is an intricate emblem or stamp that seems to be a signature or maker's mark. Surrounding this emblem, there is text that reads "1950.125.50". The style of the emblem and the text suggests that this might be from a museum collection, as the numbers are commonly used for cataloging items in a museum. The overall appearance and detail suggest that this is a piece of ceramic art, possibly of historical or cultural significance.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-06-29

This black-and-white image shows the bottom of a bowl or similar ceramic object. The surface appears to be smooth and slightly curved, suggesting it is the interior of the bowl. In the center, there is a circular area with an engraved or embossed symbol that appears to be an East Asian seal or stamp, possibly Chinese or Japanese. To the left of the seal, there is a number "1950.125.50" etched or engraved. The image has a vintage or historical quality, indicated by the monochrome tone and the style of the engraving. The overall condition of the bowl appears to be slightly worn, with some minor marks and blemishes visible.