Machine Generated Data

Feature analysis

Amazon

AWS Rekognition

| Painting | 85.1% |

Categories

Imagga

created on 2020-04-23

| paintings art | 97.9% |

Captions

Microsoft

created by unknown on 2020-04-23

| a close up of a desert | 69.2% |
| a person in a desert | 46.4% |
| close up of a desert | 46.3% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-04-29

a photograph of a group of sheeps are gathered around a mountain

Created by general-english-image-caption-blip-2 on 2025-06-27

a painting of a mountain with a bird on top

OpenAI GPT

Created by gpt-4 on 2024-12-03

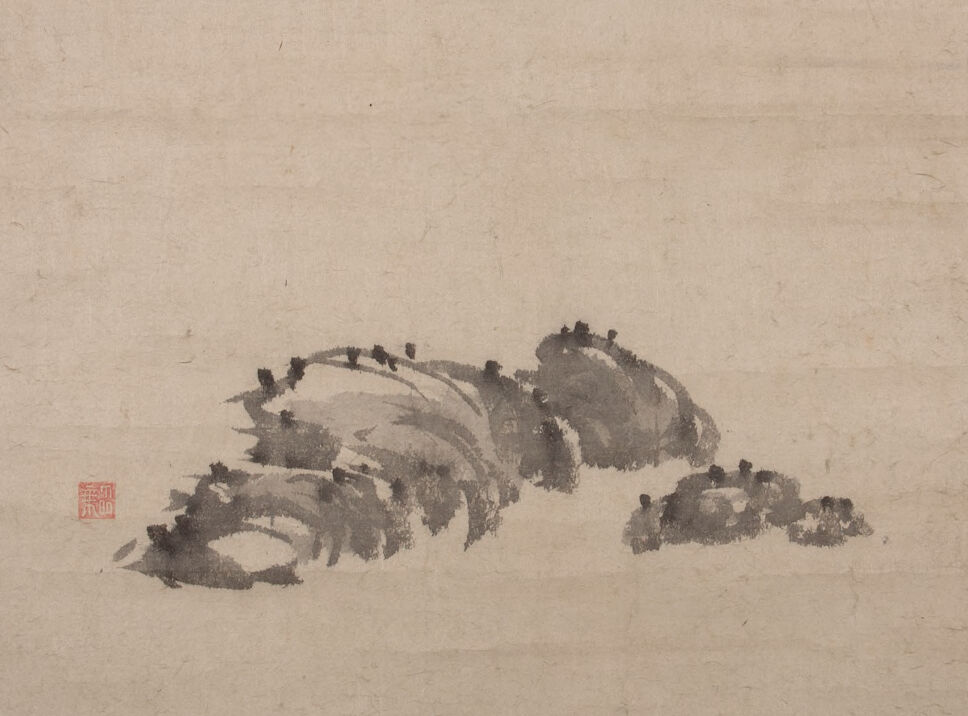

This image is a traditional East Asian ink wash painting, commonly associated with Chinese sumi-e or Japanese ink painting styles. It depicts an abstract landscape scene consisting of a few brushstrokes that suggest the shapes of mountains or rocks, with darker ink spots potentially representing vegetation or trees. There is a large white space, which is typical of this style, meant to indicate fog, sky, or perhaps water. To the bottom left of the composition, there is a red seal or stamp that is often used by artists to sign their work in East Asian art. The paper has an aged appearance, with a beige color and slight creases or textures which contribute to the simple yet evocative nature of the artwork.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-27

This is a traditional East Asian ink painting, likely Chinese or Japanese, executed in a minimalist style. The artwork shows what appears to be a branch or plant form rendered in black ink on a light, natural-colored background. The brushwork is loose and expressive, creating a flowing, organic shape that stretches horizontally across the composition. In the lower right corner, there is a red seal stamp, which is typical of East Asian artwork and usually contains the artist's name or studio mark. The overall composition is very spare and demonstrates the importance of empty space (or "ma" in Japanese aesthetics) in this artistic tradition. The ink wash technique creates various gradations of gray, giving the form a sense of depth and movement despite its simplicity.

Created by claude-3-5-sonnet-20241022 on 2024-12-28

This is a minimalist East Asian ink painting, likely Japanese or Chinese, showing what appears to be rocks or small hills rendered in loose, flowing brushstrokes of black or gray ink on a light beige background. The composition is simple and spare, with the rocky forms arranged horizontally across the middle of the image. There's a red seal or stamp mark visible in the lower left corner, which is traditional in East Asian brush painting. The style is very gestural and abstract, emphasizing the natural flow of the ink and the artist's spontaneous brush movements. This type of painting often reflects Zen Buddhist influences in its simplicity and directness.

Created by claude-3-haiku-20240307 on 2024-11-27

The image appears to be an ink painting or drawing depicting an abstract, cloud-like form. The form has a soft, wispy quality, with indistinct, organic shapes that suggest natural elements like rocks or foliage. The overall composition has a sense of movement and fluidity, with the forms appearing to flow across the page. The image has a monochrome palette, using various shades of black and gray to create the atmospheric, minimalist aesthetic. The presence of a red seal or stamp in the bottom right corner suggests this is a work of traditional East Asian art, likely Chinese or Japanese in origin.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-26

The image depicts a serene and minimalist painting of a mountain range, rendered in shades of gray on a beige background. The painting is executed in a loose, expressive style, with bold brushstrokes that evoke a sense of movement and energy.

Key Features:

- Mountain Range: The central focus of the painting is a rugged mountain range, depicted in various shades of gray. The mountains are rendered in a stylized manner, with smooth curves and jagged peaks.

- Brushstrokes: The brushstrokes used to create the painting are bold and expressive, with visible texture and movement. This gives the painting a dynamic and energetic feel.

- Color Palette: The color palette is limited to shades of gray, which creates a sense of simplicity and elegance. The beige background provides a subtle contrast to the darker grays used for the mountains.

- Composition: The composition of the painting is balanced and harmonious, with the mountain range placed centrally in the frame. The negative space around the mountains creates a sense of depth and atmosphere.

- Symbolism: The painting may be interpreted as a representation of the natural world, with the mountains symbolizing strength, resilience, and beauty. The use of gray tones may also suggest a sense of neutrality or balance.

Overall Impression:

The painting exudes a sense of calmness and serenity, inviting the viewer to contemplate the beauty of the natural world. The loose brushstrokes and expressive style create a sense of movement and energy, while the limited color palette adds a touch of simplicity and elegance.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-24

The image depicts a serene and minimalist landscape painting, characterized by a light brown background with subtle texture. The focal point of the painting is a cluster of dark gray hills or mountains, rendered in loose brushstrokes that evoke a sense of movement and fluidity. The hills are depicted in various shades of gray, with some areas appearing lighter or darker than others, creating a sense of depth and dimensionality.

In the bottom-left corner of the painting, a small red stamp or seal is visible, featuring Chinese characters that add an air of authenticity and cultural significance to the artwork. The overall effect of the painting is one of calmness and tranquility, inviting the viewer to step into the peaceful world it depicts. The use of muted colors and simple composition creates a sense of simplicity and elegance, making the painting a beautiful and contemplative piece.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-05

The image is a black-and-white painting of a crab on a beige background. The crab has a long, curved body and a pair of large, round eyes. Its claws are extended, and its body is slightly curved. The crab's body is painted in shades of gray and black, and the background is a light beige color. The painting appears to be in a vintage style, with a slightly blurry effect.

Created by amazon.nova-pro-v1:0 on 2025-01-05

The image is a monochromatic painting of a group of gourds. The gourds are depicted in a simplistic and abstract style, using a combination of black and gray tones. The shapes are fluid and organic, with some gourds appearing to be elongated and others more rounded. The painting has a minimalist aesthetic, with the focus on the forms and textures of the gourds. There is a red stamp on the left side of the image.

Google Gemini

Created by gemini-2.0-flash on 2025-04-27

The image appears to be a traditional East Asian ink wash painting on a tan or beige background. The artwork depicts a landscape, likely a rocky hill or small mountain range, rendered in varying shades of gray and black.

The composition is simple, with the subject centrally placed on the textured paper, leaving a significant amount of empty space above and around the focal point. The brushstrokes are loose and expressive, creating a sense of depth and texture on the rocks.

There are small, dark dots or strokes on top of the rocks, possibly representing trees or vegetation. The bottom of the rocks seem to blend into the background, giving an impression of a misty or undefined base.

In the bottom left corner of the image, there's a red seal, a common element in East Asian art that signifies the artist's signature or mark. The overall feel of the painting is serene and contemplative, typical of the East Asian artistic tradition.

Created by gemini-2.0-flash-lite on 2025-04-27

Overall Impression:

The image appears to be a traditional East Asian ink wash painting. It has a minimalist quality with a focus on conveying atmosphere and essence rather than detailed representation.

Composition:

- The painting has a horizontal orientation.

- The central focus of the artwork is a series of rounded hills or rock formations. These are rendered in varying shades of gray ink, suggesting depth and texture through the use of brushstrokes and washes.

- Above the hills is a solid, neutral background.

- In the lower left corner, there's a small, red stamp.

Technique & Style:

- The painting is executed primarily with shades of gray ink, likely using a brush.

- The style is characterized by a focus on suggestion rather than detailed depiction. The forms of the hills are generalized, and the texture is implied through the brushwork and washes.

- The composition is simple, emphasizing the serene and contemplative qualities of the subject matter.

Possible Interpretations:

- The painting could represent a landscape scene, with hills or rocks forming a natural feature.

- The minimal use of detail allows the viewer's imagination to fill in the details. It emphasizes the beauty of natural forms and the meditative aspect of the art form.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-04-26

The image depicts a traditional ink painting on a light-colored, textured paper. The artwork features a minimalist landscape with a series of undulating, abstract forms that suggest mountains or hills. The forms are rendered in varying shades of black and gray, creating a sense of depth and dimension. The brushwork is loose and expressive, with visible strokes that add texture and movement to the scene. In the bottom left corner, there is a red seal impression, which is a common element in East Asian art, often used to sign or authenticate the work. The overall composition is simple and evocative, capturing the essence of a natural landscape with a few elegant strokes.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-06-25

This image is a traditional ink and wash painting, likely of East Asian origin, possibly Chinese or Japanese. It features a minimalist and abstract depiction of natural forms, possibly mountains or hills, rendered in varying shades of black and gray ink. The brushstrokes are loose and expressive, with a strong emphasis on the interplay of light and shadow. The composition is simple and uncluttered, with a large empty space in the upper portion, which could suggest a sky or an open landscape. The texture of the paper adds to the overall sense of depth and atmosphere, while the red stamp in the bottom left corner is a common feature in East Asian art, often used to mark the artist's signature or seal.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-06-25

This image is a traditional East Asian ink painting, likely inspired by Chinese or Japanese styles. It features an abstract representation of a landscape, possibly depicting rocks or clouds, using varying shades of ink. The strokes are loose and expressive, creating a sense of movement and texture. The composition is minimalistic, with a significant amount of empty space, emphasizing the forms and shapes. The use of ink gradients and splashes gives the painting a dynamic and fluid appearance. There is a red seal stamp in the bottom left corner, which is commonly used in East Asian art to signify the artist's signature or studio. The background is plain, with horizontal lines suggesting the texture of the paper or silk used for the painting.