Machine Generated Data

Tags

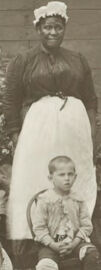

Amazon

created on 2019-06-07

Clarifai

created on 2019-06-07

Imagga

created on 2019-06-07

| Tag | Confidence |
|---|---|
| barrow | 19.7 |
| man | 18.1 |
| person | 16.1 |
| handcart | 15.9 |
| people | 15.1 |
| cemetery | 14.8 |
| water | 13.3 |
| black | 12.6 |
| old | 12.5 |
| wheeled vehicle | 12.4 |
| vintage | 12.4 |
| outdoors | 12.1 |
| dirty | 11.7 |
| grunge | 11.1 |
| summer | 10.9 |
| child | 10.5 |
| sky | 10.2 |
| two | 10.2 |
| shovel | 10.2 |
| dark | 10 |
| park | 9.9 |
| sunlight | 9.8 |
| vehicle | 9.7 |
| landscape | 9.7 |
| wall | 9.6 |
| forest | 9.6 |
| male | 9.4 |
| sand | 8.9 |
| couple | 8.7 |
| love | 8.7 |
| scene | 8.7 |
| antique | 8.7 |
| cold | 8.6 |
| life | 8.6 |
| men | 8.6 |
| tree | 8.5 |
| beach | 8.4 |
| girls | 8.2 |
| danger | 8.2 |
| industrial | 8.2 |
| sunset | 8.1 |
| holiday | 7.9 |
| sea | 7.8 |
| travel | 7.7 |
| outdoor | 7.6 |
| retro | 7.4 |
| light | 7.4 |
| lake | 7.3 |
| sun | 7.2 |
| lifestyle | 7.2 |
| art | 7.2 |
| wet | 7.2 |
| trees | 7.1 |
| season | 7 |

Google

created on 2019-06-07

| Tag | Confidence |
|---|---|
| Photograph | 97.4 |
| Snapshot | 89.5 |
| Child | 78.5 |
| Stock photography | 71.4 |
| Photography | 70.6 |
| Vintage clothing | 67.2 |
| Adaptation | 67 |
| Room | 65.7 |
| Family | 62 |
| Black-and-white | 56.4 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 4-9 |
| Gender | Female, 50.2% |
| Surprised | 45.2% |
| Angry | 45.1% |
| Calm | 45.4% |
| Sad | 46.6% |
| Confused | 45.1% |
| Disgusted | 45.2% |
| Happy | 52.5% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 4-9 |
| Gender | Male, 53.6% |
| Confused | 45.2% |
| Angry | 46% |
| Surprised | 45.1% |
| Calm | 45.2% |
| Sad | 53.1% |
| Happy | 45.1% |
| Disgusted | 45.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 9-14 |
| Gender | Male, 54.4% |
| Angry | 45.6% |
| Surprised | 45.2% |
| Sad | 47.9% |
| Confused | 45.9% |
| Happy | 47.3% |
| Calm | 45.6% |
| Disgusted | 47.6% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 6-13 |
| Gender | Female, 53.9% |
| Angry | 45.3% |
| Sad | 53.5% |
| Surprised | 45.1% |
| Confused | 45.3% |
| Happy | 45.2% |
| Calm | 45.5% |
| Disgusted | 45.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 20-38 |
| Gender | Female, 53.6% |
| Happy | 45% |
| Angry | 45.1% |
| Calm | 45.1% |
| Surprised | 45% |
| Disgusted | 45.1% |
| Sad | 54.7% |
| Confused | 45% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Female, 54.1% |
| Sad | 52% |
| Surprised | 45.4% |
| Calm | 45.4% |
| Disgusted | 45.2% |
| Confused | 46% |
| Angry | 45.5% |
| Happy | 45.4% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 6-13 |
| Gender | Male, 53.7% |
| Calm | 45.9% |
| Angry | 45.5% |
| Confused | 45.1% |
| Surprised | 45.1% |
| Sad | 53.1% |
| Happy | 45.1% |
| Disgusted | 45.2% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 10-15 |
| Gender | Female, 52.1% |
| Calm | 45% |
| Happy | 45% |
| Surprised | 45% |
| Disgusted | 45% |
| Sad | 54.8% |
| Angry | 45.1% |
| Confused | 45% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 4-7 |
| Gender | Female, 54.7% |
| Surprised | 45% |
| Disgusted | 45% |
| Angry | 45.1% |
| Confused | 45.1% |
| Calm | 45% |
| Happy | 45% |
| Sad | 54.7% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 45-66 |
| Gender | Female, 52% |
| Surprised | 45.2% |
| Angry | 45.3% |
| Calm | 45.4% |
| Sad | 50% |
| Confused | 45.2% |
| Disgusted | 45.2% |
| Happy | 48.7% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 17-27 |
| Gender | Female, 50.2% |
| Calm | 49.6% |
| Confused | 49.5% |
| Happy | 49.5% |
| Angry | 49.5% |
| Surprised | 49.5% |
| Disgusted | 49.5% |
| Sad | 50.4% |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 69.4% |
| pets animals | 11.8% |
| beaches seaside | 6.5% |
| streetview architecture | 5.1% |
| nature landscape | 4.7% |
| people portraits | 1.6% |

Captions

Microsoft

created on 2019-06-07

| Caption | Confidence |
|---|---|
| a vintage photo of a group of people posing for the camera | 93.4% |
| a vintage photo of a group of people posing for a picture | 93.3% |
| a vintage photo of a group of people | 93.2% |

Text analysis

Amazon

9 SR