Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 26-43 |
| Gender | Female, 77% |
| Confused | 5.1% |
| Happy | 7.3% |
| Disgusted | 1.6% |
| Angry | 3.5% |
| Surprised | 9.1% |
| Sad | 4.8% |
| Calm | 68.5% |

Feature analysis

AWS Rekognition

| Feature | Confidence |
|---|---|
| Person | 93.6% |

Categories

Imagga

Created on 2018-03-23

| Category | Confidence |
|---|---|
| people portraits | 90.9% |
| events parties | 7.5% |
| streetview architecture | 0.7% |
| paintings art | 0.3% |
| text visuals | 0.2% |
| pets animals | 0.1% |
| food drinks | 0.1% |
| nature landscape | 0.1% |

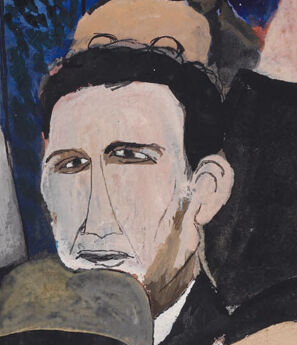

Captions

Microsoft

Created by unknown on 2018-03-23

| Caption | Confidence |
|---|---|
| a group of people posing for the camera | 81.7% |
| a group of people posing for a picture | 81.6% |
| a group of people posing for a photo | 74.1% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-04-28

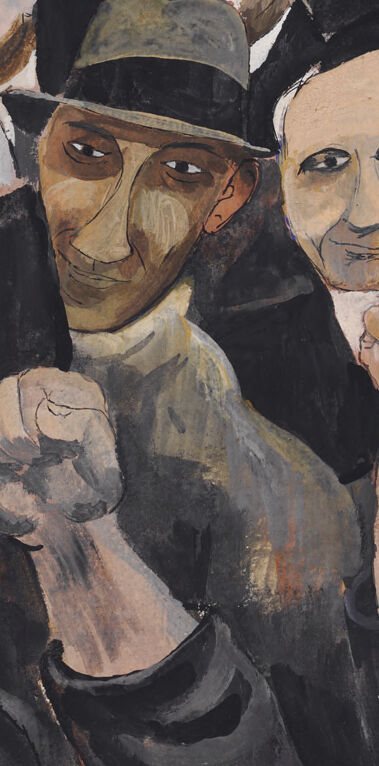

a photograph of a painting of a group of people with their hands up

Created by general-english-image-caption-blip-2 on 2025-06-27

a painting of people holding up their fists in front of a brick wall

OpenAI GPT

Created by gpt-4 on 2024-01-26

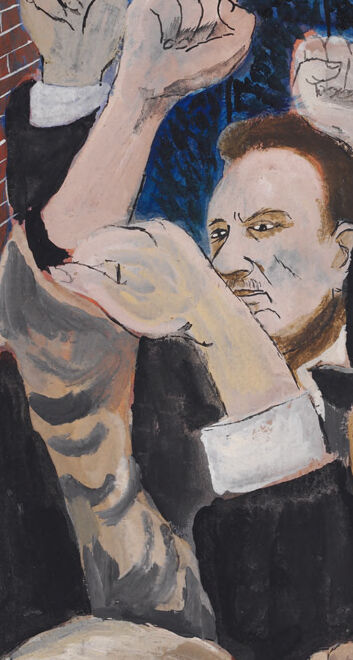

The image depicts a group of people, mostly in dark suits and hats, raising their fists in what appears to be a gesture of solidarity or protest. They stand in front of a red brick wall, next to an ornate, dark metal fence that separates them from a night-time landscape with tall pine trees and a dark blue sky. The atmosphere suggests they are gathered for a cause, possibly at dusk or during the evening.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-24

This appears to be a painting depicting a protest or demonstration scene. The artwork shows a group of people with raised fists in a gesture of solidarity or resistance. They are dressed in dark clothing, many wearing hats typical of a past era: fedoras and caps. The background features a red brick wall on the left side and what appears to be an ornate iron fence with trees visible behind it on the right, set against a dark blue sky. The style of the painting suggests it might be from the early-to-mid 20th century, possibly depicting a labor movement or social protest. The composition creates a powerful sense of unity and collective action among the figures shown.

Created by claude-3-haiku-48k-20240307 on 2024-03-29

The image depicts a crowd of people, with their fists raised in protest or defiance. The figures are stylized and appear to be caricatures, with distinctive facial features and expressions. They are positioned in front of a brick wall, with a wrought-iron gate visible in the background, suggesting an urban setting. The overall scene conveys a sense of collective action and resistance.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-26

The image depicts a painting of a group of people standing in front of a brick wall and an iron gate, with their fists raised in the air. The painting is done in a style reminiscent of Expressionism, with bold brushstrokes and vivid colors.

Key Features:

- Group of People: The central focus of the painting is a group of people, likely men, dressed in dark clothing and hats. They are standing in front of a brick wall and an iron gate, which appears to be closed.

- Raised Fists: Each person in the group has their fist raised in the air, suggesting a sense of unity and defiance.

- Brick Wall: The brick wall behind the group is depicted in shades of red and brown, with a subtle gradient effect that gives it a sense of depth.

- Iron Gate: The iron gate is shown in the background, with intricate details and a sense of texture. It appears to be closed, but the people in the foreground seem to be pushing against it or trying to open it.

- Color Palette: The painting features a muted color palette, with shades of gray, brown, and red dominating the scene. The only splash of color comes from the red hats worn by some of the individuals in the group.

- Style: The painting is done in an Expressionist style, characterized by bold brushstrokes, vivid colors, and a sense of emotional intensity. The artist has used loose, gestural strokes to capture the movement and energy of the scene.

Overall Impression:

The painting conveys a sense of determination and defiance, as if the people in the group are fighting for something or pushing against an obstacle. The closed iron gate and the brick wall behind them suggest that they are facing resistance or opposition, but their raised fists and determined expressions suggest that they will not be deterred. The painting is a powerful commentary on the human spirit and the struggle for freedom and justice.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-24

The image depicts a painting of a group of people standing in front of a brick wall and iron gate, with their hands raised in a gesture of protest or solidarity. The group is diverse, comprising men and women of different ages and ethnicities, all dressed in dark clothing and hats. They are positioned in front of a red brick wall on the left side of the image and an iron gate on the right side, which appears to be set against a blue sky with trees in the background.

The overall atmosphere of the painting suggests a sense of unity and defiance, as if the group is standing together in opposition to something or someone. The use of muted colors and the somber expressions on the faces of the individuals add to the sense of gravity and seriousness of the scene.

The painting is signed by the artist in the bottom-left corner, but the signature is not legible. The style of the painting is reminiscent of the work of Pablo Picasso, with its use of bold lines, geometric shapes, and a focus on capturing the essence of the subject rather than creating a realistic representation.

Overall, the image conveys a powerful message about the importance of standing together in the face of adversity and the need for collective action to bring about change.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-04

The painting depicts a group of people, possibly a crowd, gathered in front of a brick wall with a black gate. They are all wearing hats and raising their fists in the air, which may signify a protest or demonstration. The painting is in black and white, giving it a vintage look. The people are painted in a realistic style, with attention to detail in their facial expressions and clothing.

Created by amazon.nova-lite-v1:0 on 2025-01-04

The image is a painting of a crowd of people standing in front of a brick wall. The people are wearing hats and coats, and some of them have their hands raised in the air. The painting is painted in a realistic style, with attention to detail in the faces and expressions of the people. The brick wall is painted in a reddish-brown color, and there is a black metal gate in the background.

Google Gemini

Created by gemini-2.0-flash on 2025-04-26

The painting depicts a crowd of people, predominantly men, raising their fists in a unified gesture. They appear to be in a protest or rally. The figures are rendered in a somewhat stylized, simplified manner, with strong outlines and minimal detail. They are dressed in dark suits or jackets and wear various hats, creating a sense of uniformity and solidarity.

On the left side of the image, there is a red brick wall. To the right, a dark, wrought-iron gate stands in front of a blue background. The blue background suggests a night sky or a similarly dark, uncertain environment, potentially emphasizing the seriousness or urgency of the group's cause. The lighting seems to be coming from the front, illuminating the faces of the crowd and highlighting their expressions, which range from determination to defiance.

Created by gemini-2.0-flash-lite on 2025-04-26

Here's a description of the image:

The image is a painting that depicts a crowd of people raising their fists in a gesture of solidarity or protest. The style is somewhat stylized, with simplified facial features and a focus on the overall composition and emotion.

Key elements:

- People: There are many individuals in the crowd, mostly men, but also a woman in the lower right corner. Most are wearing hats and dark clothing, giving a sense of a unified group. They are all raising their fists in the air, which strongly suggests a political or social context.

- Background: The background includes a red brick wall on the left and a dark blue area with a black iron gate to the right. The gate appears to be guarding a park or other open space with trees in the background.

- Color and Light: The color palette is somewhat muted, with a focus on earthy tones. The lighting appears to be coming from the top, casting shadows that help define the figures and the scene.

Overall Impression:

The painting conveys a sense of defiance, unity, and possibly struggle or hope. The raised fists, the dark clothing, and the brick wall suggest a gathering of workers or individuals seeking change or expressing dissent. It could be a depiction of a strike, a protest, or a moment of solidarity.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-04-26

The image depicts a group of people, seemingly engaged in a protest or demonstration. The individuals are shown raising their clenched fists, a universal symbol of solidarity and resistance. They are dressed in dark clothing, and some are wearing hats, giving a sense of unity and shared purpose among the group.

The setting appears to be outdoors, with a brick wall on the left side and an ornate iron fence in the background. The sky is dark, suggesting it might be evening or night. The expressions on the faces vary, with some appearing determined and others looking more somber or concerned. The overall mood of the image conveys a sense of collective action and determination.

The style of the painting is somewhat expressionistic, with exaggerated features and bold lines, emphasizing the emotional intensity of the scene. The use of color is muted, with a focus on earthy tones, which further highlights the serious and solemn nature of the gathering. The artist's signature is visible in the bottom left corner.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-06-27

The image is a painting depicting a group of people gathered in front of a brick wall and a wrought-iron fence. The people are raising their fists, which suggests a demonstration or protest. The brick wall, painted in red, dominates the left side of the image, while the dark, sky-blue background with the fence in the center creates a contrast. The individuals in the painting are wearing hats and dark clothing, giving the scene a somber and serious tone. The overall composition and style suggest this is a representation of a social or political movement, possibly from a historical context.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-06-27

This painting depicts a crowd of people, likely in a protest or demonstration, with their fists raised in a show of unity and defiance. The scene is set against a backdrop of a red brick wall on the left and a dark blue sky with a black wrought iron fence on the right. The individuals in the crowd are dressed in a variety of clothing, including hats, suits, and casual attire, suggesting a diverse group. The expressions on their faces range from determined to joyful, indicating a strong sense of purpose and camaraderie. The overall style of the painting is somewhat stylized and expressive, with bold lines and a limited color palette that emphasizes the reds, blues, and dark tones of the scene. The artist's signature is visible in the bottom left corner.