Machine Generated Data

Tags

Amazon

created on 2019-04-08

Clarifai

created on 2018-03-16

Imagga

created on 2018-03-16

Google

created on 2018-03-16

| photograph | 95.6 |
| black | 95 |
| black and white | 89.1 |
| snapshot | 81.8 |
| vintage clothing | 70.9 |
| art | 66.4 |
| monochrome | 64.4 |
| drawing | 61.7 |
| monochrome photography | 61.7 |
| artwork | 59.6 |
| visual arts | 56.8 |

Color Analysis

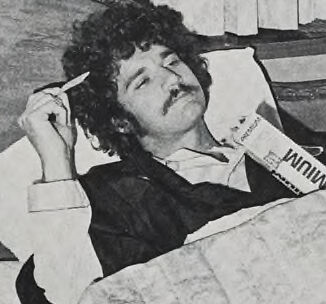

Face analysis

Amazon

AWS Rekognition

| Age | 26-43 |
| Gender | Male, 99.4% |
| Sad | 5.6% |
| Calm | 81.9% |
| Angry | 2.9% |
| Surprised | 1% |
| Confused | 6.8% |
| Happy | 0.8% |
| Disgusted | 1% |

Feature analysis

Amazon

| Person | 89.6% |

Categories

Imagga

| paintings art | 99.9% |
| streetview architecture | 0.1% |

Captions

Microsoft

created on 2018-03-16

| a person lying on a bed | 63.2% |
| a person lying in bed reading a book | 37.3% |
| a person lying on a bed | 37.2% |

Text analysis

Amazon

Wniw

FA

FA