Machine Generated Data

Tags

Amazon

created on 2022-01-08

| Tag | Confidence |
| --- | --- |
| Clothing | 99.6 |
| Apparel | 99.6 |
| Person | 99.4 |
| Human | 99.4 |
| Person | 99.3 |
| Person | 99.2 |
| Person | 97.1 |
| Person | 96.1 |
| Person | 95.6 |
| Hardhat | 93.2 |
| Person | 91.2 |
| Display | 90.9 |
| Screen | 90.9 |
| Electronics | 90.9 |
| Person | 87.4 |
| Hat | 86.6 |
| Person | 83.2 |
| Person | 82.4 |
| Person | 79.3 |
| Helmet | 78.8 |
| Monitor | 65.8 |
| Person | 64.6 |
| TV | 64.4 |
| Television | 64.4 |
| Lighting | 63.8 |
| Face | 61.9 |
| Person | 61.3 |
| Crowd | 58.9 |
| Sailor Suit | 58.8 |
| Person | 57.5 |
| Crash Helmet | 56.4 |
| Hat | 55.6 |
| City | 55.6 |
| Building | 55.6 |
| Urban | 55.6 |
| Town | 55.6 |

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

| Tag | Confidence |
| --- | --- |
| cowboy hat | 40.3 |
| hat | 40.2 |
| headdress | 26.8 |
| clothing | 23.7 |
| man | 20.8 |
| male | 18.4 |
| black | 17.4 |
| people | 15.6 |
| world | 15.2 |
| person | 14.4 |
| covering | 14.2 |
| business | 14 |
| architecture | 11.7 |
| consumer goods | 11.6 |
| billboard | 11.5 |
| adult | 11 |
| car | 9.9 |
| businessman | 9.7 |
| portrait | 9.7 |
| men | 9.4 |
| work | 9.4 |
| industry | 9.4 |
| signboard | 9.3 |
| building | 9.2 |
| entertainment | 9.2 |
| holding | 9.1 |
| musical instrument | 9 |
| one | 8.9 |
| structure | 8.9 |
| looking | 8.8 |
| smiling | 8.7 |
| smile | 8.5 |
| percussion instrument | 8.4 |
| old | 8.4 |
| city | 8.3 |
| silhouette | 8.3 |
| job | 8 |
| equipment | 7.9 |
| uniform | 7.9 |
| sitting | 7.7 |
| happy | 7.5 |
| vehicle | 7.5 |
| outdoors | 7.5 |
| marimba | 7.3 |
| protection | 7.3 |
| suit | 7.2 |
| night | 7.1 |
| television | 7.1 |

Google

created on 2022-01-08

| Tag | Confidence |
| --- | --- |
| Hat | 90.2 |
| Fedora | 86.3 |
| Coat | 85.5 |
| Headgear | 82 |
| Suit | 80.1 |
| Font | 79.1 |
| Sun hat | 72.7 |
| Vintage clothing | 68 |
| White-collar worker | 65.4 |
| History | 64.3 |
| Monochrome | 63.1 |
| Advertising | 62.3 |
| Monochrome photography | 61.7 |
| Rectangle | 61 |
| Art | 60.3 |
| Display device | 59.1 |
| Room | 55.6 |
| Photo caption | 54.9 |
| Photographic paper | 51.1 |
| Street | 50.4 |

Microsoft

created on 2022-01-08

| Tag | Confidence |
| --- | --- |
| text | 99 |
| hat | 98.8 |
| person | 95.4 |
| fashion accessory | 91.4 |
| fedora | 91 |
| man | 87.2 |
| clothing | 84.3 |
| cowboy hat | 80.2 |
| white | 61.5 |
| old | 40 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 26-36 |
| Gender | Female, 97.5% |
| Calm | 90.8% |
| Sad | 4.5% |
| Happy | 1.6% |
| Confused | 1% |
| Disgusted | 0.8% |
| Surprised | 0.6% |
| Fear | 0.4% |
| Angry | 0.3% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 31-41 |
| Gender | Male, 99.9% |
| Calm | 53.1% |
| Sad | 38.5% |
| Fear | 3% |
| Disgusted | 2.5% |
| Angry | 1.6% |
| Confused | 0.5% |
| Surprised | 0.4% |
| Happy | 0.3% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 19-27 |
| Gender | Male, 98% |
| Calm | 93.9% |
| Confused | 4.7% |
| Angry | 0.5% |
| Surprised | 0.3% |
| Sad | 0.2% |
| Fear | 0.1% |
| Disgusted | 0.1% |
| Happy | 0% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 38-46 |
| Gender | Male, 99.3% |
| Calm | 97.5% |
| Happy | 1% |
| Sad | 1% |
| Surprised | 0.2% |
| Angry | 0.1% |
| Fear | 0.1% |
| Disgusted | 0.1% |
| Confused | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 28-38 |
| Gender | Male, 99.8% |
| Calm | 92.3% |
| Sad | 3.5% |
| Angry | 1.4% |
| Confused | 1.2% |
| Surprised | 0.7% |
| Fear | 0.5% |
| Happy | 0.3% |
| Disgusted | 0.2% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-33 |
| Gender | Female, 63% |
| Angry | 58.6% |
| Calm | 18.5% |
| Sad | 8.7% |
| Happy | 4.8% |
| Fear | 3.6% |
| Surprised | 3.5% |
| Disgusted | 1.4% |
| Confused | 1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 20-28 |
| Gender | Male, 69.4% |
| Angry | 62.5% |
| Surprised | 15.3% |
| Happy | 9.9% |
| Calm | 3.7% |
| Sad | 3.4% |
| Fear | 2.4% |
| Disgusted | 1.6% |
| Confused | 1.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 14-22 |
| Gender | Male, 50.1% |
| Sad | 43.7% |
| Angry | 26.4% |
| Calm | 18.4% |
| Fear | 4.8% |
| Happy | 3.2% |
| Disgusted | 1.9% |
| Surprised | 0.9% |
| Confused | 0.6% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 21-29 |
| Gender | Female, 82% |
| Calm | 71.7% |
| Angry | 24% |
| Surprised | 1.5% |
| Disgusted | 0.7% |
| Sad | 0.6% |
| Confused | 0.6% |
| Happy | 0.5% |
| Fear | 0.4% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 59.7% |
| interior objects | 21.2% |
| cars vehicles | 9.2% |
| food drinks | 7.3% |
| text visuals | 1.2% |

Captions

Microsoft

created by unknown on 2022-01-08

| Caption | Confidence |
| --- | --- |
| a group of people standing in front of a store | 78.1% |
| an old photo of a man | 78% |
| a group of people in front of a store | 76% |

Google Gemini

Created by gemini-2.0-flash on 2025-05-16

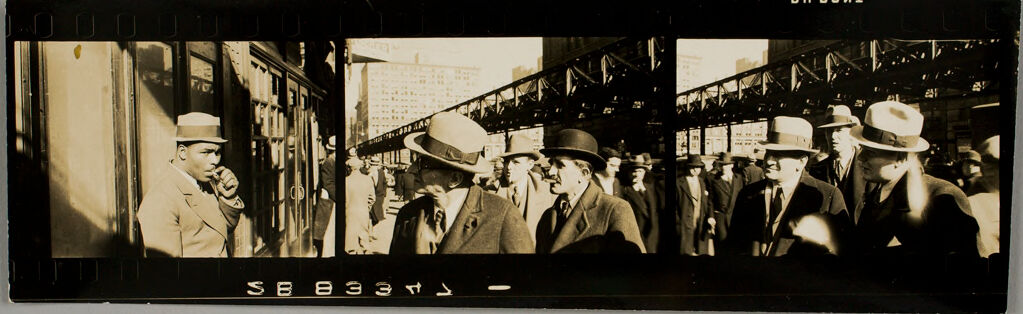

The image appears to be a strip of film containing three frames. Each frame depicts a scene of people in what looks like a city setting, possibly an older time period based on the clothing and hats.

Frame 1: Shows a man in a suit and hat standing by what looks like a storefront. He is holding something to his mouth, possibly smoking. The scene has strong shadows.

Frame 2: Shows a group of people, mostly men, wearing suits and hats. They appear to be walking in a crowded street. In the background, there are buildings and what looks like an elevated train track or structure.

Frame 3: Similar to the second frame, showing a continuation of the same scene with people walking on the street under an elevated structure with buildings in the background.

The overall tone of the image is reminiscent of a street scene from the mid-20th century.

Created by gemini-2.0-flash-lite on 2025-05-16

The image is a vintage, black and white photograph, likely from the mid-20th century. It's presented as a film strip, with the sprocket holes visible along the top and bottom edges. The main subject of the photograph is a scene of people on a city street, likely in a downtown area.

Here's a breakdown:

- Composition: The image is divided into a few sections due to the nature of the film strip. The sections give a sense of the environment and movement, capturing a glimpse of everyday life.

- Subject Matter: The focus is primarily on the people present, mostly men, who appear to be walking in the street or on a platform. Some are wearing suits and hats, indicative of the era. The overall scene suggests a busy urban environment.

- Atmosphere: The black and white style and the nature of the photograph itself lend it a nostalgic, timeless quality. There's a sense of observation and capturing a moment in time.

- Details: The frames include a person smoking a cigarette. The architecture of the buildings and the structure of the elevated train tracks in the background provide a sense of the city's urban landscape.

Text analysis

Amazon