Machine Generated Data

Color Analysis

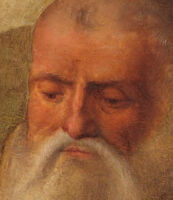

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 19-27 |
| Gender | Female, 98.1% |
| Calm | 94.5% |
| Surprised | 6.4% |
| Fear | 6.1% |
| Sad | 2.8% |
| Confused | 1.8% |
| Angry | 0.3% |
| Disgusted | 0.3% |
| Happy | 0.3% |

Feature analysis

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.1% |

Categories

Imagga

created on 2018-08-20

| Category | Confidence |
| --- | --- |
| people portraits | 61.9% |
| events parties | 37.6% |
| streetview architecture | 0.2% |
| pets animals | 0.1% |
| paintings art | 0.1% |

Captions

Microsoft

created by unknown on 2018-08-20

| Caption | Confidence |
| --- | --- |
| a group of people posing for the camera | 93.9% |
| a group of people posing for a picture | 93.7% |
| a group of people posing for a photo | 91% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-04-28

a photograph of a painting of a painting of a woman holding a baby

Created by general-english-image-caption-blip-2 on 2025-06-27

the madonna and child with the saints

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-29

This image depicts a religious scene, likely a Renaissance painting, featuring a group of individuals gathered around a central female figure holding a child. The background includes a rural landscape with trees, buildings, and a winding path. The central figure is positioned against a richly decorated tapestry. The other figures are dressed in a mix of classical and religious attire, with some holding objects such as books or branches. The composition and style suggest a focus on significant religious or historical themes.

Created by gpt-4 on 2024-01-26

This is a Renaissance painting featuring a central figure draped in blue and red garments, holding a child on their lap. To the left and right of the central figure are characters dressed in period clothing, and in the background, there are serene landscape scenes depicting rolling hills, trees, structures, and a clear sky. The middle panel showcases a patterned background, while the outer panels display outdoor settings that contribute to the painting's tranquil and bucolic atmosphere.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-25

This appears to be a Renaissance-era religious painting depicting a group of figures in a landscape setting. The composition shows several individuals wearing clothing typical of the period, including robes in rich colors like red, blue, and white. The background features a pastoral scene with trees, buildings, and a decorative gold-patterned element on the left side. The painting includes some infants or cherubs, and the overall style and composition suggests this is likely a devotional or religious work from around the 15th or 16th century. The figures are arranged in a tight group, creating an intimate scene characteristic of Renaissance religious art. The painting demonstrates the period's mastery of perspective, color, and detailed rendering of faces and fabric.

Created by claude-3-haiku-48k-20240307 on 2024-03-29

The image depicts a religious scene featuring the Virgin Mary holding the infant Jesus, surrounded by several other figures. In the center, the Virgin Mary is seated, wearing a red robe and white veil, holding the baby Jesus on her lap. She is flanked by two male figures, one elderly and one younger, who appear to be Saint Joseph and another male saint. In the foreground, there are several other figures, including a young man with long hair and a halo, possibly representing one of the apostles, and two women, one with a white headdress and one with long blonde hair. The background features a detailed, ornate tapestry or textile hanging, as well as a pastoral landscape with buildings in the distance. The overall composition and style suggest this is a Renaissance-era religious painting.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-23

The image is a painting of the Madonna and Child, surrounded by various saints and figures. The central figure is the Madonna, who is depicted holding the infant Jesus in her arms. She is dressed in a red robe with a white veil covering her head, and her gaze is directed downward toward the child.

To the left of the Madonna is a group of male figures, including Saint John the Baptist, who is depicted as a young man with long hair and a loincloth. He is holding a staff in his right hand and has a gentle expression on his face. Next to him is another male figure, who is dressed in a green robe and appears to be a saint or apostle.

On the right side of the painting, there are several female figures, including Saint Catherine of Alexandria, who is depicted as a young woman with a crown on her head and a sword in her hand. She is standing next to an older man with a long white beard, who may be Saint Joseph or another saint.

In the background of the painting, there are several buildings and trees, which suggest a rural or countryside setting. The sky above is blue with a few clouds, adding to the serene and peaceful atmosphere of the scene.

Overall, the painting appears to be a devotional work, intended to honor the Virgin Mary and the saints depicted in the scene. The use of rich colors and detailed imagery suggests that the artist was skilled and experienced in their craft.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-23

The image is a painting of the Virgin Mary holding the infant Jesus, surrounded by several figures. The Virgin Mary is depicted wearing a red dress with a white head covering and blue skirt. She is holding the infant Jesus in her lap. The infant Jesus is naked and has a white cloth wrapped around his waist.

To the left of the Virgin Mary is Saint John the Baptist, who is depicted as a young man with long hair and a green robe. He is holding a staff in his right hand. To the right of the Virgin Mary is Saint Joseph, who is depicted as an older man with a long white beard and a brown robe. He is holding a staff in his right hand.

In front of the Virgin Mary are two other figures. One is a woman with a white head covering and a black dress, who is holding a book in her left hand. The other is a man with a white head covering and a black robe, who is holding a staff in his right hand.

In the background of the painting are several buildings and trees. The sky is blue with white clouds.

The overall atmosphere of the painting is one of serenity and reverence. The Virgin Mary and the infant Jesus are depicted in a peaceful and calm manner, surrounded by the other figures who are looking at them with devotion and admiration. The painting is likely a depiction of the Holy Family and their followers, and it conveys a sense of unity and harmony among the figures.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-04

The painting depicts the Holy Family with Sts John the Baptist, Elizabeth, Joseph, and Catherine of Alexandria. The painting was executed for private devotion. The Virgin and Child are in the centre, with the other saints on either side. The donor, a woman in a black dress, is on the right, while St John the Baptist is on the left. The landscape in the background is painted in a style similar to that of the Flemish masters.

Created by amazon.nova-pro-v1:0 on 2025-01-04

The painting depicts a group of people, including a woman holding a baby, who are probably saints. They are gathered in front of a green curtain with gold floral designs. On the left side of the painting, there is a tree and a man in a robe. On the right side, there is a man with a beard and a woman with a feather in her hair. Behind them, there are houses and trees.

Google Gemini

Created by gemini-2.0-flash on 2025-04-26

The image is a Renaissance-style oil painting featuring the Madonna and Child surrounded by various figures, set against a detailed landscape backdrop.

In the center, the Madonna, dressed in red and blue robes with a white veil, holds the infant Jesus, who is nude with red hair. Her gaze is directed downwards, conveying a sense of solemnity. Behind the Madonna is a brocaded tapestry with an intricate pattern of floral motifs in gold on a dark background.

To the left of the Madonna, a bare-chested male figure with red curly hair stands draped in a white cloth. Next to him is a man in a light-colored monastic robe, looking down with an introspective expression. Another male figure with a beard and green robe stands next to the robed man. In front of them, a balding man in a black robe looks up with his hands clasped in prayer.

To the right of the Madonna, an elderly man with a long white beard holds a book and looks towards the Madonna and Child. Beside him is a woman in a red veil, looking down with a serene expression. Next to her, a man in a grey monastic robe holds a palm frond. In front of the group on the right, a woman with blonde hair looks to the side with a calm expression.

The background consists of a pastoral landscape with rolling hills, trees, and buildings, creating depth and context to the scene. The sky is a muted blue with hints of clouds. The painting is executed in a style that emphasizes realism and detailed rendering of the figures and their clothing. The overall tone of the painting is one of reverence and serenity, typical of Renaissance religious art.

Created by gemini-2.0-flash-lite on 2025-04-26

The image is a Renaissance-era religious painting depicting the Madonna and Child surrounded by saints and other figures. Here's a breakdown of the scene:

- Central Figures: The Virgin Mary, seated and wearing a blue robe with a red undershirt and a white headdress, cradles the infant Jesus. The baby is nude, as is common in this era's depictions, and has curly red hair.

- Surrounding Figures:

  - To the left, there is Saint John the Baptist, partially nude, with long curly hair and an accompanying figure.

  - On Mary's right side there's an old man with a long white beard, likely St. Joseph. There are multiple female saints as well as a monk holding a lily and a book.

  - A man dressed in dark garments kneels at the front of the painting, looking up at Mary, with another woman nearby.

- Setting and Background: A detailed background with rolling hills, a few buildings in the distance, and a decorative tapestry behind Mary and the child.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-04-25

The image depicts a religious painting, likely from the Renaissance period, characterized by its detailed and realistic portrayal of figures and landscape. Here's a detailed summary:

Central Figures:

- Mary and Jesus: At the center of the painting, the Virgin Mary is seated with the infant Jesus on her lap. Mary is dressed in a red robe with a blue cloak, while Jesus is depicted as a cherubic baby.

- Joseph: To the right of Mary, an older man, likely Saint Joseph, is portrayed with a beard and holding a staff.

Surrounding Saints:

- Saint Sebastian: On the far left, a young man with a bare torso and a white cloth wrapped around his waist is depicted. He is Saint Sebastian, identifiable by the arrows in his body, symbolizing his martyrdom.

- Saint Anthony Abbot: Next to Saint Sebastian, a man with a tonsure (a shaved head) and wearing a brown robe is holding a T-shaped staff, indicating Saint Anthony Abbot.

- Saint John the Baptist: Next to Saint Anthony, a bearded man in green and red robes, likely Saint John the Baptist, is depicted.

- Saint Catherine of Alexandria: In the foreground, a woman with a crown and a red dress, holding a palm frond, represents Saint Catherine of Alexandria.

- Saint Francis of Assisi: On the far right, a man in a gray robe with a tonsure and holding a book and a palm frond is Saint Francis of Assisi.

- Unidentified Female Saint: Behind Saint Francis, a woman in a red dress and white headdress is present, possibly another saint or a donor figure.

Background:

- The background features a serene landscape with rolling hills, trees, and a few buildings, suggesting a rural setting. The sky is clear with a few clouds, adding to the tranquil atmosphere.

Artistic Style:

- The painting exhibits characteristics of Renaissance art, including realistic depictions of the human form, detailed clothing, and a sense of depth and perspective in the landscape.

This painting is a sacred conversation (sacra conversazione), a type of devotional painting popular during the Renaissance, where the Virgin and Child are depicted with a group of saints.

Qwen

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-06-24

This image is a Renaissance-style religious painting depicting a group of figures, likely saints and biblical characters, surrounding the Virgin Mary and the Christ Child. The central figure is a woman dressed in a red tunic and blue robe, holding a baby in her lap, who is likely the Virgin Mary and the Christ Child, respectively. The background is divided into two sections, each showing a landscape with buildings and trees, separated by an ornate tapestry.

Surrounding the central figures are several other characters in various states of dress and holding different objects, possibly symbolizing their roles or attributes. The figures include a man with a cross, a man with a book and a branch, a man with a staff, and others in robes or draped garments. The painting is rich in detail, with careful attention to the clothing, expressions, and objects held by the figures. The overall composition and style suggest it is a work from the Renaissance period, characterized by its use of perspective, realistic depiction of figures, and symbolic elements.

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-06-24

This image is a Renaissance painting depicting a religious scene. The central figure is a woman dressed in a red garment with a blue cloak, likely representing the Virgin Mary, holding a baby, who is likely the infant Jesus. Surrounding her are several figures, including a man with long curly hair, a bearded man, a figure in monk's robes, and a woman with a red cloth on her head. In the background, there is a landscape with trees, buildings, and a distant mountain. The painting features detailed rendering of clothing, facial expressions, and a serene atmosphere, typical of Renaissance art. The central figure is flanked by two panels with ornate curtains, adding to the grandeur of the scene.