Machine Generated Data

Tags

Amazon

created on 2021-12-14

| Tag | Confidence (%) |
| --- | --- |
| Person | 99.4 |
| Human | 99.4 |
| Person | 97.5 |
| Person | 97.3 |
| Person | 95.5 |
| Nature | 95.2 |
| Outdoors | 93.6 |
| Person | 89.8 |
| Person | 87.1 |
| Person | 84.8 |
| Person | 82.4 |
| Countryside | 69.5 |
| Painting | 63.9 |
| Art | 63.9 |
| Building | 62.5 |
| Person | 60.9 |
| People | 60.8 |
| Porch | 58.3 |
| Overcoat | 57.7 |
| Clothing | 57.7 |
| Coat | 57.7 |
| Apparel | 57.7 |
| Shack | 57.7 |
| Rural | 57.7 |
| Hut | 57.7 |

Clarifai

created on 2023-10-15

| Tag | Confidence (%) |
| --- | --- |
| people | 100 |
| group | 99.3 |
| adult | 98.9 |
| group together | 97.9 |
| man | 97.8 |
| street | 97.4 |
| woman | 96.9 |
| monochrome | 96.6 |
| child | 96.1 |
| many | 95.7 |
| boy | 91.7 |
| administration | 90.2 |
| wear | 89.9 |
| transportation system | 89 |
| several | 88.5 |
| portrait | 87.3 |
| war | 86.7 |
| music | 85.8 |
| two | 85.1 |
| three | 83.9 |

Imagga

created on 2021-12-14

| Tag | Confidence (%) |
| --- | --- |
| city | 26.6 |
| people | 25.1 |
| urban | 21.8 |
| building | 20.7 |
| architecture | 19.6 |
| travel | 19 |
| business | 18.2 |
| window | 17.6 |
| street | 17.5 |
| man | 16.1 |
| wall | 15.4 |
| hall | 15.1 |
| men | 13.7 |
| gate | 13.4 |
| walk | 13.3 |
| modern | 13.3 |
| walking | 13.3 |
| outdoors | 12.7 |
| clothing | 12.6 |
| crowd | 12.5 |
| adult | 12.1 |
| life | 11.9 |
| women | 11.9 |
| stone | 11.8 |
| transportation | 11.7 |
| black | 11.6 |
| silhouette | 11.6 |
| interior | 11.5 |
| old | 11.1 |
| fur coat | 10.9 |
| airport | 10.7 |
| tourism | 10.7 |
| coat | 10.5 |
| couple | 10.4 |
| standing | 10.4 |
| construction | 10.3 |
| glass | 10.1 |
| house | 10.1 |
| person | 9.9 |
| fashion | 9.8 |
| station | 9.7 |
| portrait | 9.1 |
| steel | 9 |
| light | 8.7 |
| garment | 8.5 |
| clothes | 8.4 |
| sidewalk | 8.3 |
| inside | 8.3 |
| transport | 8.2 |
| world | 8.2 |
| bag | 7.8 |
| motion | 7.7 |
| culture | 7.7 |
| journey | 7.5 |
| tourist | 7.5 |
| wood | 7.5 |
| floor | 7.4 |
| office | 7.4 |
| vacation | 7.4 |
| shopping | 7.3 |
| reflection | 7.3 |
| indoor | 7.3 |
| group | 7.3 |
| dress | 7.2 |
| activity | 7.2 |
| male | 7.1 |
| indoors | 7 |

Google

created on 2021-12-14

| Tag | Confidence (%) |
| --- | --- |
| Working animal | 75.7 |
| Wood | 74.3 |
| Vintage clothing | 71.2 |
| Fence | 67.9 |
| Art | 67.4 |
| History | 66.1 |
| Door | 65.1 |
| Stock photography | 63.3 |
| Suit | 63 |
| Rectangle | 61.7 |
| Room | 59.4 |
| Monochrome | 57.4 |
| Pack animal | 56.8 |
| Visual arts | 55.6 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 25-39 |
| Gender | Female, 87.5% |
| Sad | 98.5% |
| Fear | 0.7% |
| Calm | 0.6% |
| Confused | 0.1% |
| Angry | 0% |
| Happy | 0% |
| Surprised | 0% |
| Disgusted | 0% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 33-49 |
| Gender | Male, 99.8% |
| Angry | 59% |
| Calm | 31.2% |
| Sad | 4.4% |
| Disgusted | 2.1% |
| Confused | 1.9% |
| Surprised | 0.7% |
| Happy | 0.5% |
| Fear | 0.2% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 23-35 |
| Gender | Male, 65.1% |
| Calm | 91.3% |
| Sad | 7.5% |
| Angry | 0.4% |
| Fear | 0.3% |
| Happy | 0.3% |
| Disgusted | 0% |
| Surprised | 0% |
| Confused | 0% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 22-34 |
| Gender | Female, 52.6% |
| Sad | 87.7% |
| Calm | 12% |
| Fear | 0.1% |
| Confused | 0.1% |
| Angry | 0.1% |
| Happy | 0.1% |
| Disgusted | 0% |
| Surprised | 0% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 32-48 |
| Gender | Male, 97% |
| Calm | 98.1% |
| Sad | 1.7% |
| Angry | 0.1% |
| Fear | 0% |
| Happy | 0% |
| Surprised | 0% |
| Disgusted | 0% |
| Confused | 0% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 28-44 |
| Gender | Male, 96.4% |
| Sad | 54.6% |
| Calm | 26.9% |
| Happy | 5.9% |
| Fear | 5.8% |
| Angry | 4% |
| Surprised | 1.5% |
| Confused | 1.1% |
| Disgusted | 0.1% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 46-64 |
| Gender | Male, 94.2% |
| Fear | 34.9% |
| Calm | 29% |
| Sad | 18.9% |
| Angry | 14% |
| Confused | 1.4% |
| Happy | 0.9% |
| Surprised | 0.7% |
| Disgusted | 0.3% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 49-67 |
| Gender | Male, 91.5% |
| Calm | 95.4% |
| Disgusted | 1.7% |
| Sad | 1% |
| Angry | 0.6% |
| Happy | 0.5% |
| Confused | 0.3% |
| Surprised | 0.2% |
| Fear | 0.1% |

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 28-44 |
| Gender | Male, 67.7% |
| Calm | 53.3% |
| Happy | 24.1% |
| Sad | 18.1% |
| Fear | 1.6% |
| Angry | 1.3% |
| Confused | 0.7% |
| Surprised | 0.7% |
| Disgusted | 0.2% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 94.7% |
| streetview architecture | 2% |
| nature landscape | 1.5% |
| pets animals | 1.5% |

Captions

Microsoft

created on 2021-12-14

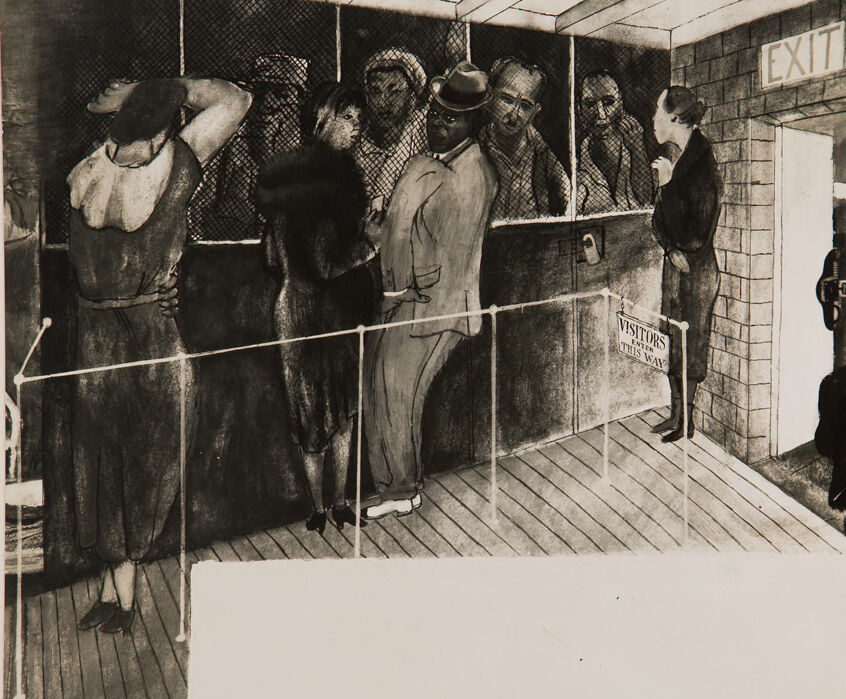

| Caption | Confidence |
| --- | --- |
| a group of people standing in a cage | 93.1% |
| a group of people in a cage | 93% |
| a group of people standing outside of a cage | 92.1% |

Text analysis

Amazon

EXIT

VISITORS

WAY

THIS WAY

ENTER

THIS

EXIT

VISITORS

THIS WAY

EXIT

VISITORS

THIS

WAY