Machine Generated Data

Tags

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 1-5 |
| Gender | Female, 91% |
| Disgusted | 0.7% |
| Angry | 0.4% |
| Confused | 0.3% |
| Sad | 0.8% |
| Happy | 2.6% |
| Calm | 94.3% |
| Surprised | 0.8% |

Feature analysis

Amazon

Clarifai

AWS Rekognition

| Feature | Confidence |
|---|---|
| Person | 93.5% |

Categories

Imagga

Created on 2018-03-24

| Category | Confidence |
|---|---|
| paintings art | 71.2% |
| people portraits | 27.6% |
| events parties | 0.6% |
| pets animals | 0.3% |
| food drinks | 0.2% |

Captions

Microsoft

Created by unknown on 2018-03-24

| Caption | Confidence |
|---|---|
| a group of people sitting on a bed | 44.8% |
| a group of people sitting in a chair | 44.7% |
| a group of people riding on the back of a motorcycle | 22.4% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-05-11

a photograph of a group of people standing around a dog

Created by general-english-image-caption-blip-2 on 2025-06-28

a black and white photograph of people on a train

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-06

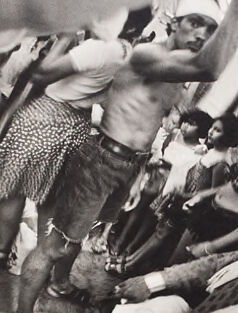

This black-and-white photograph captures a crowded subway scene, with several individuals seated and standing in the frame. The perspective is dynamic and slightly distorted, adding a feeling of movement and chaos. At the center, a shirtless man wearing shorts and glasses holds onto an overhead bar, appearing strained or animated, with his pose drawing attention.

Below, a young child sleeps on an adult's lap amidst the crammed seating area, conveying a sense of exhaustion or intimacy. Other passengers—including men, women, and children—populate the scene, each engaged in their own moments, some gazing out or interacting with one another. The details, such as patterned clothing and hairstyles, evoke a specific time period or urban environment. Overall, the photo presents a lively yet gritty snapshot of everyday life in a public setting.

Created by gpt-4o-2024-08-06 on 2025-06-06

The image depicts a crowded scene inside a subway car. The composition is vertically elongated, capturing the interior from floor to ceiling as it is filled with passengers. In the foreground, a person is sitting with a sleeping child resting on their lap; the child is wearing light-colored clothing. Beside them are more seated passengers engaged in various activities, including talking and looking around. Standing near the center of the image is a man dressed in a sleeveless shirt, patterned shorts, and sunglasses, holding onto the overhead strap for support. The subway car appears to be a vintage model, with ads visible above the seated passengers. This high-contrast black-and-white photograph shows the diverse array of people traveling together, highlighting the sense of motion and cramped space typical of public transit.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-24

This appears to be a black and white photograph taken in what looks like a crowded subway car or train. The image has a candid, documentary-style quality to it, showing several people in casual summer attire including shorts and sleeveless tops. Some individuals appear to be reclining or lounging in the space, while others are standing. The photo has an interesting composition with bodies arranged at various angles, creating a dynamic visual effect. The style and clothing suggest this may be from sometime in the mid-20th century. The cramped, informal nature of the scene gives it an authentic, slice-of-life quality that captures a moment of urban public transportation.

Created by claude-3-haiku-20240307 on 2024-12-31

The image appears to depict a crowded, chaotic scene with multiple people interacting in an intimate, physical way. The image has a black and white, gritty aesthetic, conveying a sense of rawness and intensity. Without identifying any individuals, I can describe the various elements I see - there are limbs, torsos, and bodies tangled together, with some people appearing to be in a state of undress or distress. The overall impression is one of a passionate, almost frenzied experience unfolding within the confined space shown in the photograph.

Created by claude-3-opus-20240229 on 2024-12-31

The black and white image shows a crowded scene inside what appears to be a subway car or train. Multiple people are standing closely packed together, with some holding onto handrails or poles for support. The passengers seem to be a mix of men and women dressed in casual attire. The tight, chaotic composition conveys a sense of the cramped and hectic nature of public transportation during rush hour or peak travel times in a busy urban setting.

Created by claude-3-5-sonnet-20241022 on 2024-12-31

This is a black and white photograph that appears to be taken inside a subway car or train. The image shows several people in casual summer attire, including shorts and sleeveless tops. The photograph has an interesting perspective, capturing people in various relaxed or slumped positions, creating a somewhat chaotic but intimate scene of urban life. Some people appear to be sitting while others are standing or leaning, and there's a sense of crowding that's typical of public transportation. The image has a documentary-style quality to it, likely from the mid-to-late 20th century, and captures a moment of everyday city life in what seems to be warm weather.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-29

The image depicts a black-and-white photograph of a crowded subway car, with people sitting and standing in various poses. The scene is set in a subway car, with the walls and windows visible in the background. The overall atmosphere of the image is one of chaos and disarray, with people moving in different directions and some appearing to be in a state of distress or discomfort.

The image is likely intended to convey a sense of the challenges and difficulties of commuting in a crowded urban environment. It may also be seen as a commentary on the human experience, highlighting the ways in which people interact with each other in public spaces.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-29

The image is a black-and-white photograph of a crowded train car, with people packed tightly together. The photo is taken from an overhead perspective, looking down on the passengers.

In the foreground, several people are visible, including a man wearing a white shirt and shorts, who appears to be standing up and holding onto something above him. Another person is sitting on the floor, with their legs stretched out in front of them. There are also several other people visible in the background, some of whom are standing up and others who are sitting down.

The overall atmosphere of the image suggests a sense of chaos and disorder, as the passengers are packed tightly together and appear to be struggling to find space. The use of black and white photography adds to the sense of grittiness and realism, giving the image a documentary feel.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-04

A black-and-white photograph shows a crowded subway car, with several people standing and sitting on the floor. One person is standing on a seat, holding onto the handrail, and appears to be reaching for something. Another person is lying on the floor, and a few people are sitting on the floor. The image captures a moment of discomfort and overcrowding in a public transportation setting.

Created by amazon.nova-pro-v1:0 on 2025-06-04

The black-and-white photo depicts a group of people in a crowded space. Some are standing, while others are lying on the floor. The man in the middle is holding a newspaper in his right hand, and his left hand is raised. The man lying on the floor is wearing a hat. Some people are wearing bracelets, necklaces, and shoes. There are also some people wearing glasses.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-10

Here's a description of the image:

Overall Impression:

The image is a black and white photograph, likely taken on a subway car. It presents a crowded and chaotic scene. The composition is vertically oriented and filled with people, giving a sense of the cramped environment.

Key Elements and Details:

- People: The photograph is dominated by figures of people, suggesting a busy transit situation. A shirtless man with a bandage on his head is at the top, possibly grabbing a support bar. The focus then drops to several other adults and children in a somewhat chaotic arrangement.

- Environment: The visible elements indicate a subway car interior, with the metal support bar and a glimpse of a window frame with advertisements on it. The general sense of claustrophobia is emphasized by the confined space of the car.

- Activity: The individuals are caught in a moment of movement, which gives a feeling of the busyness of city dwellers' daily lives.

- Emotional Tone: The image seems to convey a sense of social realism. It captures a moment of urban life, with both tension and possibly a hint of resilience in the presence of the crowd.

- Photographic Style: The image is in black and white, and has a good depth of field. The composition looks natural and possibly captures a candid moment.

In summary, the photograph shows a crowded subway car, where different people are in close proximity. It is a snapshot of urban life and human interaction in the constraints of a public space.

Created by gemini-2.0-flash on 2025-05-10

The black and white image appears to be a photograph taken inside a crowded subway car. The composition is somewhat chaotic, with various individuals positioned at different levels within the frame. At the top, a shirtless man with a bandage on his head reaches upward, possibly to hold onto a rail.

Several seated passengers are visible, including a woman with a patterned dress and other individuals wearing casual clothing. In the center, a person with a beard and a patterned hat seems to be reclining. Further down, there's a child lying down and being held by someone. At the bottom of the image, various sets of feet and legs suggest a densely packed environment. The image has a grainy texture, which adds to the sense of immediacy and realism. Overall, the photo depicts a slice of life within a busy urban transportation setting.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

This black and white photograph captures a crowded scene inside what appears to be a bus or a public transport vehicle. The image is taken from a high angle, looking down on the passengers.

The passengers are densely packed, with many of them standing due to the lack of available seating. The individuals are dressed in various styles of clothing, and some are wearing head coverings. The expressions and postures of the people vary, with some looking upwards, possibly towards the camera, while others appear to be engaged in conversation or looking in different directions.

The interior of the vehicle is visible, including the overhead handrails and some advertisements or signs on the ceiling. The overall atmosphere conveys a sense of close proximity and the bustling nature of public transportation.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-18

This black-and-white photograph captures a crowded and intense scene inside a transport vehicle, possibly a bus or a train. The image is tightly packed with people, some of whom appear to be wearing traditional clothing, suggesting a cultural or social gathering. The individuals are closely huddled together, with some standing, some sitting on the floor, and some lying down. The setting appears to be crowded, with many people leaning against each other. The overall mood of the photograph conveys a sense of density and intimacy, with the subjects in close proximity to one another, creating a feeling of communal or shared experience. The black-and-white format emphasizes the contrast and texture of the clothing and skin tones.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-18

This black-and-white photograph captures a crowded scene inside a public transportation vehicle, likely a train or bus. The image is densely packed with people, many of whom appear to be in casual or summer attire. Some individuals are sitting, while others are standing, with their arms raised, possibly holding onto handrails or poles for balance. The expressions and postures suggest a sense of movement and perhaps discomfort due to the crowded conditions. The photograph has a dynamic and somewhat chaotic feel, emphasizing the close quarters and the interactions between the passengers. The image is framed with a white border, and there is a signature or text in the bottom right corner, though it is not clearly legible.