Machine Generated Data

Tags

Color Analysis

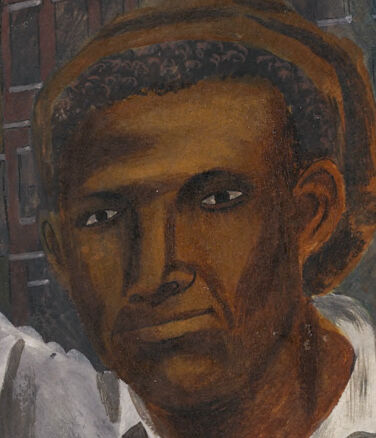

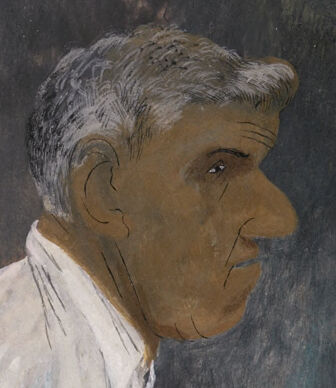

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 35-52 |
| Gender | Male, 98.3% |
| Confused | 4.6% |
| Sad | 8.3% |
| Disgusted | 0.6% |
| Happy | 2.3% |
| Angry | 10.7% |
| Surprised | 1.7% |
| Calm | 71.9% |

Feature analysis

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99.6% |

Categories

Imagga

Created on 2018-02-09

| Category | Confidence |
| --- | --- |
| events parties | 86.6% |
| people portraits | 12.6% |
| streetview architecture | 0.2% |
| pets animals | 0.2% |
| paintings art | 0.2% |
| text visuals | 0.1% |
| food drinks | 0.1% |

Captions

Microsoft

Created by unknown on 2018-02-09

| Caption | Confidence |
| --- | --- |
| a man standing next to a painting | 78.8% |
| a man standing next to a painting on the wall | 70.6% |
| a man standing next to a painting on a wall | 69.9% |

Clarifai

No captions written

Salesforce

Created by general-english-image-caption-blip on 2025-04-28

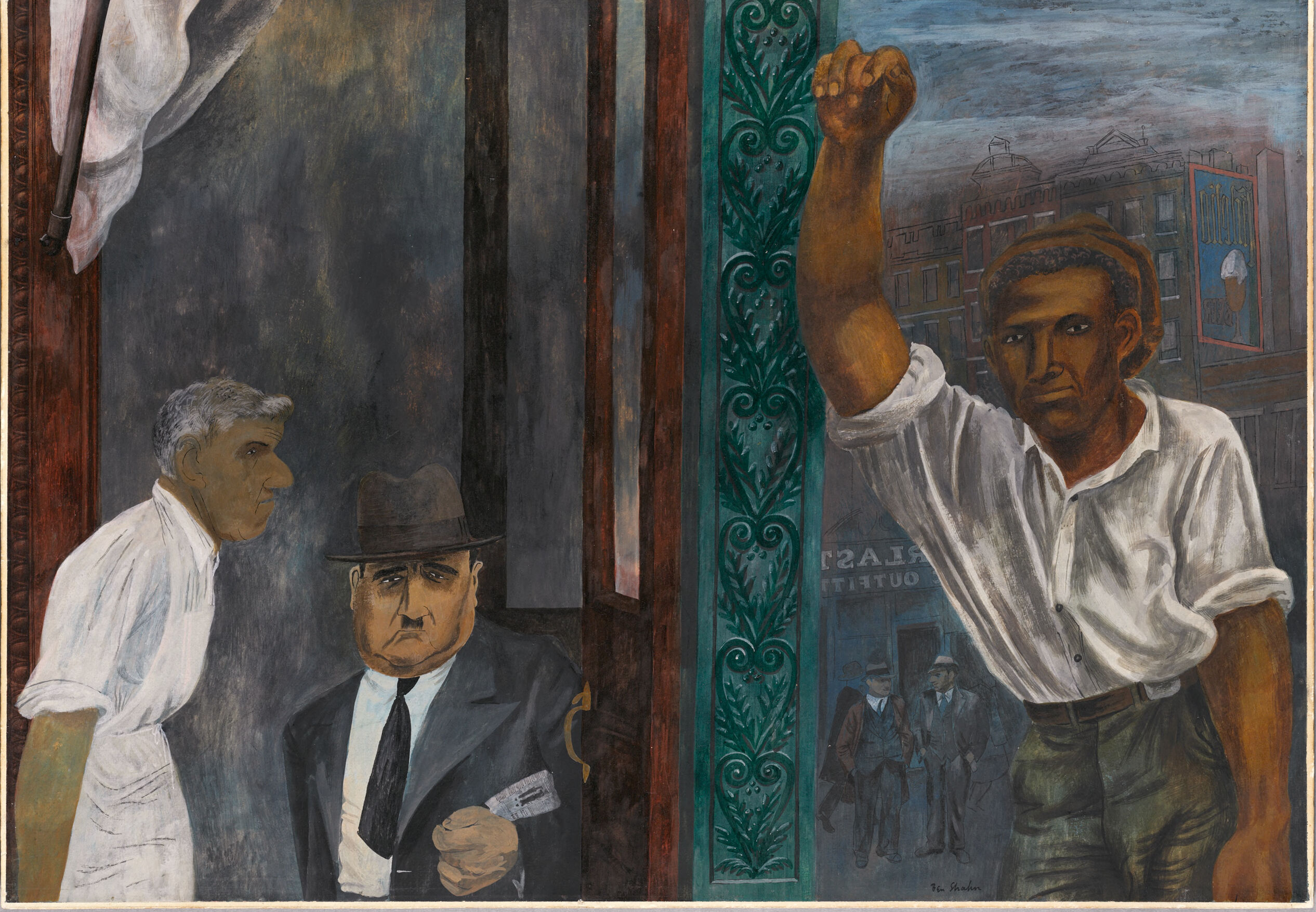

a photograph of a painting of a man in a suit and tie

Created by general-english-image-caption-blip-2 on 2025-06-27

a painting of a man and a woman standing next to each other

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-29

The image depicts a scene with a richly colored and detailed mural. On the left, a person in a white apron seems to be conversing with another person dressed in a suit and hat. On the right, a figure in a white shirt and green pants raises their right arm in a gesture. The scene takes place against a backdrop containing architectural elements with intricate carvings and a street scene with buildings, posters, and pedestrians in the background. The overall composition suggests a moment of action or communication in an urban setting.

Created by gpt-4 on 2024-01-27

This image depicts a painting divided into multiple sections with varying scenes and perspectives. The left side of the painting shows an interior scene with an individual in a white shirt. On the right side of the painting, there's a prominent green pillar. Behind this centerpiece, an urban streetscape with buildings, signs, and individuals in the background is visible. The color palette is muted with grey, green, and earth tones dominating the scene. The style carries a sense of realism with attention to detail and shading that gives depth to the figures and structures. The overall composition merges the architectural elements with the human figures, suggesting a narrative or social commentary.

Anthropic Claude

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-27

This appears to be a painting depicting an urban scene with several figures. The artwork has a dark, moody atmosphere with a predominantly gray color palette. In the composition, there are figures wearing white shirts and one person in a dark suit and hat. The painting features decorative green architectural elements on the side, possibly part of a building's facade. The background suggests a city setting with buildings visible in the distance. The style appears to be from the early-to-mid 20th century, with an expressionistic quality to the brushwork and composition. The overall tone of the piece seems to convey a sense of tension or unease through its somber colors and the positioning of the figures.

Created by claude-3-haiku-48k-20240307 on 2024-03-29

The image depicts a complex scene with multiple figures and elements. There appears to be two older men in suits, one with a serious expression and the other with a more pensive look. Behind them, there is a younger man with a clenched fist raised, suggesting some kind of confrontation or protest. The setting seems to be an urban environment, with a storefront or building in the background. The overall mood of the painting is one of tension and unrest.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

This image is a painting of three men in different settings. The man on the left is wearing a white shirt and appears to be a worker or laborer. He is standing in front of a dark background, possibly a factory or workshop. The man in the middle is dressed in a suit and tie, and is holding a newspaper. He is standing in front of a city street scene, with buildings and people in the background. The man on the right is wearing a white shirt and has his fist raised in the air. He is standing in front of a city street scene, with buildings and people in the background.

The painting appears to be a commentary on the struggles of the working class, with the three men representing different aspects of labor and industry. The use of muted colors and somber expressions adds to the overall sense of seriousness and social commentary.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-23

The image is a painting of three men in a room, with one man standing in the foreground and two men in the background. The man in the foreground is wearing a white shirt and has his right arm raised in a fist. He appears to be looking at the man in the background on the left, who is wearing a suit and hat and holding a newspaper. The man in the background on the right is wearing a white shirt and has his left arm raised, as if he is about to punch the man in the foreground.

The room has a dark and moody atmosphere, with shadows cast on the walls. The walls are adorned with ornate carvings, and there is a large window on the left side of the room that lets in natural light. The overall mood of the painting is one of tension and conflict, as the two men in the background seem to be engaged in a heated discussion or argument. The man in the foreground appears to be trying to intervene or mediate the situation.

The painting is signed by the artist, but the signature is not legible. The style of the painting is reminiscent of Expressionism, with bold brushstrokes and vivid colors used to convey emotion and mood. The use of chiaroscuro, a technique that uses strong contrasts between light and dark to create a sense of volume and depth, adds to the dramatic effect of the painting. Overall, the image is a powerful and thought-provoking work of art that invites the viewer to reflect on the themes of conflict and tension.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-01-04

The painting depicts three men in a room. The man on the left is wearing a white shirt and apron. The man in the middle is wearing a black suit, tie, and hat. The man on the right is wearing a white shirt, pants, and a belt. He is holding a white object in his right hand and raising his left hand. The man in the middle is looking at the man on the right.

Created by amazon.nova-pro-v1:0 on 2025-01-04

The painting depicts a scene with three men. The man on the left is wearing a white apron and looking at the man in the middle. The man in the middle is wearing a suit, a hat, and a tie. He is holding a piece of paper and looking at the man on the right. The man on the right is wearing a polo shirt and pants and is raising his hand.

Google Gemini

Created by gemini-2.0-flash on 2025-04-26

The painting depicts a scene seemingly divided into two distinct yet interconnected halves. On the left, two men are visible inside a building, likely a business or office. The first man, older with gray hair, is wearing a white shirt and has a pronounced nose. He appears to be looking intently, perhaps at the scene unfolding on the right side of the painting. The second man, dressed in a dark suit and fedora, is holding what appears to be money or a ticket. His expression is serious, almost stern.

To the right, a dark-skinned man stands with his fist raised in the air. He is wearing a white shirt and a cap, and his expression is determined, possibly defiant. Behind him, a street scene is visible, complete with buildings and other figures. The phrase "Tailors Outfitter" can be seen backward on a building in the background.

The two halves of the painting are separated by vertical lines, which may represent a doorway or window frame. These lines are painted in a muted color palette of browns, grays, and reds. On the right side, near the man with the raised fist, a column with decorative green detailing is visible. The overall mood of the painting seems to be one of tension and social commentary, possibly depicting a scene of labor unrest or social injustice.

Created by gemini-2.0-flash-lite on 2025-04-26

Here's a description of the image:

Overall Composition: The image is a painting that appears to depict a scene of social commentary or protest. The artwork is divided into two main areas by architectural elements suggesting a doorway or window frame. The style is somewhat stylized, with bold colors and expressive figures.

Left Side: On the left, there are two figures inside. One appears to be a man in a white shirt, looking at the second man who is wearing a suit and fedora. The man in the suit is holding a small object, possibly a document or card, and has a stern, imposing look.

Right Side: On the right, a man is shown with his arm raised in a fist. He is wearing a white shirt and appears to be looking directly at the viewer with a strong, determined expression. Behind him, a city street is visible with buildings and the blurred figures of other men. An "Outfitters" sign is visible on a building.

Key Details & Possible Interpretations:

- Dividing Line: The structure dividing the image could represent barriers, social divisions, or conflict.

- Expressions: The expressions on the figures' faces are important. They communicate suspicion, authority, and defiance.

- The Fist: The raised fist is a clear symbol of resistance, solidarity, or protest.

- Social Context: The painting likely conveys a message about class, power, or social injustice.

The artwork seems to be communicating a message about social tension or struggle, potentially involving issues of labor, inequality, or political conflict. The artist uses symbolism, composition, and figure expression to convey an emotional and thought-provoking message.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-04-25

The image is a painting that appears to depict a scene involving three men in what seems to be a tense or confrontational moment. Here is a detailed description:

Setting and Background:

- The setting appears to be an urban environment, possibly a street or alleyway. There are buildings in the background, and the sky is overcast, giving a somewhat somber atmosphere.

- A sign reading "EAST SIDE QUARTER" is visible on a building, suggesting a specific location or district.

Characters:

- Man on the Left: This man is wearing a white shirt and appears to be a worker, possibly a butcher, given the white apron he is wearing. He is looking towards the center of the painting with a concerned expression.

- Man in the Center: This man is dressed in a suit, tie, and hat, holding a card or piece of paper in his hand. He has a stern expression and is looking directly at the viewer, suggesting he might be an authority figure or someone of importance.

- Man on the Right: This man is wearing a white shirt with the sleeves rolled up and green pants. He has his right arm raised with a clenched fist, indicating a gesture of defiance or protest. His expression is serious and determined.

Composition:

- The painting is divided into three sections by vertical elements, possibly doorways or pillars, which separate the three figures.

- The central figure is framed by a dark background, making him stand out.

- The right section features an ornate green pillar with intricate designs, adding a decorative element to the scene.

Symbolism and Themes:

- The raised fist of the man on the right is a common symbol of resistance and solidarity, often associated with labor movements and protests.

- The contrast between the working-class attire of the men on the left and right and the formal attire of the central figure suggests a class or power dynamic.

- The overall mood of the painting is serious and tense, hinting at social or political issues.

The painting seems to convey a narrative about social justice, labor rights, or class struggle, using vivid imagery and symbolic gestures to emphasize its themes.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-06-25

This image is a painting titled "The Great Depression" by Ben Shahn, created in 1936. It is a powerful and detailed commentary on the social and economic impacts of the Great Depression in the United States.

The painting is divided into two main sections. On the left side, there are two men in a dimly lit room. One man, dressed in a suit and hat, is reading a newspaper, while the other, wearing a white shirt, appears to be looking at him. The room has a dark, somber atmosphere, symbolizing the gloom of the economic crisis.

On the right side, there is a man raising his fist in the air, which is a symbolic gesture of resistance or solidarity. The background on this side features a cityscape with tall buildings and a sign that reads "OUTLET." This part of the painting represents the struggle and resilience of individuals and communities during the Great Depression.

The painting uses a realistic style to convey the harsh realities of the time, with muted colors and detailed brushstrokes that emphasize the emotional weight of the scene. The figure with the raised fist stands out as a beacon of hope and defiance amidst the despair depicted in the left side of the painting.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-06-25

This is a painting divided into two main sections. On the left side, there are two men in a dimly lit interior space. The man on the left is older with white hair, wearing a white shirt, and appears to be speaking. The man on the right is wearing a dark suit and a hat, holding a newspaper, and has a serious expression.

On the right side, the scene shifts to an outdoor setting with a man standing in the foreground, raising his right fist in the air. He is wearing a white shirt and dark pants. In the background, there are other people dressed in suits and hats, and buildings with signs, one of which reads "OUTLET." The overall mood of the painting seems to convey a sense of social or political tension, possibly related to labor or civil rights issues. The painting style is realistic with a slightly expressionistic touch, using muted and earthy colors. The artist's signature, "Ben Shahn," is visible in the bottom right corner.