Machine Generated Data

Feature analysis

Amazon

| Rug | 61.8% |

Categories

Imagga

| paintings art | 83.4% |
| text visuals | 15.8% |

Captions

Microsoft

Created on 2021-04-03

| a close up of a logo | 77.3% |
| a close up of a piece of paper | 66.9% |
| close up of a logo | 66.8% |

OpenAI GPT

Created by gpt-4 on 2025-02-13

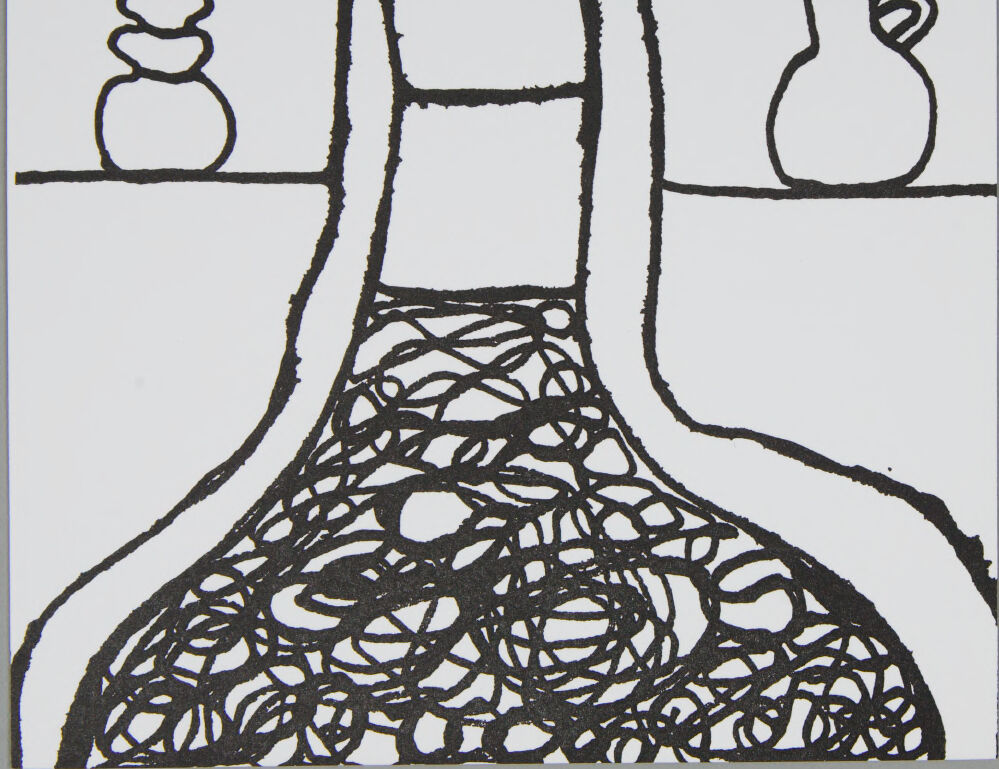

This is an image of a drawing that appears to be executed in black ink on a white background. It shows an abstract scene with a distinctive landscape-like design consisting of a horizontal line representing the ground or a table surface. On the left, there is a stacked form reminiscent of stones or a simplified cairn. In the middle, there's a larger, vertical, castle tower-like structure with a notched top, suggestive of battlements or a ruined structure. On the right, there is a simple representation of a jug or pot with a handle. Below these objects, the lower half of the image contains an intricate network of entangled lines, creating a complex, lace-like pattern that might be interpreted as a network of roots, organic entanglements, or perhaps a sort of shadow with a decorative aspect. The stark contrast between the simplicity of the objects in the upper half and the complexity of the pattern in the lower half creates a visually striking composition that plays with perception and might represent a thematic contrast between simplicity/structure and complexity/chaos.

Created by gpt-4o-2024-05-13 on 2025-02-13

The image is a simple black and white line drawing depicting a figure that appears to be seated or perhaps draped. The central figure is headless and amorphous, outlined with thick black lines. The lower part of the figure features a chaotic, scribbled pattern that could represent a garment or texture. On either side of the figure, placed on what seems to be a table or horizontal surface, are two objects. To the left, there is an object that looks like a stacked series of rounded shapes, possibly a sculptural item or an abstract form. To the right, there is a pitcher or jug with a handle, drawn in a similar thick line style. The background is plain and white, without any additional details. The overall style of the image is abstract and simplified, focusing more on form and outline rather than detailed representation.

Anthropic Claude

Created by claude-3-haiku-20240307 on 2025-02-13

The image appears to be a minimalist, abstract drawing featuring simple, line-based shapes and forms. In the center, there is a large, amorphous shape that could be interpreted as a chair or some other piece of furniture. On either side of this central shape, there are two smaller, more geometric shapes that resemble vases or pottery. The overall style of the drawing seems to be inspired by primitive or tribal art, with the emphasis on rough, gestural lines and a limited color palette of black and white.

Created by claude-3-opus-20240229 on 2025-02-13

The image is a simple line drawing depicting two small, rounded objects on either side of a larger, more elaborate central form. The central element resembles a curved, organic shape, possibly a vase or bottle, that sits on a base made up of tangled, interwoven lines. The overall composition is spare and minimalistic, rendered in black lines against a white background, giving it an abstract, stylized quality. The drawing seems to explore basic forms and the interaction between positive and negative space.

Created by claude-3-5-sonnet-20241022 on 2025-02-13

This appears to be a black and white line drawing or print featuring three vessels. The central and largest piece is a decorative bottle or vase with an intricate swirling pattern in its lower portion, created through overlapping circular designs. On either side of this main vessel are two smaller items: on the left is what appears to be a stacked or tiered decorative object, perhaps a sculpture or ornament, while on the right is a simple jug or pitcher with a handle. The style is minimalist, using bold outlines against a white background. The composition has a nice balance and symmetry to it.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-03-16

The image is a black and white drawing of a vase with a patterned base, sitting on a table. The vase is tall and narrow, with a wide base that has a pattern of squiggly lines. It is sitting on a table or shelf, with two other objects on either side of it. The object on the left is a small, round vase with a narrow neck, and the object on the right is a jug or pitcher with a handle on one side. The background of the image is a solid gray color, which provides a neutral backdrop for the vase and its surroundings. Overall, the image appears to be a simple yet elegant still life drawing, showcasing the beauty of a single vase and its placement in a room.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-03-16

The image is a black-and-white line drawing of a dress with a unique design. The dress has a high neckline and long sleeves, with a fitted bodice and a full skirt. The skirt is made up of swirling lines that resemble a mixture of scribbles and abstract shapes. In the background, there are two objects on a shelf or table. On the left side, there is a stack of three rounded shapes, possibly representing rocks or stones. On the right side, there is a pitcher or jug with a handle and spout. The overall style of the drawing is simple and minimalist, with bold lines and minimal details. The use of black and white creates a striking contrast that draws attention to the dress and its intricate design.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-02-13

The image is a black-and-white drawing on a white background. It features a central figure that appears to be a human silhouette, with a long, flowing dress or garment that covers the lower part of the body. The dress is intricately detailed with a series of circular patterns, giving it a textured and dynamic appearance. On the left side of the drawing, there is a small, round object, possibly a rock or a pebble, adding a natural element to the composition. On the right side, there is a larger, jug-like object with a handle, contributing to the overall balance of the drawing. The drawing is simple yet expressive, capturing the essence of its subjects with minimal detail.

Created by amazon.nova-pro-v1:0 on 2025-02-13

The image is a black-and-white drawing of a person with a long neck and a dress that looks like a net. The person's body is shaped like a vase, and the dress is made up of many small circles. On the left side, there is a stack of rocks, and on the right side, there is a vase with a handle. The drawing is on a white background.