Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 0-3 |
| Gender | Female, 57.4% |
| Calm | 79.3% |
| Happy | 5.5% |
| Sad | 5.4% |
| Confused | 3.4% |
| Angry | 3.2% |
| Fear | 1.5% |
| Disgusted | 0.9% |
| Surprised | 0.8% |

Feature analysis

Amazon

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 66.9% |

Categories

Imagga

Created on 2021-12-15

| Category | Confidence |
| --- | --- |
| interior objects | 85.1% |
| paintings art | 11.7% |
| text visuals | 1.5% |

Captions

Microsoft

Created by unknown on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a vintage photo of a suitcase | 38.5% |
| a vintage photo of some clothes | 38.4% |
| a vintage photo of a person | 38.3% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-15

a photograph of a baby with a dummy.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a baby in a stroller with a sign that says, i do not know

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-14

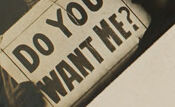

This image is a composite featuring two photographs. On the left, there is a vintage black-and-white photo showing a sign that reads, "DO YOU WANT ME?" with a rustic, dark atmosphere, including a hat and an ambiguous object in hand. On the right side, there is an antique photograph depicting a baby carriage with a child seated in it, wearing a long gown. The background includes wooden paneling, suggesting the setting is outdoors or near a building.

Created by gpt-4o-2024-08-06 on 2025-06-14

The image consists of two separate photographs. On the left, there is an image of someone holding a sign that reads "DO YOU WANT ME?" The background appears dark, and there is another person behind the sign holder who wears an elaborate hat. On the right side, there is a sepia-toned photo of a baby sitting in an old-fashioned stroller with large wheels, positioned in front of what looks like a wooden wall or siding.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-17

The image appears to be a vintage photograph depicting two separate scenes. On the left side, there is a black and white portrait of a person, likely a man, with the text "DO YOU WAIT?" written below the portrait. On the right side, there is a sepia-toned photograph of a baby or young child sitting in what appears to be an old-fashioned baby carriage or stroller.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-17

This appears to be a vintage photograph, likely from the early 1900s. The image shows a baby or young child sitting in what looks like a wooden high chair, dressed in light-colored clothing typical of that era. In the background, there appears to be a wall with wooden siding. There's also a dark photograph or card visible in the image that contains some text reading "DO YOU" though the rest is not clearly legible. The overall photograph has the sepia-toned quality characteristic of early photography, with some fading and aging visible around the edges.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-25

This image presents a vintage-style collage of two photographs, one featuring a baby in a stroller and the other showcasing two women holding a sign with the phrase "DO YOU WANT ME?" The photographs are arranged on a beige background, with the image on the right depicting a baby seated in a stroller. The baby is dressed in a white outfit and appears to be gazing directly at the camera. The stroller is positioned in front of a house with horizontal siding.

In contrast, the image on the left features two women, one of whom is holding a sign with the aforementioned phrase. The woman on the left has short hair and is attired in dark clothing, while the woman on the right wears a hat and also dons dark attire. The overall atmosphere of the image exudes a sense of nostalgia and historical significance, as evidenced by the vintage aesthetic and the use of sepia tones.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-25

The image presents a vintage-style collage featuring two distinct photographs, each with its own unique characteristics.

Left Photograph:

The left photograph is a black-and-white image of a man and woman, positioned at an angle to the left. The woman is attired in a hat and dark coat, while the man wears a dark coat and holds a sign that reads "DO YOU WANT ME?" in bold letters. The sign's background is white, and the text is in black. The photograph appears to be a vintage advertisement or promotional material.

Right Photograph:

The right photograph is a sepia-toned image of a baby sitting in a stroller. The baby is dressed in a white outfit and has a blanket draped over its lap. The stroller features a metal frame with a wooden seat and a white cushion. The background of the photograph is a wall with horizontal siding, and a wooden bench is visible behind the stroller.

Overall Composition:

The two photographs are arranged side by side, creating a sense of contrast between the formal, promotional nature of the left image and the more intimate, domestic scene depicted in the right image. The use of different colors and styles adds visual interest to the composition, making the image more engaging and dynamic.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-25

The image shows two black-and-white photographs placed side by side. The first photograph on the left shows a woman holding a sign that reads "DO YOU WANT ME?". She is wearing a hat and a long-sleeve shirt. The second photograph on the right shows a baby in a stroller. The baby is wearing a dress and has a blanket on its lap. The stroller has a handle and wheels.

Created by amazon.nova-lite-v1:0 on 2025-05-25

The image consists of two photographs. The left photo is a black-and-white portrait of two women standing together. One woman is wearing a hat and holding a sign that reads, "DO YOU WANT ME?" The other woman is wearing a long dress and a hat, and both women are looking at the camera. The right photo shows a baby sitting in a stroller. The baby is wearing a white dress and is looking directly at the camera. The stroller is positioned in front of a wooden wall.

Google Gemini

Created by gemini-2.0-flash on 2025-05-16

The image shows a photograph of a baby in a vintage-looking stroller. The baby is wearing a light-colored dress and looks directly at the camera. The stroller is ornate, with a high handle and spoked wheels. In the upper left corner of the photograph, there is a smaller, black and white photo affixed at an angle. This second photo depicts two women. One woman is wearing a hat and is positioned in the upper right, while the other is holding a sign that says "DO YOU WANT ME?". This secondary photo appears to be a form of political advocacy or appeal, likely related to women's rights or suffrage.

Created by gemini-2.0-flash-lite on 2025-05-16

Here's a description of the image:

The image is a sepia-toned photograph, likely an older picture, possibly from the early 20th century. It is composed of two distinct parts on a single surface.

Left Side:

- It features a black and white photograph of a woman, possibly a portrait. She is wearing a dark garment, and is positioned at a slight angle, gazing toward the viewer with a serious expression.

- Below the woman is another image of the same woman. The lower image has her holding a sign, and the sign reads "DO YOU WANT ME?".

Right Side:

- Shows a baby seated in a classic, old-fashioned baby carriage.

- The carriage has a metal frame and spoked wheels. The baby is wrapped in a light-colored blanket or clothing.

- The backdrop is a simple wall, likely the exterior of a building.

Overall Impression:

The juxtaposition of the woman's portraits and the baby in a carriage creates a thematic connection. The inclusion of the question "DO YOU WANT ME?" in one portrait might hint at a theme of motherhood, relationships, or the desire for companionship. The overall tone is melancholic or thought-provoking, given the sepia color and the serious expressions.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-26

The image consists of two distinct parts. On the left side, there is a black-and-white photograph featuring two women. The photograph appears to be an advertisement or promotional material, as it includes the text "DO YOU WANT ME?" The women in the photograph are dressed in what seems to be period clothing, suggesting it may be from the early 20th century. One woman is wearing a hat, and both are looking directly at the camera.

On the right side of the image, there is a photograph of a baby seated in a vintage baby carriage. The baby is dressed in a white garment and is looking directly at the camera. The baby carriage has a classic design with large wheels and a canopy. The background shows the side of a house with horizontal siding, indicating an outdoor setting.

The two photographs are juxtaposed, creating a contrast between the promotional image of the women and the more personal, familial image of the baby in the carriage.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-07

This image is a black-and-white photograph combining two distinct parts. On the left side, there is a smaller photograph showing two people. One appears to be wearing a hat and a dark jacket, while the other is in a formal, likely studio portrait pose. Below them, there is a sign with the text "DO YOU WANT ME?" displayed prominently.

On the right side of the image, there is a photograph of a baby seated in a vintage pram, dressed in a light-colored outfit, looking directly at the camera. The background features the exterior of a building with horizontal siding. The overall tone and style suggest that the photograph is historical, possibly from the early 20th century.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-07

This is a vintage-style, sepia-toned photograph with two distinct sections:

On the left side, there is an image of two women. One woman is holding a sign that reads "DO YOU WANT ME?" The woman in the foreground is wearing a cowboy hat and appears to be dressed in a style reminiscent of the early 20th century. The image has a playful or humorous tone.

On the right side, there is a photograph of a baby seated in an old-fashioned baby carriage. The baby is dressed in a light, possibly white outfit and appears to be looking directly at the camera. The carriage is ornate, with decorative elements and a cushioned seat. The photograph has a nostalgic and sentimental feel.

The photograph appears to be a composite, combining two separate images into one frame.